The Landscape of (Extended) Reality

(upbeat jingle music) (audience applauding) - Thanks hi everybody.

And I hope some of you got to catch Eika's presentation. So we did a workshop day before yesterday with Eika Odey and it was a great day.

So yeah, that's me co-founder of Awe Media. And as I said, the original evangelist for mixed reality. So we started promoting the ability to deliver this through the web, a long time ago.

Probably back at the point where it was a bit crazy. It really wasn't possible, but the vision seemed pretty clear to us.

And so the work that I have been doing is trying to push the standards and collaborate with people to drive forward through the W3C.

So through the WebRTC Working Group and the AR Community Group. And through the Khronos Group.

So if you don't know them, they developed WebGL, and they do much more of the silicon-layer standards. And things like OpenXR and OpenVX are evolving at the moment.

And then also the ISO.

If you don't know, ISO have published a reference model for mixed and augmented reality.

It takes a traditional multi-perspective architectural view, but it's a great document if you're trying to build a business case and get an overview of how all these different parts fit together.

A lot of larger organisations like to refer to that. So that's something that you might want to check out. So what I'd like to do is walk through a brief history of our journey and how we got here.

So as we said, back in 2009, really the only type of augmented reality that was working on mobile phones was geo location based.

And that was because the first Androids came out that had both a gyro, an IMU, and also a compass or magnetometer.

So you could work out roughly where someone was in the world and the direction and orientation of the device, and then you could overlay content on to the world. You could take a guess about where they were and create the illusion of showing augmented reality. So we created a platform that was a simple drag-and-drop, map-based sort of interface that let you place objects in the world.

And then you could go around and see them and we just threw it out as an experiment to see if people would like to use it.
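As an editorial illustration of the geolocation approach described above, here is a minimal TypeScript sketch, not the platform's actual code. It assumes the device reports a latitude/longitude fix and a compass heading, and it maps angle to screen position linearly, which is only an approximation. The names `bearingTo` and `screenX` are hypothetical.

```typescript
// Hypothetical helper names; a sketch of geolocation-based AR placement.
interface LatLon { lat: number; lon: number; } // both in degrees

const toRad = (deg: number): number => (deg * Math.PI) / 180;
const toDeg = (rad: number): number => (rad * 180) / Math.PI;

// Initial great-circle bearing from `from` to `to`, in degrees clockwise from north.
function bearingTo(from: LatLon, to: LatLon): number {
  const phi1 = toRad(from.lat);
  const phi2 = toRad(to.lat);
  const dLon = toRad(to.lon - from.lon);
  const y = Math.sin(dLon) * Math.cos(phi2);
  const x =
    Math.cos(phi1) * Math.sin(phi2) -
    Math.sin(phi1) * Math.cos(phi2) * Math.cos(dLon);
  return (toDeg(Math.atan2(y, x)) + 360) % 360;
}

// Horizontal screen position (0 = left edge, 1 = right edge) of a placed object,
// or null when it falls outside the camera's horizontal field of view.
function screenX(
  user: LatLon,
  headingDeg: number, // compass heading of the device, 0..360
  poi: LatLon,
  hFovDeg: number     // assumed horizontal field of view of the camera
): number | null {
  // Signed angle between where the device points and where the object is, in [-180, 180).
  const delta = ((bearingTo(user, poi) - headingDeg + 540) % 360) - 180;
  if (Math.abs(delta) > hFovDeg / 2) return null;
  return 0.5 + delta / hFovDeg; // linear approximation across the view
}
```

An object due east of the user sits in the centre of the screen when the device heading is 90 degrees, and drops out of view when the device points north with a 60 degree field of view.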

We found pretty quickly that people all over the world wanted to create content that way, but the problem was that once you'd created it through a web browser, you had to output it to access it through an application like Layar or Junaio or Wikitude. So we kept working on the R&D through that platform and extended it.

And about 2011, we really had seen that those apps were silos.

They were temporary transitions.

And we wanted to focus on a much more accessible, much more distributable format.

And so we started working with the different standards bodies through there.

That was when the grassroots AR standards organisation really kicked off.

In 2012, computer vision started to become available in the mainstream on mobile devices.

So we worked to extend that and by that time, we had really formed the vision of what we wanted to do with the web.

So that was when we first announced our augmented web platform vision.

We announced that at Augmented Reality Expo, back when it was called that, in 2012.

And luckily on stage, we had some great people: Mark Billinghurst and Bruce Sterling.

Bruce Sterling thought it was a cool idea and gave us some great feedback on that.

From there, we continued and got more involved with the different standard organisations.

And focused on some of the different verticals to learn what we could from that.

And then in 2014, we continued to evolve that and we were really hoping that things would be standardised by then, but they really weren't. There was still a long way to go before we could start shipping these as usable products in a wide mainstream sort of environment.

In 2015, again we were hoping that it would be available, but the video APIs in all of the major browsers were broken at one point or another.

That was really quite a bad year for us for standards. And we really had to delay the rolling out of this. So there was quite a big gap between the vision and the execution.

In 2016, we were hoping that Apple would've adopted WebRTC, the camera access.

But they hadn't and we didn't want to hold off any longer. So we launched the MVP of our platform that supported 360 content and VR experiences. And so we made that widely available and started getting users again from all over the world. Luckily since then, we've rolled out much more of the geolocation functionality, and Apple have also adopted WebRTC.

So in the latest update, which just happened a little while ago, iOS 11, you can now access the camera, and that lets us deliver real augmented reality. But what we've built on top of that is computer vision. So not just being able to look around and use GPS and deliver location based content.

While that's a great part of what we do, we also have Computer Vision and I'll talk more deeply about that in a second.

So what predictions did we get right? If we look back, the first one was just that mixed reality would be possible in the web.

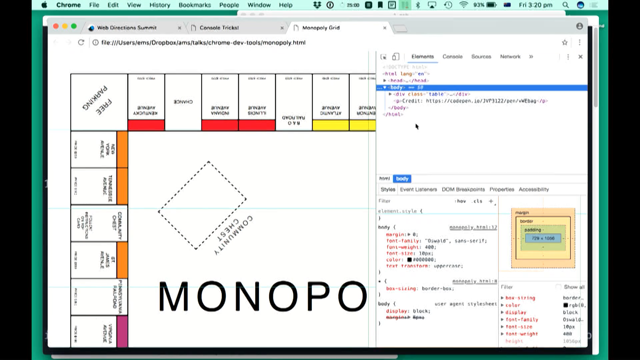

When we started this, this first diagram was from a workshop in Seoul in 2010.

It was pretty crazy back then.

It's a pretty ugly diagram too, but if you look at the standards stack, it's roughly right. Most of this stuff has come to fruition, and layers aren't that different except for the interjection of WebVR on top.

What we were also able to do was predict the specific APIs that would be important.

And there's a core set of them that we think really make or define this new generation of the web. So we were able to publish a test harness, a web page, in 2011, and it was six months from when we published this to when the first browser came along that could actually pass this test suite. And it was amazing to then quickly start seeing more and more browsers come through that did pass it. And now there are about three billion browsers worldwide that pass this sort of test harness.

Also in 2016, at the W3C WebVR Workshop, this was six or seven months before ARKit was announced, and certainly well before ARCore was announced. Our proposition was that all of the OS vendors would start making pose level tracking a standard part of what they're delivering.

We think that's something that's gonna be commoditized, and now it already has been.

So we think that all of these technologies, the vision, the trajectory for these are really, really clear. And when you have a clear vision it is quite easy to map out the path.

The really hard thing is getting the timing right. So almost every week since 2010, we've had some friendly person quote this great quote from Tim O'Reilly to us.

So: "Being too early is indistinguishable from being wrong." I think Tim's right, but he is only right if you give up too soon.

So if you pick a really clear tech vision and execute on that, then this can really play out. So let's have a look at where we are up to now. And part of what we're gonna do first is look at the different terminology.

So I'm sure a lot of you have tried the different experiences, VR and AR, and there's a lot of terminology bounced around inside the industry.

I think it's good to clarify a baseline for that first. So let's start with virtual reality.

It's very hard to pin down an exact definition of VR. You can see even back in '91 Myron Krueger was supporting Jaron Lanier and they were talking then about integrating a lot of different pieces of technology that could be seen as virtual reality.

If you look along the top, the stereoscope viewer has been around since the 1800s or 1700s, 18th Century.

And the Viewmaster was launched in 1938.

So that sort of stereo experience has been around for a very long time.

On the far side there, you can see the Daydream, so that's the sort of modern incarnation of that. You have other head mounted displays, pivot devices like the Vive and the Rift as well.

But they're all based on the same double lens model, which is really just presenting a single stereo image to each eye.

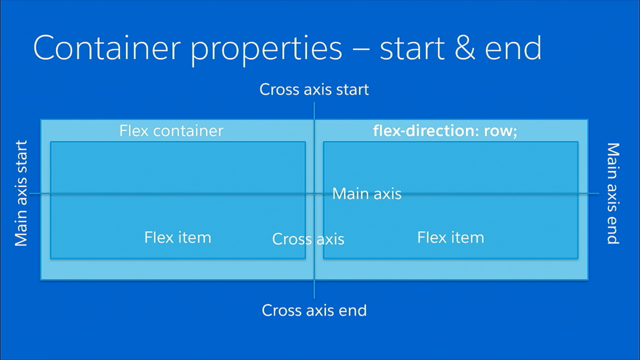

What is augmented reality? Well, there's a bit of an academic definition of AR. Ron Azuma's got probably the most referenced academic definition, and he's drawn a really bright white line around the definition of that.

And you'll see the three key points at the bottom, that he's used to define augmented reality. So the first is that it has to combine real and virtual content.

The second is that it has to be interactive in real time. And the third is that it has to be registered in 3D. And the bit that's missing from there is "from the user's perspective".

So this has been really useful for clarifying discussions certainly in academic circles.

There was a long debate for a long time about what is AR. People were talking about the first-down line in NFL games, things like that.

That's technically not AR because it's not from the first person perspective.

So this has been a great academic definition. And then in 1994, Paul Milgram came along and defined his virtuality continuum.

Again a highly referenced academic work.

This presents basically a continuum, where on one side you have reality, the fleshy meatspace that we really exist in, the uncomfortable seats you're probably sitting on. And then on the other side, the complete far extreme is the virtual world.

Now this is a bit of a false continuum because the way that we experience the world is always through our body.

There is no way to not have our proprioception and be in an embodied space.

So there's no way to get to that fully virtualized side of the diagram.

But it is useful for analysis.

And then in the middle, the two types of what's generally called the mixed realities.

So augmented reality, overlaying digital content on the real world, and then the most horrible term in the whole industry, augmented virtuality.

Basically that's projecting real, sorry, sensor data into a virtual space.

But there's a problem with the term mixed reality. I think Paul Milgram did a great job with that definition, but Microsoft started marketing content under the HoloLens mixed reality experience terminology.

And they did a great job.

And the whole industry started really aligning those two terms together, HoloLens and mixed reality. If you say to somebody, we're creating mixed reality experiences, they go cool where is the HoloLens that I can put on? So that created marketing issues for people certainly in the valley.

A bunch of the different vendors reacted against that. And Qualcomm have probably been driving the movement against that.

And so they've come up with another term called eXtended reality. They have a range of white papers and I really recommend having a look at them.

They've done a great look at what are the key components that are needed to take us to the next level of this computing revolution.

And I believe most of the technology really is the next wave of computing after mobile technology. But if you look at it, they really are just talking about mixed reality.

It fits perfectly inside Milgram's academic definition of this, so while you'll probably hear a lot of people talking about XR now, I wouldn't get bamboozled by the term.

They really are generally just talking about mixed reality. But the challenge is that it's evolved even further now. Now we have a new term, WebXR.

I'll talk a little bit about specific proposals around this in a moment, but what it's come to mean is really just what sits on top of ARKit and ARCore.

So it's not even the full spectrum of things. It's just a subset of what's effectively SLAM-lite. SLAM is a type of tracking that came from robotics. And this is really just a subset of that, and it's, I think, confusing the messaging even further. So I think we really need to be aware of how confusing this can be for the end users, and how many different silos we're just recreating.

I think the thing that really applies here is Clarke's third law.

Any sufficiently advanced technology is indistinguishable from magic.

That's really what the users want.

They don't really care what it's called.

They want to be able to use something.

They want it to be useful.

They want it to work, and they just want to have an experience.

So what I'd like to do is show you a video. It's about a year old now.

And we'll talk about some of the newer things from beyond here.

But what it does is it shows how this really is a continuum. And you can weave all of those different things together.

- Here's a presentation of Milgram's mixed reality continuum.

And this shows you the four main modes that make up this continuum.

How we go from reality on the left hand side, right through to virtual reality on the far right hand side.

And the two main modes between them.

If we have a look at what's presenting to the front of us at the moment, this is a virtual scene. So this is a 360 or VR compatible scene.

It has some virtual objects in it and you can direct these (mumbles).

And of course you can put this in the stereograph, see some experiences some sort of (mumbles). So to the left of that, we see augmented virtuality. And that's the same sort of scene but with sensor data, so a webcam view in this example, projected into the scene.

Of course that sensor data doesn't have to be just cameras. You can project other sensor data in there as well. And on the far left hand side as we said, this is reality. So this is just a plain standard view of the real world. And if we held up (mumbles) nothing happens. This is the general view of what we see, the main space that we live in.

But if we turn on augmented reality, we could see that tracking is now possible. And the digital content is overlaid on to the markers or objects that it sees.

So this is how augmented reality works.

Overlaying digital content on to the real world. But that's really just one type of sensor data; as you can see, we can project that back into the augmented virtuality scene as well.

So if we hide that, it still allows us to move an object using this marker around inside that 3D virtual space. And we could use other sensor data from mobile devices and those sorts of things as well.

And in fact, the full virtual experience play, everything is just downloaded from the virtual environment and this is virtual reality.

This really shows the four main modes that augmented reality can give, all working and the key thing here is that this is all working just inside of (mumbles).

- So that demonstration was just inside a desktop browser but it works just as well on mobile. And of course then on top of that, you can layer other spatial awareness.

So location content as well.

One thing that we find people are really engaging with is being able to create both an onsite and an offsite experience.

So if you're not at a location where the content is really presented, then you can create the sort of 360 virtual tour.

But if you're on location, then you'll get perhaps some AR way finding content.

So a classic example for that is campus guide or a museum experience.

Let's look at how you create these different experiences. This is where the web really pays off in terms of benefits.

So if you look at head mounted displays and mobile applications, the experience creation is very similar.

So it's quite a technical experience.

You generally have to be a developer.

It requires some sort of SDK or downloading an application and development kit.

You have to develop your apps.

Especially with head mounted devices, you really need the specific devices that you want to test on. You need to convert the media into the right formats. You need to sign, submit and then wait for approval through the app store.

And the app store is probably one of the biggest hurdles. You then need to publish and distribute those links. And then you often end up having to create a promotional website to drive people to that experience anyway.

If you do that through the web, then you can just open a browser, create an experience with one of the online platforms, we like to use that and then you can just upload your contents through the browser, and start manipulating things in situ, in the experience.

And it really is a truly WYSIWYG experience because you create and view what the end users are gonna see.

You're really seeing what they're gonna see. And then after that, it's ready to share.

And that's where it really pays off.

So if you look at how you view this experience, take head mounted devices or, say, the simplest example, a mobile AR app.

We've measured with some of those and there can be up to 27 distinct user steps that someone has to go through, from knowing there's an experience to actually trying that experience.

And as you'll know at each of those steps there's a dropoff rate.
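To make the drop-off point concrete, here is a small editorial sketch of the arithmetic. The 10% per-step drop-off is purely an illustrative assumption, not a figure from the talk, and the function name `completionRate` is hypothetical. It also assumes every step loses the same share of the remaining audience.

```typescript
// Fraction of people who complete every step, assuming (hypothetically) that
// each step independently loses the same share of the remaining audience.
function completionRate(steps: number, dropOffPerStep: number): number {
  return Math.pow(1 - dropOffPerStep, steps);
}

// 27 steps (the count mentioned above) at an assumed 10% drop-off per step.
const appFlow = completionRate(27, 0.1);

// A single step (tapping a shared link) at the same assumed drop-off.
const webFlow = completionRate(1, 0.1);
```

Under that assumption only around 6% of people who start the 27-step flow reach the experience, compared with 90% for a single tap.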

So 27 steps is a massive hurdle, but if you just share some web based mixed reality content, you share a link, perhaps through social media, and somebody taps on the link and it just works. But the example that we showed you before, with the tracking that used the QR code style markers, they're called fiducial markers. A fiducial marker is just basically a known thing in the landscape.

If you place a flag in a field, that's technically a fiducial marker.

The great thing about those QR code style markers is they have really clear affordances.

People look at them and they know what to do with them, and they have really clear corners.

So it's very easy for the computer vision to track them. But they're ugly.

People don't like to put them on their designs. And you'll also find that they're very easy to break. If somebody puts their thumb over one of the corners, then one of the four points is missing and the tracking just drops out completely. What we've been working on for quite a long time is porting natural feature tracking to the web, and so there's a demonstration that shows how this can be delivered using just any sort of image. This wolf, for example.

(peppy rhythmic music) So now we really have the basis of full mixed reality that just works in all of the mainstream browsers. This is supported in over three billion browsers right now.

This is really just the beginning of that basis too. So perhaps on all of the devices, the frame rate might not run quite as fast and you'll notice that still at points, the tracking is not completely robust. But we've got a lot of optimization to go yet. So we're really proud of what we've delivered and we think that it's a great starting point. And then on top of that, so if you saw Eika's presentation, being able to integrate ARKit and ARCore, to provide just general spatial tracking, that's evolving to become a web standard.

And again we'll be able to integrate that easily as well, so the focus is on what you can just use in standard web browsers.

So let's look at the different types of mixed reality. And focus on the interaction modes.

This really drives the different types of interaction design that you can deliver.

So the first is the public interaction mode. So this is generally delivered in installations, retail, and even the Xbox Kinect is probably a good example of this.

Generally the person's whole body is in the field of view. So you have some sort of sensor and some sort of projected display and the user is seeing themselves projected on to that display along with some virtual content.

You tend to have more spectators.

It's great for drawing attention or shared experiences. And the one challenge with it is that it's generally a limited distribution.

So it's either in specific lounge rooms with the Xbox or specific retail or museum installations. That sort of thing.

So it's a much smaller audience.

And quite limited for wide distribution.

The next is the personal mode.

So this is generally delivered through computers, laptops, and desktops.

Most computers now have some sort of webcam connected. Certainly if you look at laptops it's a standard part of the feature.

The person's upper body mostly, sometimes whole body, but mostly upper body is in the field of view. It has fewer spectators.

You might have a few people huddling around the computer. But generally it's just one or two people.

But the benefit is that it has much wider distribution. So you can just share this through the web and the examples you've seen so far just can be distributed en masse.

So technically this is generally referred to as a magic mirror experience.

And it's because of the physical structure of this: you're looking at a reflection of yourself. Other things that we can also support are facial tracking, and if you put the images on T-shirts and things like that, that's great.

If you're tending to hold up images to the computer, it creates quite a difficult user experience because often the object is between them and the screen. So it's quite a clunky experience, but if you're tracking faces or things printed on to people or hats, that sort of stuff, it can be quite a good experience.

The next one is the private mode.

So if the last one was the magic mirror, this is a magic window.

Or depending on your phone it could be a magic keyhole. You can have the extremities, the hand, in the field of view, but generally it's seeing through the device into the world, into the environment around you. So it tends to be one or two participants, but mostly one. The benefit of it is that it gives you much, much wider distribution.

So not only does everybody have a device like this in their pocket, but also they can then take these devices into multiple locations.

And you can adapt the content based on the location, time of day, that sort of thing.

So the interesting thing with mobile devices nowadays though is that they have multiple cameras.

And it is also possible to not just use the cameras that are taking in the environment around them, but you can also at the same time use the camera that's facing their face and use things like expressions or face tracking and other things to do more complex, more sophisticated tracking as well.

Then we move on to the next mode.

This is the intimate mode.

And this is the mixed reality that people tend to think about.

So these are things that are strapped to your head. They generally have your extremities in the field of view or, if you're talking about VR, some sort of simulated extremity using controllers. They're really focused on one direct participant. So it's our personal first person experience. They are pervasive in terms of your perception. But they have really limited distribution.

And we will talk a bit about the market sizing and the market impacts later.

But you can break these into three key modes. So the first is the tethered VR.

This is the premium VR experience.

This is what most people think of when they're talking about strapping stuff to the head at the moment, because this is what's really captured people's attention. It gives you great game experiences if you look at the Vive or the Rift, that sort of thing. But they really are limited both in terms of how much you can move around.

And also how many people have them.

There's quite a big hurdle both in terms of cost and setup time.

The next is mobile VR.

That's the Daydream, Gear VR, those sorts of things. Even Cardboard fits into this.

This is a much broader audience.

It doesn't have quite the resolution or performance that the tethered experiences do.

But it's in everybody's pocket and they can experience it whenever they want.

This is by far the dominant slice of the mixed reality experiences that people are sharing at the moment. And then at the other end, we have mobile AR/MR/XR depending on your terminology.

These are devices that actually have camera access turned on so you can see into the real world. So everything from Google Glass through to Vuzix Metaio those sorts of devices, they are getting quite a bit of traction in the enterprise space.

So there's a lot of people using these in task tracking, pick and pack sort of operations, field operations, so it's not a consumer experience but it really is getting a lot of traction in commercial enterprise space.

So what's evolving right now? If we look at it, things are still changing a lot.

While we've got the basis of mixed reality in the browsers, things are still gonna change a lot over the next few years.

One of the first things that's really changing is depth sensing.

So the ability to not only capture images of what's in front of you, but measure how far away each of those pixels is.

The first and most common example was probably the Xbox Kinect.

So that uses infrared time of flight to measure how far things are away and you can dance around and you can work out the pose of your skeleton. And capture depth buffers that describe your shape. Probably the most common small consumer device is the Leap Motion sensor, which you can see on the side here, that does hand tracking.

It's about 60 or 70 dollars. You put it in front of your computer or strap it to the front of your VR headset and you can literally just track your hands moving around. That gives you a really great sense of embodiment and you can really then start to reach into scenes and explore them.

And of course there are the stereo cameras that are being launched on the higher end devices now.

And Animojis are starting to spread.

So if you haven't seen them, you could take a standard emoji and as you move your face, you can do the sort of character mapping and record its variances. The next technology that's changing quickly at the moment is geo location.

GPS up until now has been fairly inaccurate but really useful.

It works really well especially outdoors, not so much in cities where there are canyons, but you can get down to about three metre accuracy. Indoors it's not as accurate, but you can still get pretty good precision and everybody is really comfortable now with using mapping technology on their devices. But there's a new set of chips that are starting to ship from early next year.

And so they can bring it down to about 30 centimetre accuracy.

The challenge with GPS up until now has been that as the satellite signal comes down, the length of what's effectively a ping, the length of the messages that come down, means that when they bounce along multiple paths, they all overlap. You can't easily distinguish which is the true signal and which are the reflections.

I think it's level four or layer four that is the new technology, and each of the pings is such high resolution, at a smaller time slice, that even when the messages overlap, they really don't overlap in length.

And so the first one that comes through is genuinely the first path.

And so it's much easier to discriminate to a much higher resolution.

That's gonna really change general positioning, full stop.

OS level pose tracking.

So that's the ARKit and ARCore.

That's really gotten everyone's attention and you've seen demos everywhere on the web at the moment. That's really standardising and it's only gonna get better and better.

Also on top of that, this maps back to the depth sensing as well.

You can now buy devices that have Tango included. So Tango uses the depth sensor that helps you do the sort of OS level pose tracking.

And if you saw Eika's demonstrations, the quality of tracking of a Tango device over just a standard ARCore device is a massive improvement.

Not only, just back on the Tango devices, not only do they let you do the pose tracking, but they're great for content creation as well. You can use them to just scan literally 3D objects. And so it allows people to just capture objects in space and then reuse them in their content or just leave them in place as virtual memoirs of what happened.

In terms of the standards, so WebVR is stabilising.

Up until now it's still been an evolving technology. It's not a formal W3C standard yet.

But it is becoming widely available.

So we've chosen not to make WebVR support standard in our platform up until now.

Custom application is supported.

But over the next couple of weeks we are rolling it out because we feel that it's at a point now where it is standard.

And especially for the editing experience that's great. WebVR is the basis for the projection into the new standards too.

So these are the things that we were talking about with ARCore and ARKit.

So Google's proposal that's come out of Eika's team is for WebAR.

And they've released a series of open source apps that you can just build: WebAR on ARCore, WebAR on ARKit, and WebAR on Tango.

Try saying that quickly when you've had a couple of beers. So I'd recommend checking those out.

The demos are great.

You can download them, but it requires you to compile these applications. You can't just use these in standard web browsers. So these are just experimental proposals at the moment.

The other example of that is Mozilla's proposal. So they've gone to, Blair and his team have gone to defining an actual API proposal.

But again this requires compiled applications and isn't available on any of the browsers that are there. Also there's a couple of assumptions built in that I think we need to push beyond.

So there's a certain assumption that all of this tracking has to happen at the OS level and we just pass the pose up to the API and then the apps do their work on top of that.

What we've done and what we think is really important is to be able to do computer vision up in the JavaScript layer as well.

That takes that Extensible Web Manifesto sort of approach, where you expose underlying features, you let people experiment and then when things start to stabilise, when you really see the clear patterns, then you can formalise the standards more.

And I think leaving the ability for us to pass the pixel data, right up to the JavaScript and WebGL layer and be able to experiment there is really important.

Deep or machine learning.

That's really improving fast.

You can now deliver that, run that in the GPU on the browser.

The main difference so far and this isn't gonna persist but at the moment, between machine learning or deep learning and the sort of computer vision that I was showing before, is that deep learning is generally used more for classification.

So I can see this type of object in the viewport. You may highlight the region of interest, the area that's in there, but you can't work out its pose, its orientation, that sort of thing. So traditional computer vision tracks the pose and orientation and scale of different things. That's gonna evolve and you really can't underestimate the impact that AI can have in this space, but yeah, that's moving really, really fast.

The other thing that's evolving quickly is that there are just a lot more sensors becoming available. We use a lot of these, we really heat up the phones. That's a great activity during winter, using AR in a mobile experience. Keeps your pocket warm.

More and more sensors are being made available through these devices.

There's a Generic Sensor API movement through the W3C to standardise these. One thing that's currently missing is generalised timestamping across these.

If you're using a lot of different sensors, you really want a common time code across all of them, so that's something that definitely needs to evolve still. And more cameras.

We're just gonna get more and more cameras. So we now have stereo cameras on the back of devices, different cameras on the front with IR projectors and IR cameras as well.

We're gonna have arrays of cameras on the back of devices. This is just gonna get more and more.

And we need to assume in all of these standards that we could use all of them at the same time. We're really pushing the thermal limits of the devices, but the opportunities that they create for creative experimentation and interaction are massive. So let's have a look at what are the missing pieces of the web.

So while we have mixed reality working now, there's still a lot of things that would really improve performance, really improve the ability to deliver and develop these experiences.

The first one: almost all computer vision is based on a really simple model called the pinhole camera model. And that harks back to the era when people would put themselves in a dark room, prick a hole in a blind, and the light coming through that would actually project an image on the wall.

And the mathematics of that is still what we use now. And that allows us to take the pixels of the images that we see, and work out what that pixel means in terms of where it actually is in three dimensional space. And there's a bunch of things that we use mathematically to construct that.

You need to know the size of the image, so the sensor size. You need to know the field of view.

So how wide the angle is that you're seeing, and then offsets and things like that.

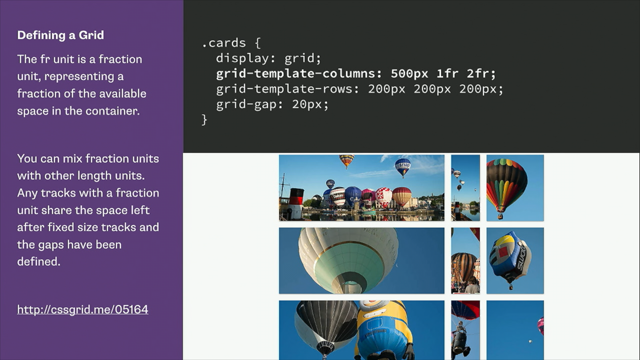

So all of those components are defined as the intrinsics of the camera.
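As a rough illustration of how those pieces fit together, here is a minimal sketch of the pinhole model in TypeScript. It assumes square pixels, no lens distortion, and a principal point at the image centre. The names `Intrinsics`, `intrinsicsFromFov`, `project` and `unproject` are made up for this example and are not part of any browser API.

```typescript
// Pinhole camera intrinsics: focal length and principal point, in pixels.
interface Intrinsics {
  fx: number; // horizontal focal length in pixels
  fy: number; // vertical focal length in pixels
  cx: number; // principal point x (the "offset")
  cy: number; // principal point y
}

// Build intrinsics from the image size and a horizontal field of view.
// Assumption: square pixels and a principal point at the image centre.
function intrinsicsFromFov(
  width: number,
  height: number,
  horizontalFovDeg: number
): Intrinsics {
  const fovRad = (horizontalFovDeg * Math.PI) / 180;
  const fx = width / 2 / Math.tan(fovRad / 2);
  return { fx: fx, fy: fx, cx: width / 2, cy: height / 2 };
}

// Project a 3D point in camera space (x right, y down, z forward) to a pixel.
function project(
  k: Intrinsics,
  point: [number, number, number]
): [number, number] {
  const x = point[0];
  const y = point[1];
  const z = point[2];
  return [k.fx * (x / z) + k.cx, k.fy * (y / z) + k.cy];
}

// Go the other way: a pixel plus a known depth gives a 3D point in camera space.
function unproject(
  k: Intrinsics,
  u: number,
  v: number,
  depth: number
): [number, number, number] {
  return [((u - k.cx) / k.fx) * depth, ((v - k.cy) / k.fy) * depth, depth];
}
```

With a 640 by 480 image and a 90 degree field of view, the focal length comes out at about 320 pixels, and a point straight ahead of the camera lands on the image centre. If the real field of view is different from the one assumed, every projected point shifts, which is why accurate intrinsics matter for registration.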

At the moment, there is no API that lets us do that. So we can use WebRTC, the getUserMedia API, and we can reach in and we can grab the pixel data. WebGL allowed us to use array buffers, and really reach in and grab each of the pixels and the channels in the pixels, and start doing real computer vision, analysing what's really there, but there's still no API that lets us introspect the camera and really learn all of the parameters that we need to make this more accurate.

We have dealt with this by making some assumptions around common devices that are out there.

Most mobile cameras have, within a range, a similar setup for these sorts of things.

And then we also let the users tune the experience so it's just visually better.

So you can make it look right.

But to move forward to get better performance and to get just better registration, we need to have an API that lets us get these intrinsics. But not only reading the details of the camera, we also need to be able to control the camera API. So Qualcomm has had a proposal out there for a while for this, it hasn't gained much traction.

It's much more focused on photography, but allows you to control the auto focus, the white balance, the brightness, all sorts of other sensitivities of the camera. Those things are really important, especially once you get into more immersive experiences.

Especially with Gear VR, or if you have a Daydream, I'd recommend hacking the corner off it with a hacksaw, literally, so then you can expose the camera. What you can do is you can then turn on the camera in that experience and create a video see-through experience, but one of the challenges at the moment is you will notice the experience jumps a little bit because of the auto focus and things like that.

So we really need to be able to control that to give real experiences.

We obviously need this one API that works across ARKit and ARCore.

That gives us the pose and the plane and then also deeper access to the point clouds.

Being able to use the point clouds to localise into a broader map is the big key missing part at the moment. If you look at all of the ARKit and ARCore experiences that you see, there are some people that have done much deeper implementations where they've integrated into cloud services, they can look and match those features to where it is in the real world, but that's a very, very custom development, very specialised area.

At the moment, all those experiences are session based. Generally the user has to mark out where they want it to be, and it's not gonna persist.

So if somebody else comes along and sees it, they're not gonna see it in exactly the same place. And one of the challenges is that those features aren't even guaranteed to be the same, not just across sessions but across versions. So if you read the ARKit docs, they explicitly say we don't guarantee that these features in the point cloud will be persistent across different versions. We may change our algorithms, and it'll just be completely different next time.

We also need optimised pixel access.

So at the moment, there's a couple of layers to this. So when we render a video in a browser, it used to be that we would just have a black box object and you can play or pause, but now we can really reach in, grab a frame, and grab each of those pixels and start doing the processing I've been talking about. All of the browsers now when they render the videos, they are already using the GPU and they've already created a texture, which is the memory that describes that frame. So they have a texture ID.

When we do the computer vision at the moment, we then have to take that frame, write it to a canvas, and then do another extraction, and then that gives us an array buffer that's got all of the pixels in it.

That's a long and complex process, that we can really avoid.

What we should be able to do is just pass through the texture ID to the GPU.

It's already there and then that saves a massive step. So that's a major optimization.
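To make the cost of the current route concrete, here is a small sketch of what happens once the pixels are in an array buffer. In a browser that buffer would typically come from the canvas 2D context, by calling `drawImage` with the video element and then reading `getImageData(...).data`, which is RGBA with four bytes per pixel. The function name `rgbaToGray` and the luminance weights are choices made for this example, not something the talk specifies.

```typescript
// Convert an RGBA pixel buffer (4 bytes per pixel, as returned by
// getImageData().data in a browser) into one grayscale byte per pixel.
// This is a common first step before feature detection on the CPU.
function rgbaToGray(
  rgba: Uint8ClampedArray,
  width: number,
  height: number
): Uint8Array {
  const pixelCount = width * height;
  if (rgba.length !== pixelCount * 4) {
    throw new Error("Buffer size does not match width * height * 4");
  }
  const gray = new Uint8Array(pixelCount);
  for (let i = 0; i < pixelCount; i++) {
    const r = rgba[i * 4];
    const g = rgba[i * 4 + 1];
    const b = rgba[i * 4 + 2];
    // Weighted sum of the colour channels (Rec. 601 luma weights);
    // the alpha channel at i * 4 + 3 is ignored.
    gray[i] = Math.round(0.299 * r + 0.587 * g + 0.114 * b);
  }
  return gray;
}
```

Every frame goes through a copy to the canvas, a copy out into the array, and then a loop like this on the CPU. Passing the existing texture straight through to the GPU would skip those copies.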

We also hear a lot of people talking about using web workers for this sort of optimization.

Web workers are great.

So they're just like running a different thread. You have the main thread that the experience runs on, and then you can run these separate experiences, code in the background.

You can do a zero copy when we pass this data through to the worker.

The challenge is that the workers actually run slower.

So we've done a lot of different experimentation to try and make multiple processes running in the background, doing this computer vision. But we find that they just run a lot slower. So the main experience is a lot more responsive. You'll find the main video stream is updating really really quickly, but then you get a sort of swimming experience with the tracking.

So we need to make sure that that's faster in the workers. So that's been through the technical aspects of it. Let's look at how the reality hype differs from the reality of the market.

And I think this is important for product designers and business people, and if you're really looking at where you're gonna focus your development efforts, it's important to see where the market is heading. So if we look at it, VR's probably had the biggest explosion, up until some of the ARKit and ARCore stuff that's happening at the moment, but VR really grabbed people's attention.

The reason that really happened is a few layers, but the first thing is that VR has things to sell. AR has really suffered from a challenge that it's generally a free piece of functionality in an app that you give away. It really struggled with the business model, whereas VR is much more aligned with the video game sort of concept, where people were selling gaming cards, NVIDIA, those sorts of companies.

It matched perfectly.

So HTC, Oculus, PlayStation they're all selling physical devices that people are paying consumer dollars for.

It makes it much easier to build a market and then much easier to put a lot of effort into marketing and promotion.

The other thing is the way that technology tends to evolve in general.

So this is some work from Paul Saffo.

He's a great futurist and I recommend looking at his work. He talks about how projection of expectation of technology tends to be linear.

That's the main line that runs up through the middle. And when we first try a technology or first implement a technology, it tends to not quite deliver what we were expecting.

So you get that gap where we over predict the change, and the experience isn't great.

And then over time, we forget about the technology. And our linear expectation is continued from that point. But technology tends to improve faster.

And you get to the point where you're past the midline and it then starts to over perform and we under predict the change.

So anybody who tried VR in the '90s with pterodactyl, I always make the joke, is probably still feeling nauseous now. It was a horrible experience.

It was really bad.

But if you look at where it's come to now, and you try the Vive and those sorts of things, it's really come a long way.

We've come on in leaps and bounds.

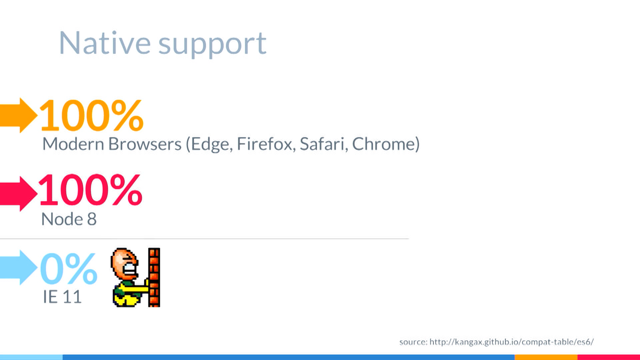

I think we're gonna go through another few waves of this. But this explains why most of these technologies even If-Pose went through a couple of waves of this where people's expectations exceeded what was possible. And in the end, it really achieved what was needed. If we look at the scale of the market too, so VR headsets are actually a really really tiny market. Looking at this slice here, the green slice is the mobile or smartphone based headsets. The PC powered headsets are the light green. And the game consoles are the blue ones.

But if you look at the total scale, for 2017, by the end of this year, it's sort of 16 to 18 million headsets. To put that in context, there will be more iPhone 8s shipped by the end of this year than there are head mounted displays on the market. And that includes Cardboard.

It really is still a very small market to address. If we look at the global mobile market, phone sales have flattened out.

Our phones are generally meeting our needs and we're holding on to them for a longer time. If you look at that red line, the peak and then the dropoff that's really how our general turnover rate is declining.

But it's interesting to see how the slices have changed. So the green bar is the Android.

And the orange is iOS.

iOS is now quite a small slice of the global market. Android really has dominated global smartphone sales. Australia and the US are quite distinctive markets in those terms.

So it's really important to keep that in mind if you're addressing a global audience.

But the good news for iOS is certainly that users adopt the new versions of the operating system quickly, so the standards get updated more quickly.

Three to four weeks after the release of iOS 11, well over half of all users had upgraded.

So that means you can now rely on iPhone users having the standards you need, where you can just send them a link and it just works. So what's likely in the future? This is a slide from Qualcomm showing what components are required in the sort of devices we'll strap to our head.

But it really is gonna take quite a long time for those to be available.

The question is whether the smartphone will evolve to become an XR wearable, or whether it will be a separate accessory to that. But either way it's gonna take three to five years. If you look at their analysis, they say that it's gonna take the same 30 year cycle that mobile phones did.

If you look at it, it's really about 2020 that we start seeing people really using these devices strapped to their head in a mainstream experience.

So that's another three or more years.

That's still a long time.

So I'd definitely focus on mobile delivery of experiences in that time period between now and then.

So we think that simple form factor devices, this is a simple iPhone case that I own.

My wife says it's the most James Bond shit that I own. It cost $11, you get it on eBay.

And it's just always with you.

It's basically Cardboard but you can pop out the experience and use AR or VR in an immersive format, wherever you are.

There's a very quick video I'll show from their Kickstarter project.

- Virtual Reality has come a long way since it was introduced right here on Kickstarter with Oculus Rift.

This is an incredible device that allowed people to experience virtual reality for the very first time.

Then Google introduced Cardboard.

To tap into the power of the mobile device to make VR more accessible.

Imagine anywhere you go, VR content just moments away. Imagine being able to share those virtual reality experiences with your friends, with your family, on the front lawn or on top of a mountain.

And that's what's so exciting, 'cos technology that's accessible, something that you could share with your friends and family, is technology that's most meaningful. - And I think that's the key point.

The accessibility, the shareability.

The key messages that I'd like you to take away from this presentation, WebVR is here now.

WebAR is here now.

We are really focused on removing the friction, changing the way people think about how they create and deliver VR and AR experiences.

So mixed reality now runs in the browsers, in your pocket or in your computer.

Thank you very much.

(audience applauding) (pleasant jingle music)

It feels like Virtual Reality is everywhere you look over the last year. For a technology that’s over 55 years in the making, it’s taken a long time for VR to become an “overnight success”. What’s driving this buzz and how does VR relate to Augmented, Mixed and Extended Reality?

We’ll set the context for how these new technologies create “Immersive” experiences and why this is the key defining factor that will drive the next computing revolution, much like mobile did over the last 10-15 years, and the PC did before that.

We’ll explore the key components that define these technologies and show you how you can start using them to solve design problems right now. Learn how you can use the Web to extend reality creating a friction-free, seamless experience for people across multiple devices (not just AR and VR goggles). Find out about the real world constraints that you need to keep in mind and how this is likely to evolve.