The State of Augmented Reality in the Web Platform

Ada Rose Cannon: Hi, everyone.

I hope you're enjoying Web Directions Code.

I'm Ada Rose Cannon, a developer advocate for the web browser Samsung Internet.

Samsung Internet is an Android web browser with a focus on user privacy.

I'm also co-chair of the W3C immersive web groups.

These are the groups which are developing the web APIs that allow web browsers to use immersive hardware, such as virtual reality headsets and augmented reality equipment, to either put users into virtual worlds or to bring virtual content into the user's environment.

The main API used for this is WebXR.

WebXR is an API that gives you access to the positional information of immersive hardware, so you know what the user is looking at and what they're doing.

It also gives you access to displays so that you can render WebGL content from the user's perspective directly to the displays on the user's device.

And it does this in a very efficient manner.

A lot of the difficult problems with designing WebXR are related to working within a very tight frame budget and maintaining consistent performance.

WebXR can be used to produce virtual reality and augmented reality content.

In this talk, I'm going to focus on augmented reality or AR for short.

Augmented reality is where virtual content gets placed into the user's environment.

Sometimes people refer to more advanced augmented reality as mixed reality, but to keep things simple, I will call it all augmented reality throughout this talk.

The APIs to work with augmented reality are pretty new, but they are available to use in browsers today.

There are two main ways augmented reality is done today: immersive headsets like the Microsoft HoloLens 2, and handheld devices like modern smartphones.

When you are using hardware like that and a web browser which supports the WebXR APIs, you can make an augmented reality experience.

As of today, September 2021, these are the browsers which support WebXR: Google Chrome on Android devices; Samsung Internet, also on Android devices; Microsoft Edge on the HoloLens 2; and the Oculus web browser on the Oculus Quest and the Oculus Quest 2.

Also some desktop browsers can work with tethered devices, but the situation there is more complex.

I'm sorry to say for iPhone users, Safari doesn't yet support WebXR, but it is in development.

So stay tuned and hopefully we'll hear something announced soon.

Here is a WebXR demonstration running in Samsung Internet.

It uses the WebXR API to place some virtual content in the real world.

This example is a rollercoaster designer, in which the user places items like the rollercoaster station or the various nodes in order to build the track.

When it's complete, the rollercoaster runs. And here is the exact same demo running in Microsoft Edge on the HoloLens 2.

So we start the rollercoaster here, and then we go up to here, and then we bounce up here and go up, and then go down to here and then go back.

All right.

I've made no changes to the code to support this.

Nor does it even run down different code paths.

The abstractions provided by WebXR allow us as developers to support multiple AR formats without needing to worry about all the hardware differences.

The ability to support unexpected hardware is one of the superpowers of the web.

No one was planning to support smartphones when old sites like the original Space Jam website were developed.

Yet it continues to work on this new hardware.

WebXR is designed with this behavior in mind.

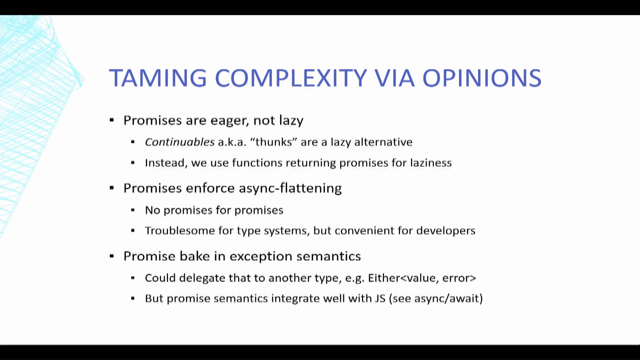

It's been built to last long into the future, because the first attempt at immersive web technology, WebVR, unfortunately had to be deprecated because it was not future-proof.

It was an API designed to only support virtual reality, using what was known about the hardware at the time.

But as the capabilities of immersive hardware improved and augmented reality started becoming a viable thing that people were working on, it quickly became evident that WebVR would not be sufficient to last into the future, for two main reasons.

For one, WebVR was not designed around the level of world understanding required to do augmented reality.

In addition, different sorts of immersive hardware would have different sets of capabilities, and no one piece of hardware would have every single possible feature.

So if the API had had support for all the features, no single device could support the whole spec. But WebXR is built differently.

So WebXR, the API I'm talking about today, is the successor to WebVR.

And it's designed to mitigate the limitations we found when we were building WebVR.

It does this by being modular.

So as new capabilities are available through the hardware, the API specification can be updated by introducing new modules to support the new features, rather than needing to update the whole spec.

To demonstrate this, I'm going to talk about four features that all got introduced at different times, but now work together to produce an excellent augmented reality experience.

So let's build up a scene together, step by step, enabling each feature as we go so we can demonstrate how they work.

These are all features which are handled in the popular A-Frame framework today.

A-Frame is based around HTML.

It has custom components for all kinds of different WebGL and WebXR features.

And because it's made with HTML, I can show the code for how to enable each feature on a single slide, which is great for a talk like this.

And there's great documentation for working with WebXR through other libraries as well.

The website immersiveweb.dev has a great set of tutorials for getting started with different frameworks.

The Core WebXR module is designed to do two things well.

A simple virtual reality experience with basic controller input.

And it's also designed to be extendable with modules.

The first of these modules that was added was simple augmented reality support.

This doesn't do any environmental tracking or any other advanced features. But if your object doesn't need to be affixed to any physical location and can just float in the air, then to be honest, this might even be enough.

WebXR is designed to allow developers to build in such a way that they can use whatever features are available on the device.

On the web this is known as progressive enhancement, and for WebXR this means you can build a single experience that supports virtual reality and augmented reality, depending on the device being used.
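
As a minimal sketch of that progressive enhancement, the snippet below asks which immersive modes a device supports and prefers AR over VR. `isSessionSupported` and the mode strings come from the core WebXR API; the `XrSystemLike` interface and the `chooseMode` and `detectMode` helpers are hypothetical names used only for this illustration, standing in for `navigator.xr` so the code does not depend on WebXR typings.

```typescript
type ImmersiveMode = "immersive-ar" | "immersive-vr";

// Hypothetical stand-in for navigator.xr, limited to the one method used here.
interface XrSystemLike {
  isSessionSupported(mode: ImmersiveMode): Promise<boolean>;
}

// Prefer AR when the device offers it, fall back to VR,
// and return null when the page should stay a normal flat page.
function chooseMode(arSupported: boolean, vrSupported: boolean): ImmersiveMode | null {
  if (arSupported) return "immersive-ar";
  if (vrSupported) return "immersive-vr";
  return null;
}

// Ask the browser about both modes and pick the best available one.
function detectMode(xr: XrSystemLike | undefined): Promise<ImmersiveMode | null> {
  if (!xr) return Promise.resolve<ImmersiveMode | null>(null); // browser has no WebXR
  return Promise.all([
    xr.isSessionSupported("immersive-ar"),
    xr.isSessionSupported("immersive-vr"),
  ]).then(([ar, vr]) => chooseMode(ar, vr));
}
```

In a browser you would pass `navigator.xr` to `detectMode` and use the result to decide which "Enter AR" or "Enter VR" button to show.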

But if you want to support VR and AR together, there are a couple of things you should be aware of.

So firstly, your virtual reality scene probably has some kind of background content like a floor and a sky so that the user isn't floating in an endless void.

But this background content gets in the way in augmented reality, because reality already has a floor and a sky and an environment around the user.

So you need to make sure that your environmental elements all get hidden in augmented reality.

A-Frame has a really handy hide-on-enter-ar component, which does just that: you add hide-on-enter-ar to the elements you want to hide.

And when the user enters augmented reality, these elements get hidden.

Generally, you apply this to any lights or 3D models you want to disappear in augmented reality, the ones that don't make much sense there.
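
Outside A-Frame, the same idea can be sketched by hand. This is only an illustration, assuming you keep a list of your backdrop objects and that each has a `visible` flag (as a three.js `Object3D` does); `HideableObject` and `setEnvironmentVisible` are hypothetical names, not part of any library.

```typescript
// Anything with a `visible` flag, for example a three.js Object3D.
interface HideableObject {
  visible: boolean;
}

// Hide the VR-only backdrop (floor, sky, extra lights) while an AR session
// is running, and show it again once the session has ended.
function setEnvironmentVisible(environment: HideableObject[], inAugmentedReality: boolean): void {
  environment.forEach((object) => {
    object.visible = !inAugmentedReality;
  });
}
```

You would call it with `true` when an `immersive-ar` session starts and with `false` from the session's `end` event.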

Another thing you should be aware of is that, compared to virtual reality, when you build an augmented reality scene you need to be aware of some new constraints: size constraints.

Because when the user is in virtual reality, they exist in an infinite void.

So there is no limit to the size of the objects you can place. You can put objects like the Eiffel Tower in front of the user.

When the user uses augmented reality to place objects in their environment, space is no longer infinite.

You have to fit the objects to the user's environment.

So the virtual objects you place should probably have a footprint smaller than a few square meters, because if you get much larger than that, the user is going to have a difficult time fitting it into their real environment.

Because if they're inside, which they most likely will be, they won't have that much space.

Once you've done that, this is what WebXR looks like with none of the additional features, just augmented reality.

The device sets the origin of the scene to its best-guess location, usually trying to place it on the floor.

Next we have lighting estimation.

The WebXR lighting estimation API is an important API for ensuring the augmented reality content looks like a believable part of the user's real-world environment.

And it does this by giving you a few different things.

Firstly, the primary light direction and the major lights in the scene.

The major lights in the scene are given to you in a format called spherical harmonics.

It also gives you an estimate of what the user's environment looks like as a cube map, which lets you have pretty realistic reflections on a shiny object.

And actually using this is easier than you'd expect.

A-Frame has the background component.

And the job of the background component is to automatically generate the background reflections from the scene.

For virtual reality and normal WebGL, it does this based on the virtual content.

But for augmented reality, it uses lighting estimation to supply the cube map for the reflections, which gives you realistic reflections on shiny objects.

If you give it a directional light, it will take control of it to set its color and direction to match those of the real environment.

It will also create a special probe light from the spherical harmonic information and add that to the scene as well.

All of this put together can make virtual objects look like they fit almost seamlessly into the real world.
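
To give a rough idea of what "taking control of a directional light" involves, here is a sketch that turns a light estimate into settings for one light. The two fields mirror `primaryLightDirection` and `primaryLightIntensity` on the light estimate from the WebXR Lighting Estimation module (the RGB intensity arrives in `x`, `y`, `z`). The normalization step, and the names `LightEstimateLike`, `DirectionalLightSettings` and `toDirectionalLight`, are assumptions for this example rather than how A-Frame necessarily does it.

```typescript
interface LightPoint {
  x: number;
  y: number;
  z: number;
}

// Hypothetical subset of a WebXR light estimate.
interface LightEstimateLike {
  primaryLightDirection: LightPoint; // direction towards the main light
  primaryLightIntensity: LightPoint; // RGB intensity stored in x, y, z
}

interface DirectionalLightSettings {
  direction: LightPoint;
  color: { r: number; g: number; b: number };
  intensity: number;
}

function toDirectionalLight(estimate: LightEstimateLike): DirectionalLightSettings {
  const dir = estimate.primaryLightDirection;
  const rgb = estimate.primaryLightIntensity;
  // Bright light: scale so the strongest channel becomes 1 and carry the rest
  // as intensity. Dim light (all channels below 1): keep the raw color, intensity 1.
  const intensity = Math.max(1, rgb.x, rgb.y, rgb.z);
  return {
    direction: { x: dir.x, y: dir.y, z: dir.z },
    color: { r: rgb.x / intensity, g: rgb.y / intensity, b: rgb.z / intensity },
    intensity,
  };
}
```

The spherical harmonics coefficients and the reflection cube map would be fed to the rendering engine separately; they are left out here to keep the sketch short.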

It's quite remarkable.

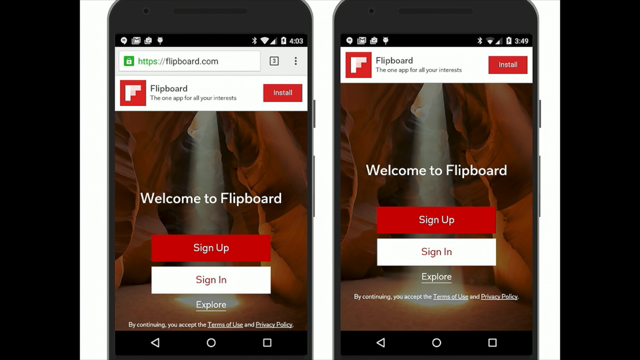

The next API I want to talk about is a really important one.

It's called DOM overlays.

So even though handheld augmented reality looks like it's just a normal webpage going full screen, in reality it's a special mode where the browser can composite the 3D content and the real-world content together.

Normally this prevents you from including any content from the HTML document.

This is unfortunate because it's throwing away one of the web's biggest perks. The normal web is very powerful: it has built-in features for accessibility and for handling user interactions using JavaScript.

The DOM overlay API restores all of these features by allowing a full-screen overlay of HTML and CSS content.

It allows you to use all of the content you expect, like links. You can do things like text selection, and the accessibility tools still work.

One thing that you should be aware of is that when your users interact with the content in the DOM overlay, it also fires a select event on the underlying scene.

The select event is kind of like the click event for WebXR.

Now, this is really useful if the DOM overlay only contains informational content where the user expects to be able to tap through the DOM content in order to hit the scene underneath.

But if you want the user to interact with it, say you have buttons and form elements and things like that, then you probably don't want to be doing both at the same time.

And there is a thing to help you do that.

There is an event called the beforexrselect event.

You can add an event listener for beforexrselect to your DOM overlay or to individual elements, and cancel the select event entirely by calling event.preventDefault().

This is incredibly useful for combining WebXR select-based interactions with DOM and JavaScript-style interactions in the same scene.
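
A minimal sketch of that listener is below. The `beforexrselect` event name and `preventDefault()` are from the DOM overlay module; `OverlayTargetLike`, `OverlayEventLike` and `keepTapsInOverlay` are hypothetical names, typed loosely so the function accepts a real DOM element as well as a test double.

```typescript
// Hypothetical minimal shapes for the event and the element it fires on.
interface OverlayEventLike {
  preventDefault(): void;
}

interface OverlayTargetLike {
  addEventListener(type: string, listener: (ev: OverlayEventLike) => void): void;
}

// Stop taps on this element (a button, a form, or the whole overlay)
// from also firing a WebXR select event on the scene underneath.
function keepTapsInOverlay(element: OverlayTargetLike): void {
  element.addEventListener("beforexrselect", (ev) => {
    ev.preventDefault();
  });
}
```

Applying it only to interactive controls, rather than to the whole overlay, keeps purely informational content tap-through.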

This is a really fantastic API for building accessible user interfaces for augmented reality.

Unfortunately right now it's only available on handheld augmented reality devices.

And the reason for this is that defining what a full-screen overlay looks like for virtual reality or head-mounted displays is a very difficult question to answer, because in that situation the user is placed entirely in the scene.

So do you make it cover the user's entire field of view?

Do you place it a bit further away from the user and have it placed into their environment somewhere?

Or do you have it floating around the user?

It's a really difficult question to answer.

And it's not one we've been able to solve.

We are looking into other methods for providing more arbitrary areas of DOM content so that you can use HTML and CSS inside of immersive scenes.

But right now this is a long way down the road.

If you want to use the DOM overlay in A-Frame, then on the webxr component on a-scene, you set overlayElement to be the selector string for the element you want to be made full screen.

A-Frame will also add the CSS to make it appear full screen over the scene before the user enters augmented reality.

So you can maintain a consistent experience.
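
For comparison, this is roughly what requesting the overlay looks like without a framework. The feature strings (`dom-overlay`, `hit-test`, `anchors`, `light-estimation`) and the `domOverlay.root` option are defined by the WebXR modules; the `ArSessionInit` type and `buildArSessionInit` helper are hypothetical and exist only for this sketch.

```typescript
// Hypothetical, simplified version of the options passed when requesting a session.
interface ArSessionInit {
  optionalFeatures: string[];
  domOverlay?: { root: object };
}

// Build session options, asking for the DOM overlay only when there is an
// element to show. Everything is optional so the session can still start on
// devices that lack a given module.
function buildArSessionInit(overlayRoot: object | null): ArSessionInit {
  const init: ArSessionInit = {
    optionalFeatures: ["hit-test", "anchors", "light-estimation"],
  };
  if (overlayRoot !== null) {
    init.optionalFeatures.push("dom-overlay");
    init.domOverlay = { root: overlayRoot };
  }
  return init;
}
```

In a browser the result would be passed as the second argument to `navigator.xr.requestSession("immersive-ar", init)`, with `overlayRoot` being the element to display full screen.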

The next API I want to talk about is the hit-test API.

The hit-test API finds where real surfaces exist in the virtual space.

These are surfaces such as floors and walls, tables, and chairs.

The API works by following the line in the direction of a WebXR space.

For example, the pointing ray from a controller or the gaze from the viewer.

When this line touches a real-world object, the position is returned to the developer for them to use however they want.

The main use case for it is to place an object at that point. If you place an object at that point, it will appear to be resting upon the detected surface.
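
Here is a small sketch of reading a hit-test result each frame. `getHitTestResults` on the frame and `getPose` on a result are part of the WebXR Hit Test module; the `HitFrameLike` and `HitResultLike` interfaces are cut-down, hypothetical stand-ins for the real objects, and `firstHitPosition` is a made-up helper name.

```typescript
interface HitPoint {
  x: number;
  y: number;
  z: number;
}

// Hypothetical subsets of the hit-test result and the XR frame.
interface HitResultLike {
  getPose(baseSpace: object): { transform: { position: HitPoint } } | null;
}

interface HitFrameLike {
  getHitTestResults(hitTestSource: object): HitResultLike[];
}

// Return where the ray first touches a real surface, expressed in the
// scene's reference space, or null if nothing is hit this frame.
function firstHitPosition(
  frame: HitFrameLike,
  hitTestSource: object,
  referenceSpace: object
): HitPoint | null {
  const results = frame.getHitTestResults(hitTestSource);
  const nearest = results[0]; // results are ordered nearest first
  if (!nearest) return null;
  const pose = nearest.getPose(referenceSpace);
  return pose ? pose.transform.position : null;
}
```

The hit-test source itself would be created once, for example with `session.requestHitTestSource({ space: viewerSpace })`, and reused on every frame.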

To use this in A-Frame, you use the ar-hit-test component on the a-scene element to target a particular object.

This will automatically generate a placement reticle based on the footprint of this object.

So the users can see how large it's going to be when they're going to place it into their environment.

It also fires events so that you can inform the user of the status of the hit test system and make interactions based around placing of objects.

The next API we're going to talk about is a pretty subtle one, but it's very important.

Anchors.

So anchors are a fairly new API, but they work to solve a subtle and difficult problem in augmented reality.

But to help understand it, I must first explain a little bit about how AR systems work.

When an AR system first starts up, it makes a lot of assumptions about the shape of the world to balance start-up time with the visual fidelity of the AR experience.

But over time, the AR system's knowledge of the world improves.

And this may invalidate some of the previous assumptions it had made.

For example, the height of the floor or the sizes of particular objects and landmarks.

When this happens, the shape of the world is updated.

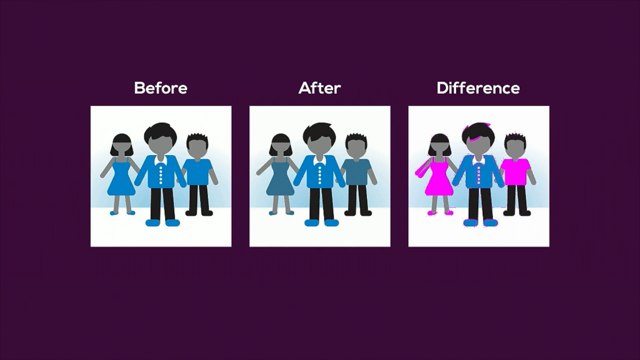

So as developers, we rely on this information to place our virtual objects onto the real world.

But as the known shape of the world changes, objects may appear to drift in comparison to their real world counterparts.

If we placed an object on a table that the system had previously assumed was one meter off the ground, but which turned out to be one meter and 10 centimeters off the ground, then the object will appear to drift as the system learns more information.

But this is where anchors come in.

Anchors tell the system, "this point should stay near this physical real-world location.

So tell me when it needs to move in the virtual space so that it can stay in the same place in the real world".

This has the effect that even though objects may move in the virtual space, they actually appear to not move at all when viewed through the device, which is a fantastic experience.

It really makes objects feel a lot more anchored in the real world because they don't drift around and shift in place.
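
A sketch of what following an anchor looks like per frame is below. `trackedAnchors` and `getPose` on the frame and `anchorSpace` on the anchor are from the WebXR Anchors module; the interfaces here are hypothetical, trimmed-down stand-ins, and `updateAnchoredObjects` is an illustrative helper rather than A-Frame's implementation.

```typescript
interface AnchorPoint {
  x: number;
  y: number;
  z: number;
}

// Hypothetical subsets of the anchor and the XR frame.
interface AnchorLike {
  anchorSpace: object;
}

interface AnchorFrameLike {
  trackedAnchors?: { has(anchor: AnchorLike): boolean };
  getPose(space: object, baseSpace: object): { transform: { position: AnchorPoint } } | null;
}

interface AnchoredObject {
  anchor: AnchorLike;
  position: AnchorPoint;
}

// Every frame, move each virtual object to wherever its anchor now sits in
// virtual space, so that it stays put relative to the real world.
function updateAnchoredObjects(
  frame: AnchorFrameLike,
  referenceSpace: object,
  objects: AnchoredObject[]
): void {
  objects.forEach((object) => {
    // Leave the object where it was if the system is not tracking this anchor.
    if (!frame.trackedAnchors || !frame.trackedAnchors.has(object.anchor)) return;
    const pose = frame.getPose(object.anchor.anchorSpace, referenceSpace);
    if (pose) {
      const p = pose.transform.position;
      object.position = { x: p.x, y: p.y, z: p.z };
    }
  });
}
```

The anchor would typically be created from a hit-test result, for example with `hitTestResult.createAnchor()`, at the moment the user places the object.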

This feature is really useful.

It goes hand in hand with hit-testing, and that's how it's set up in A-Frame.

So in A-Frame, if you place an object using the ar-hit-test component, it will automatically generate an anchor for it as well.

You don't need to write any additional code to handle that, but I thought it would be an important feature to point out.

Now we've built a neat augmented reality experience together, by placing an object in the real world.

I've built a more complete demo you can use to get started rapidly.

It's ar-starter-kit.glitch.me.

It implements all of the features I've described above.

It's on Glitch, so you can use the remix button to make your own version.

It has some nice-to-have features implemented already so that you can get started on the interesting parts of WebXR without needing to worry about the basics of putting an object into a scene.

My personal favorite feature is the automatic reticle generation, which I demonstrated earlier. This is really powerful for ensuring that the objects we place fit in the real environment for the user.

I also really like the fancy augmented reality shadows it adds as well.

Shadows are fantastic at helping the illusion that an object is part of the real world.

An interesting side note: because of the way holographic displays work, they are incapable of darkening the environment.

So things like shadows are actually impossible there.

But this shadow component does work really well on mobile phones or anything where the AR stream is overlaid on top of a camera feed.

Finally, it also contains a sample component for firing click events when you tap on augmented reality objects.

This will help you build lots of things.

But more importantly, it's designed to give you an idea of how to write code which responds to WebXR events and can work out the position of the real hardware in 3D space.

Because although the API makes it pretty accessible, this is still a surprisingly tricky process to do.

And I'm going to talk about that in the next section.

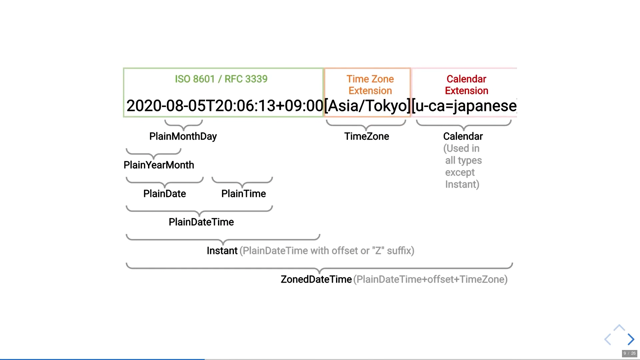

Two important concepts with WebXR are spaces and frames.

Spaces represent the positions of pieces of hardware or particular tracked points.

The artifact you want to extract from a WebXR space is a coordinate in your virtual 3D space, at a particular time, which you can use to place a 3D model.

But to get that, we need to think in more detail about what that actually means.

Because augmented reality has to exist in the real world.

And the real world does not have any innate coordinate system.

The up direction is entirely dependent on where you are on the planet.

If you are in space, what does "up" even mean?

And yes, this was a real issue we had to think about, because there are HoloLenses on the International Space Station.

In reality, a 0, 0, 0 point is nonsensical.

Should it be at zero latitude and zero longitude on the Earth's surface? But then what if you're not on the Earth's surface?

Does it need to be the center of the Earth?

What happens if you aren't on the Earth, if you are on the Moon or Mars?

The web has got to be future-proof, and it's not an unrealistic scenario that the WebXR API will be used on off-world missions.

Web APIs have already been used in space travel.

So how do you deal with it?

Well, the trick is to abandon the idea of an absolute coordinate system entirely.

Instead, we use a relative coordinate system.

So when you start your scene, you request a reference space, and this is the space to which all other spaces are compared.

The other issue we need to solve is time.

So to make this make sense, we need to break down how animation works.

Because after all, WebXR is 3D animated content.

It uses standard animation concepts to work.

So animation is accomplished by displaying static images rapidly one after the other, in what is known as frames.

For XR, depending on the system you are on, rendering each frame can take up to 16 milliseconds.

But often a lot less time because XR devices tend to run at faster frame rates.

The time between each frame is the time you use to do your calculations in order to show the next frame.

The objects being tracked by WebXR are moving and rotating at different speeds.

For example, the head, the hands and some tracked anchors will all move differently and continuously over time. After all, even though WebXR might be running at 60 or 90 or 120 frames per second, people will be moving continually over the course of a frame.

And the difference between the positions at the beginning of each frame and the end of the frame will be notable enough to cause inconsistencies.

So the best time to get the position is immediately before the frame is shown to the user.

But this is not a realistic expectation, because the last step before it's shown to the user, the rendering step, may alone take several milliseconds.

But if you use the position at the time the code runs, so near the beginning of the frame, then by the time it's shown to the user you will have inconsistencies in the placement of these virtual objects.

At the least, this can have the effect of breaking the illusion of augmented reality. At worst, it could make the user ill.

XR systems have the capability to estimate the future position of an object based on its current velocity and angular velocity.

So the API is designed so that when you request the position of an object, what you actually get is an estimate of its future position at the time the current frame is due to be running.
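
To make the idea of prediction concrete, here is a deliberately simplified sketch using straight-line extrapolation: the future position is the current position plus velocity multiplied by the time until the frame is displayed. Real XR runtimes use more sophisticated tracking and also predict rotation from angular velocity; the `predictPosition` function and `MotionVector` type are hypothetical and only illustrate the principle.

```typescript
interface MotionVector {
  x: number;
  y: number;
  z: number;
}

// Estimate where a tracked point will be when the frame reaches the display.
// position is in meters, velocity in meters per second.
function predictPosition(
  position: MotionVector,
  velocity: MotionVector,
  secondsUntilDisplay: number
): MotionVector {
  return {
    x: position.x + velocity.x * secondsUntilDisplay,
    y: position.y + velocity.y * secondsUntilDisplay,
    z: position.z + velocity.z * secondsUntilDisplay,
  };
}
```

With WebXR you never write this yourself: the value you read from the frame has already been predicted by the system.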

Putting the relative position and the predictive capabilities together, we get what is called a pose.

And a pose is an absolute position in virtual space at the point in time the frame will be shown to the user.

These poses have numerical values for positions and orientations, which we can use directly in our 3D engine to render the object.

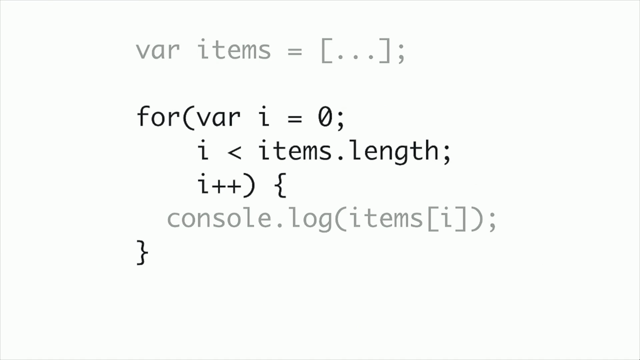

Getting the pose is where the WebXR frames come into play.

The frames are provided as part of the requestAnimationFrame callback for WebXR.

Each frame represents the point in time when the user will see the frame.

So we have the reference space we requested when we started the scene, and we have the space we want to find the position of.

And in code, the final request looks like this.

This example is for getting the position and orientation of a controller's targetRaySpace.

So you can tell what the controller is pointing at.

So we call getPose on the frame with the space of the object we want to get the position and orientation of, and the reference space for the whole scene.

This gives us the transform property on the pose, which gives us this raw information.
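
The slide itself is not part of this transcript, so the following is a reconstruction of the general shape of that request rather than the original code. `getPose`, `targetRaySpace`, and the `transform` with its `position` and `orientation` are from the core WebXR API; `PoseFrameLike`, `InputSourceLike` and `controllerRay` are hypothetical names used to keep the sketch free of WebXR typings.

```typescript
interface RayPosition {
  x: number;
  y: number;
  z: number;
}

interface RayOrientation {
  x: number;
  y: number;
  z: number;
  w: number; // orientation is a quaternion
}

interface RigidTransformLike {
  position: RayPosition;
  orientation: RayOrientation;
}

// Hypothetical subsets of the XR frame and an input source (a controller).
interface PoseFrameLike {
  getPose(space: object, baseSpace: object): { transform: RigidTransformLike } | null;
}

interface InputSourceLike {
  targetRaySpace: object;
}

// Where is the controller pointing, relative to the scene's reference space,
// at the time this frame will be shown? Returns null if tracking is lost.
function controllerRay(
  frame: PoseFrameLike,
  inputSource: InputSourceLike,
  referenceSpace: object
): RigidTransformLike | null {
  const pose = frame.getPose(inputSource.targetRaySpace, referenceSpace);
  return pose ? pose.transform : null;
}
```

The returned position and orientation can be copied straight onto an object in your 3D engine, for example a pointer line or cursor.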

I know this is complex, and if you are struggling with it, I'm always happy to point you towards some code samples demonstrating how to use each feature. Although we've done the best we can to make this API friendly for developers, it can be intimidating to work with the first time.

If you want to reach out to me, you can find me on Twitter at @adarosecannon.

And you can also email the team at webadvocacy@samsung.com.

I talked about some of the APIs you can use today, but there are more APIs coming in the future to further improve the augmented reality experience.

These specs are less baked than the ones I mentioned earlier.

They're either behind a flag in browsers or some of them are even still being designed.

You can read more about them on the immersive web GitHub organization.

Getting feedback on these APIs is really useful to the immersive web groups.

So if you think they are going to be useful to you or you have good use cases for them, it's really valuable to us to help justify the ongoing development of these specs.

So please let us know.

The first thing I want to talk about is depth sensing.

Depth sensing is really nice.

It gives you access to a depth map of the scene from the viewing device's point of view.

And you can use this for a few different scenarios, such as physics simulations and mapping the environment.

But one of the things that you can use it for which can benefit every AR scenario, is scene occlusion.

By occlusion, I mean you can work out when real objects occlude virtual objects, so that when a person walks in front of your virtual object or your virtual companion, or the object is moved under a table, or you change your position so that there is now an object in between you and the virtual object, the virtual object can no longer be seen.

Whereas normally it will still be placed on top of the table, which looks a bit weird.

This effect is a remarkable improvement in terms of visual fidelity and believability for augmented reality.
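
At its core, the occlusion test is a comparison of two distances for the same point on screen. The sketch below assumes a depth object with a `getDepthInMeters(x, y)` method, modelled on the CPU depth information in the WebXR Depth Sensing module; since that spec was still changing when this talk was given, treat the exact method shape, the normalized coordinates and the "0 means no data" convention as assumptions. `DepthInfoLike` and `isOccluded` are hypothetical names.

```typescript
// Hypothetical stand-in for the depth information delivered with a frame.
interface DepthInfoLike {
  // x and y are assumed to be normalized view coordinates between 0 and 1.
  getDepthInMeters(x: number, y: number): number;
}

// A virtual point is hidden when a real surface is closer to the camera than
// the virtual point is. A depth of 0 is treated as "no data" (an assumption).
function isOccluded(
  depth: DepthInfoLike,
  x: number,
  y: number,
  virtualDistanceMeters: number
): boolean {
  const realDistance = depth.getDepthInMeters(x, y);
  return realDistance > 0 && realDistance < virtualDistanceMeters;
}
```

In practice this comparison is usually done per pixel on the GPU, but the logic is the same.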

A related API to this is the real-world geometry API, which gets you similar information but in a different format. Instead of giving you a picture of the depth from the camera, it gives you actual 3D geometry you can use.

Some techniques prefer using geometry like this to do occlusion, but one of the really great use cases is to use it for physics engines.

So you use it as the mesh for your physics, and then you can have virtual objects rolling and falling off a real table.

You can also use it to build 3D scans of the environment, or it's going to be useful for building augmented reality architecture tools.

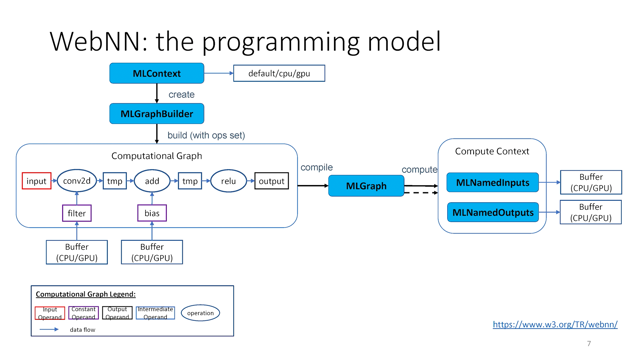

The next API, raw camera access, is a really interesting one.

It gives you raw camera information in formats for the CPU or the GPU, which can be used in conjunction with computer vision tools compiled for the web to build your own environment detection features.

This API is incredibly powerful.

So if there's any augmented reality feature you need that hasn't been standardized yet, you can just build it yourself using existing computer vision techniques.

For example, building your own Snapchat filters for the web in WebXR would be a complicated but doable process.

If you combine it with the real-world geometry API or the depth sensing API, you could use this to build 3D models of the user's surroundings or to recognize faces.

Now, this is a very powerful ability for a website to have.

So part of the challenge designing this API is how to effectively communicate the risk of it to end users.

Another API we're working on, which has some complicated security issues, is navigation. By navigation, I mean moving from one immersive website to another without leaving AR or VR.

This may seem odd, since navigation is something that the web has been able to do for almost 30 years now, but the issue is pretty simple.

When the website you're on controls every single pixel on the screen, how can you trust anything that is shown to you?

For example, if you are on evilVRwebsite.com and you click a link to go to mybank.com, how can you really tell that the website you are visiting is the real website and not one that's been spoofed by evilVRwebsite.com in order to steal your passwords and bank details?

Finally, let's end on a happy note: one of my favorite APIs that doesn't exist yet.

The goal of this API is to give you access to world-position-aware augmented reality.

So for example, you can place augmented reality objects on well-known landmarks.

This is great for virtual signposts, informational labels, and worldwide augmented reality games.

Anyway, that's all I wanted to talk to you about today.

I really hope I've inspired you to check out the WebXR API.

It's pretty friendly to use today.

And building augmented reality features is really nice and very fun to do.

If you have any issues, feel free to reach out to me via Twitter or email.

And I'm happy to answer any questions you have.

If you want to get involved in the standards, the Immersive Web Community Group is open to everyone.

And the Immersive Web Working Group is available to anyone who works at a company that's a member of the W3C.

Thank you so much for listening.

And I really hope you enjoy the rest of Web Directions Code.

The WebXR API can be used to make VR- and AR-capable websites. This talk will introduce some of the newer augmented reality features and how to use them.