AI: Risks and challenges

Introduction to Ethics and Regulation of AI

Ajit Pillai opens the discussion on the ethics and regulation of AI, setting the stage for a conversation about balancing productivity, efficiency, and responsible AI use.

Panelist Introductions and Focus on Responsible AI

The panelists introduce themselves, each bringing a unique perspective to the conversation about responsible AI. The discussion is aimed at understanding how ethics can be embedded in AI technology for businesses and organizations.

Challenges in Embedding Responsible AI

Panelist Bec Johnson discusses the inherent biases in AI systems due to training data and the challenge of ensuring that AI models reflect the values a company wishes to espouse.

AI in Industry and Predicting Outcomes

Michael Kollo addresses the challenge of predicting outcomes of AI systems in the industry, particularly in the context of competitive capitalist frameworks.

AI Regulation and Balancing Innovation with Safety

Raymond Sun, a technology lawyer, speaks about the challenge of regulating AI and finding a balance between innovation and safety, highlighting different approaches taken by countries.

Discussion on AI and Generative Technologies

Paul Conyngham reflects on the challenges of distinguishing between real and fake outputs from generative AI technologies, and the implications for regulation and human interaction.

Ensuring AI Does Not Violate Human Rights

The panel discusses how to ensure AI platforms do not violate human rights, touching on the importance of understanding legal, social, and ethical implications.

Transparency and Explainability in AI

The conversation turns to the challenges of achieving transparency and explainability in AI models, particularly in business contexts, and how to effectively communicate AI decisions to stakeholders.

Embedding AI in Business Aligned with Values and Brand

Panelists discuss the importance of embedding AI in business in a way that aligns with company values and brand, emphasizing fine-tuning and understanding the social impact of AI technologies.

AI Literacy and Education

The panel explores the need for AI literacy and education at different levels, from early childhood to professional settings, and the impact of AI on societal perceptions and job markets.

Responsibility in AI: Who Bears It?

Discussion focuses on who bears responsibility when negative consequences arise from AI use, covering regulatory, organizational, and individual perspectives.

Risks of Using Sensitive Data in AI Systems

The panel addresses the risks associated with inputting sensitive data into AI systems like ChatGPT, discussing confidentiality, privacy, and potential legal implications.

AI's Impact on Creativity and Intellectual Property

The conversation concludes with a focus on AI's impact on creativity, intellectual property, and education, examining challenges in content creation and the changing landscape of learning and copyright.

Ajit Pillai: All right, so at the end of the day, we come to the most fun and exciting conversation, which is going to be about ethics and regulation of AI.

So welcome to the party, everybody.

So I just want to say that this has been the year of AI.

2023 will be known as the year of AI and everybody has been using it, everybody's excited about it.

We are all thinking about the productivity and efficiency that AI brings to us.

But on the other side, we also have people who are saying that AI is going to kill us all and it's going to take over.

And it's going to create all kinds of destruction. But the kind of conversation we want to have today is about the balance between these two sides, where we're thinking about how we can retain the productivity and efficiency while also thinking about the responsible aspects of how we could utilize AI.

So I'm Ajit.

I'm a researcher at the University of Sydney.

I specifically look into how ethics can be embedded within technology for organizations and businesses.

I also run a small consultancy which looks at how organizations can embed ethics within the culture.

And we have our amazing panelists over here who are going to help us dig into a direction of responsible AI, and with your help, we want to manage the discussion and have some kind of conversation, so please get on to Discord.

I think we have the QR code somewhere over here, so you can get on to Discord and ask your questions, and I'll make sure I monitor the questions too and ask the panelists to answer them.

So let's start off with a conversation about who the panelists are going to be.

So Bec, do you want to start off with who you are and also one challenge that you think is important to consider for responsible AI?

Bec Johnson: Hi, I'm Bec.

I'm a, is it, can you hear me?

Yes.

I'm a final year PhD researcher at Sydney Uni.

I spent a year at Google in ethical AI, and a bunch of different things.

And, yeah, so I'm particularly looking at values embedded and reflected in generative AI.

And that's not just in the training data, but also in the prompts that we use and in the way that we evaluate them.

So I've particularly done a deep dive into evaluation metrics of generative AI in the framework of ethical value pluralism.

The question was?

Ajit Pillai: One important challenge of embedding responsible AI.

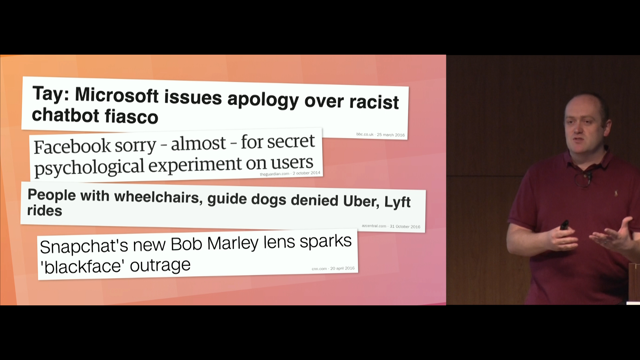

Bec Johnson: Yeah, I think, at the moment, we know that these systems are biased.

There's copious amounts of evidence out there.

And that's partly because of the training data, and so there's a lot of discussion and scholarship around that.

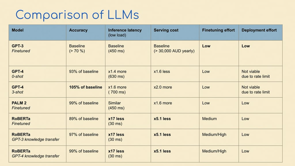

But there are also many other aspects where particular types of perspectives and bias and worldviews can come into these systems, often now by fine-tuning, whether that be through RLHF or RLAIF. So I think we have to always remember that these systems are inherently human systems and they're reflective of who we are as humans.

And whilst we might evaluate them to be good or bad in the sense of either being racist or sexist or not, there's a whole bunch of other values that humans have in the middle of that.

And so how do we make sure that the models that we're using in our businesses are reflective of the values that you want your company to espouse?

Ajit Pillai: What about you, Michael?

Michael Kollo: That's a really good one.

Now I've got to think of something else.

Thank you.

It's fine.

So yeah.

My name is Michael Kollo.

I'm the CEO of Evolve Reasoning.

For the purposes of this panel, I'm probably the person who comes most from industry, in the sense of financial services, investments, finance and so on.

So I'll be the evil capitalist.

I'll play Dr. Doom.

Yeah.

Which is absolutely fine.

I think one of the challenges that I've seen specifically around the new form of AI, which is generative AI, though I suppose you could say it of a number of other things, is that you're not really sure about the outcomes of the system until you release it into the wild.

So your ability to forecast adverse outcomes, societal impacts, or a variety of other, more standard empirical impacts is really a mix of what you've done in the system and how that system is deployed and used and engages with other systems, especially in the capitalist kind of framework with industries that compete with one another.

And so therefore, until you put it into the wild, which is what we saw Microsoft demonstrate with Bing early this year, after which disasters followed, some of them kind of hilarious, you don't know. That was really quite on purpose, because they said we cannot fully understand the effects and outcomes of the system until it gets released.

And obviously, inversely, once the so-called genie is out of the bottle and it's out in the public space, especially if it's open source, it's hard to then pull those effects back even if you don't like them.

So it's a feeling that you're on a ride, you're just catching speed, and maybe you can influence the slight movement but you can't really easily slow down.

Raymond Sun: Hello everyone, so my name is Ray and I'm involved in AI in three ways.

So first way is I'm a technology lawyer at Herbert Smith Freehills and I basically have a focus on AI.

So I help clients, both startups and big companies with advising on regulatory issues around their AI projects, for example, privacy, IP, consumer protection, I help them write contracts, review contracts around AI products.

So that's basically what I do in the day.

My second involvement is I'm also a developer myself and I've got a few products under my name.

Most of my products are in the dance tech space, so using AI to analyze your dance.

Hence why the photos of me doing a dance.

So I like to dabble in both the law and the technology.

And my third involvement is that I create a lot of content, especially on LinkedIn, YouTube and TikTok, and I like to educate the community on AI regulation and AI legal issues.

So one of my other products is a website that tracks all the AI regulations across the world, just to keep people up to speed on what's happening around the world.

And that could potentially affect your AI projects.

And I guess the challenge on responsible AI is, how do you even regulate AI?

And the key dilemma is, how do you balance between innovation and safety?

And if you look at my tracker, countries have taken very different approaches and there's no one right answer, which actually makes it really hard, and it is really subject to a country's political, economic, and legal circumstances.

So that's a really big challenge.

Paul Conyngham: Where can people find your tracker?

Raymond Sun: It's on my website, just search for TechieRay.com, and it's also linked on my LinkedIn profile.

Thank you.

Paul Conyngham: Just to say one more word on that, it's actually really good.

Raymond Sun: Thank you.

Paul Conyngham: I haven't seen anything else like it on the internet anywhere, so it's just updating legislation as it comes around the world.

Raymond Sun: And it's for free, so free to access.

Paul Conyngham: Hi guys, my name's Paul.

You've already heard me talk today.

I'm one of the directors of the Data Science and AI Association of Australia and run my own AI consultancy firm, Core Intelligence.

We've been doing GPT stuff since 2019.

In terms of challenge, I'm going to go for a relatively easy one versus everything else that's been pitched here already.

For me, I just think that, with the age of generative technologies, it's going to be really hard to tell which things are real and which things are fake, and how we go about regulating whether you're a human or whether you're a bot, I think that's going to be an interesting debate.

Ajit Pillai: Thank you, everybody.

So I want to start off with my first question.

So we are consistently developing, utilizing, and embedding AI and generative AI within our organizations and businesses.

How do we make sure that these generative AI or AI platforms that we use do not violate human rights?

Paul?

Paul Conyngham: I'll have a crack at it.

You could use a vector database.

Yeah, anyway, that's a really hard question.

So you'd obviously have to build some kind of pipeline where you pull the entire website's data and then compare it against your country or your state's laws.

That's how you'd have to do that.

Raymond Sun: Yeah, this is where you have to look at the issue and then the solution.

In order to work out how to do it, you have to work out what your application does, its purpose, and its potential risks, and once you identify the risks, that's when we can then work out what the solutions are.

And your solutions broadly, it's not just the tech, it's tech, people, and process.

So you can have all the guardrails that you want, but if people are not following policies and processes appropriately, then the tech solution is not enough.

So that's where governance frameworks come in, policy documentation.

But as I said, documents themselves don't do anything.

They must be followed by the actual employees or the organization's people.

That's where training comes in, education comes in.

A common misconception is that, Oh, I have an AI policy, I'm set for governance.

It's not like that.

You need to have both people, tech, and process measures in place to mitigate the risks associated with an AI project or system.

Ajit Pillai: That's a very interesting perspective, because we are always using regulatory spaces and then feeding that into the models to understand what the issues are.

But that also means it's not an anticipatory model.

We're not anticipating the issues that are coming, because it's always pushing the boundary, and then when consequences happen, we track back and embed them.

So how can we create an anticipatory AI which can actually understand before negative consequences happen?

Bec, do you wanna?

Bec Johnson: Yeah, I think you really have to be careful with that, because when you're saying anticipatory, that's also predictive, and we've seen a lot of bad outcomes from allowing these systems to automate and predict people's likelihood of recidivism, and Robodebt and whatnot.

I think, getting back to what Raymond was saying, you really have to keep the doors open to humans.

And humans change too.

And humans are different in different countries, in different communities, in different use cases, and we evolve as well.

I don't think that there's any fix or solution to this. I think it's an ongoing process that is iterative, and we have to build these structures to make sure that we can keep closing that loop of auditing the impacts of these systems.

Michael Kollo: And can I flip the other side?

Just to be the devil for a second.

I think equally you can have the perception of bias where there is empirically none.

Bec Johnson: There's always bias.

Michael Kollo: Absolutely.

A tiny bit of bias.

Bec Johnson: But bias is perspective.

Whether it's good or bad, there's always bias.

Michael Kollo: My point regarding this one is around causation, for example the reason, the intention of why I'm doing something. Whether I'm giving you credit or not giving you credit, my credit decisioning, which is one of the typical models that's been put forward here, can basically have different kinds of variables in it, which come to rely upon gender or other things that we don't want it to rely upon.

So that's one cause of actual bias.

But then the other side is when you have to explain these models to people, and because they're not really explainable, not straightforwardly explainable, and increasingly complicated, you can have the perception of bias.

So again, there are other ways to get around this, but I guess this is not such a simple thing as there being an empirical measure.

Yes, we have.

No, we haven't.

And therefore, as long as that number is below that number, we're fine.

I think there's probably a lot more education to do as well and talking to people about, for example, an algorithm has assessed your creditworthiness.

These are the kind of factors we've looked at.

This is the decision we've made.

And if you want to talk to a human, here's a human.

And we'll stand behind the decision.

So those kinds of elements as well, I think, that make it holistically useful.

Raymond Sun: And just to add to that, about how to anticipate potential infringements or violations, I think it's also just, don't undervalue common sense.

So before you launch a project, just ask yourself, what can go wrong?

And just make a list of what can go wrong.

And just, that's simple foresight.

And then just mitigate the risks accordingly.

I think that's probably a starting question that sounds simple at first, but can go a long way.

Bec Johnson: Can I jump in on top of that?

I think that's a good idea, but what I think is also really important, and I don't see enough of this happening, particularly in big tech organizations, is opening the doors to bringing in people from impacted groups: people from marginalized communities, people that are not technologists, but people who are maybe social scientists or philosophers or people that are working with disadvantaged groups.

So we need to keep that door open as well, because one person can't possibly anticipate all of the consequences and impacts on somebody who's having a very different lived experience.

Ajit Pillai: Thank you, everybody.

Very interesting conversation about diversity, which is required to understand also the biases that systems have, and also anticipate the biases before we actually embed AI.

And this is what I want to lead into the question of, like, how can we ensure transparency and explainability within AI models when businesses are embedding them into their organization?

Michael?

Raymond?

Raymond Sun: I think it's a given fact that models at this stage are mostly black boxes.

So from a technical perspective, explainability is quite hard to achieve practically.

So when it comes down to explainability and transparency, you're really going to have to front load that into the process.

The process, the procedure, and also the people around it. There's already a lot of thinking around this. So for example, in Europe's proposed legislation on AI, there are some transparency obligations that they put on an AI system provider to disclose when they launch a product. One of the big ticket items is that if you're using copyrighted materials in your training dataset, you have to disclose that.

So that's one form of transparency.

Another form of transparency is that if you're using an AI system that will affect a person's rights in a significant manner, you have to disclose to the user that an AI is being used on you.

So simple stuff like this, not simple, but pretty common sense ideas like that, have to be embedded in the process. Until the point that we can finally find a way to make black box models explainable, it is mostly a process and human accountability type of solution.

Paul Conyngham: There's a field of study in AI safety land called mechanistic interpretability, and it's all about trying to explain black box models.

The progress is very small at the moment.

We still have lots of progress to make.

The thing I will say though is that potentially you can add unit tests at the end as part of your process, to try to capture as many outlier cases as you can, but there will always be something that you never thought of.

Michael Kollo: Maybe one more point to add on this one.

I think there's other issues behind explainability.

So if we think about why a person goes to a bank and says, why didn't you give me a loan?

Why didn't you put my [???] forward?

If you give them a statistical equation and a nonlinear one, they might not go, wow, thank you.

Now, I've got it.

So good with that.

Thank you.

They might have the rights to that for sure, but you're not necessarily solving the underlying problem, which is that they would like someone to comfort them in this moment of adverse selection.

And I think that moment of comfort in the adverse selection is not a mathematical problem.

It's a human problem.

And so we can go down this whole other road of going, no, I'm going to give you a mathematical explanation when really people are looking for, is that fair?

And then the word fair you can interpret in different ways, but fair is a human concept from one person to another.

And then I think it relates back to the humanity of that decision, especially when it's a damaging adverse decision.

And maybe just a final point: it's easy to talk about things like you didn't get your loan or you didn't get the something.

I'm actually more concerned about the implicit decisions under the surface.

Your data feed never showed you access to these scholarships.

You never got to see that information that someone else got to see that they applied and they went for.

So you actually just from that fact, you never got the chance to say, why not me?

Whereas clearly, if you get a big no, you can say, why not me?

And then there's a whole conversation we just had.

Raymond Sun: That's why the key phrase in like many of the proposed regulations around the world is something called meaningful explanation.

Just disclosing the source code or the algorithm doesn't cut it.

It's got to be a meaningful explanation that actually explains what is really going on and how that affects the user.

So yeah, that's one thing that at least the world is onto already.

Yeah.

Ajit Pillai: Yeah, I think there's a really good advancement in terms of large language models and explainability, but there is also a requirement of understanding, as Michael said, the context, but also understanding different linguistic models within different countries that are there to explain these, to increase the explainability of the models.

The next question, if I don't have any questions on Discord, is: as businesses, we are trying to embed AI responsibly, but we also want to embed AI and safeguard it according to the values and the brand that we have as a business.

How do we go about doing that?

Bec Johnson: Yeah, so I think, honestly, one of the most important ways forward is fine-tuning.

And fine-tuning for your use case and for your business and for your community.

It's actually something, when Sam Altman was here, that was the question that I asked him, and he says that it's coming, I don't know, cross your fingers.

But I think, even though Australia isn't creating these really giant models, like is happening in Europe and China and the U.S., we can still be really active in this space by demanding the ability to fine-tune the models to be appropriate, not just to the Australian context, but maybe to a school context, or maybe to an Outback context.

And so I think fine tuning, not just with paying people $2 an hour in Kenya or paying people $10 an hour from Mechanical Turk, but fine tuning in a really appropriate manner and with people that are reflecting the values of what you're trying to espouse in your company.

Michael Kollo: I think it's also an interesting moment for a lot of companies where they look at what they do today, let's say they look across their entire human workforce and they go, we've mostly got pretty good people that embed our values, but there's always a couple of bad ones that don't.

So actually, we're on this normality curve, right?

We've got about 5 percent of our people that are okay, 95 percent that are great, or 60, 40, or whatever it is.

So we're not starting from perfection.

We're not starting from a policy piece of paper that we're putting into a thing.

We're starting from something that has a distribution.

And then I think having risk parameters around that to go, if I go and embed something into an algorithm, the benchmark isn't 99-100%, obviously depending on the topic, but the benchmark is what I can reasonably achieve with other resources.

And I think once you think about it that way, your risk management, or your understanding of the risk of not meeting those objectives or not meeting those standards that you're talking about, follows that type of human resource curve rather than a technology binary curve.

Ajit Pillai: Cool.

I think one of the questions which I have from Discord, which I want to continue this conversation on, is about literacy of AI and the explainability of it.

And the question is specifically talking about where we should start with the literacy.

Should we go all the way back to kindergarten, or first year, or schools?

Or should we start at the university?

Or is it only when you come into the workforce that you start learning about AI?

What are your thoughts about that?

Bec Johnson: I think it has to start really early.

I don't know the exact appropriate age for kids, I'm sure some other people can figure that out.

But it has to start early. I was giving another talk yesterday, and we were talking about this: in your workplace, literacy isn't just about sending people to courses and to great days like this. It's about creating a particular time of the week, a couple of hours a week, to give your staff the ability to just play with the models. I've had the good fortune to have access to these models for a few years now, and often I would go in thinking, oh, I'm going to do this with this model, and then I'd get in there and just be playing around and be like, oh, actually, I found this totally other thing.

And so giving your staff and your employees the opportunity to just play is probably one of the most important ways to develop that kind of literacy, because the models are always going to be changing as well.

So just even playing, taking a test case at your work and just trying it out, seeing if it works or it doesn't work.

So I think that's the one thing I wanted to throw in there about literacy.

Raymond Sun: I see two levels of literacy.

The base level is, I think AI education will eventually be somewhat like what privacy education has become.

Hopefully most people in the room will be aware that privacy is regulated, privacy is a thing, you gotta respect people's privacy if you collect their data.

I'm sure we had the very same question when the idea of data privacy first came out like in the 1980s in Australia.

We had the same sort of conversation where people asked, do people need to have data literacy or privacy literacy skills?

And eventually it has evolved into somewhat of a citizenship type of issue, where you don't need to get into the technicalities of what privacy is or what data is.

We just need to know that these are your obligations.

This is what's right and what's wrong.

Make sure that whatever you do aligns with what the privacy position is.

So I see that as a base level that is most applicable to, let's say, business and industry.

At the upper level, that's where we get into the kindergarten education.

That's more of an economic type of benefit.

And you can see that already in Europe, where they've relaunched this, I guess, draft paper where they want teachers to start implementing AI in the classroom, teaching kids how to be adept with AI.

That's basically a way to upskill the workforce.

And that's already happening in Singapore, China, South Korea and Europe: basically upskilling the workforce so that the economy benefits as a whole.

So I see there being two layers of literacy here.

Michael Kollo: Look, I agree with you.

I think this question is quite rich from a social perspective.

I think we have a very poor dialogue on AI.

So let's put that out there.

So most people that I speak to, maybe outside of this room, perhaps, will think of AI as a threat.

They'll think of it as something uncertain, very difficult, and so on.

There was a great survey that I've been quoting a lot recently, which was done by the University of Queensland.

They interviewed 17,000 people from 17 different countries, about AI and their perception of AI.

These are citizens, these are not workers, so they're old and young and everything in between.

And what was interesting for me to see was Australia ranks somewhere in the middle for risk awareness, AI is going to kill us, it's going to take our jobs, and all the negative narratives that we know, but ranked right at the bottom for how AI is going to help me.

How AI is going to make the world a better place.

And in that kind of environment, you just have low adoption and you have risk aversion, and you have a social narrative that colours a lot of these questions that we're talking about and brings out the risks and what about this and what about that.

And most of the media engagements and so on ask me, okay, tell me about the ways that superintelligence is going to kill us and so on.

So that's the kind of public narrative from Uber driver to CEO and everything in between.

I think as we go forwards, that narrative will die down because of the media cycle, but there's still this discomfort when the blockbusters have run out of bad guys and they go, let's go fight the AI.

It's clearly being positioned in that way.

And the last time I saw something like that happen, by the way, was Brexit, where the European Union was being positioned as a bad guy for 10 years of negative media.

And so I suspect that there is a lot of re-education to do on the social narrative of AI and the benefit of AI.

I think as workers, clearly people are worried about job losses.

Clearly that was already mentioned.

One of the first things was, what about my job?

What about my future?

What about my industry?

What about my training?

Which is the whole kind of future of work narrative.

It's the whole fourth industrial revolution.

It's, we're all in it together and moving and so on.

So I think part of that education is yes, these systems are a lot more friendly.

The future isn't about Python and STEM programs anymore. With the advent of ChatGPT, the future is now about critical reasoning and language modeling and actually moving with these things, which is wonderful because it enables a whole other part of the world. But I think we have to remove a lot of this fear narrative and we have to transform that.

Playfully playing with them is wonderful for people that are intellectually challenged and engaged and thoughtful. I think for a lot of other people who are just standoffish and maybe already put off, already in this negative disposition, we probably have our work cut out in terms of how we make this, as a co-pilot or co-worker, a positive experience where you feel like, wow, I'm learning, I'm evolving and so on.

And maybe the final thought is, look, we have a very strong thesis at Evolve Reasoning that we think AI will help people understand the world better.

And so that's why we said Evolve Reasoning is not about the AI; it's about human evolved reasoning.

And I think ultimately that's a great goal to push for with the use of AI, but again the social narrative will mean the difference between people accepting and evolving and doing those things or burning it all down.

Paul Conyngham: I would like to add to everything that's already been said, coming in from a pretty different angle. To use John's analogy from the start of this whole conference, AI existed three, five years ago and no one really cared about it, and the same thing was true for the internet: prior to 1994, when Netscape Navigator came out, the internet existed, but no one really cared about it.

And we're now at the 1994 moment for AI.

And I think maybe it's a pretty good analogy to use: the way we went about educating people on how to use the internet, like in your library or whatever they did back then.

Similar programs now.

And also, to Michael's point around critical reasoning.

Point one is just general education.

Point two is to get good at GPT skills.

That is like a fusion: you can probably learn it, but there's a lot of creativity there as well.

And I think maybe even that can be taught through programs like critical reasoning, et cetera, and life experience.

Ajit Pillai: Thank you so much.

I think we are running out of time, do we have some more time or ten minutes?

Okay, cool.

Yeah, I think we can go ahead, and there's one more question which has come up which is really interesting. I'm going to reframe this question and ask, who has the responsibility when a negative consequence happens?

Is it the platform like OpenAI?

Or is it the business?

Or even within the organization, is it the CTO?

Or the CFO?

Or who is the one?

Or the person who is creating the data model?

So who at the end of the day is responsible when something bad happens?

Raymond Sun: Okay, that's probably a question for me now.

It really depends on what claim you're talking about.

Different claims give rise to different sets of responsibilities.

I'll probably start with the straightforward one, which is one that's already been addressed by the EU.

So the EU has a draft AI Liability Directive that covers an AI system provider, so basically a company that deploys AI, regardless of whether or not they built the AI. If you deploy an AI and, in the course of that, the AI does something wrong which causes you to breach one of the AI regulation rules, then there's a presumption (presumption means assumed): it's assumed that the breach caused damage to whoever the affected person is, and it's on you, the company, to disprove that presumption.

In that sense, the EU sees the company or the deployer as responsible for the AI system, regardless of whether or not they built that AI system in the first place.

And that's the regulation layer.

You've also got to account for the contract layer.

So you can also allocate risk between your contractors and suppliers as to who indemnifies or compensates whom when stuff goes wrong.

But from a regulatory level, that's the EU's answer.

The rest of the world doesn't yet have a clear answer on that.

But that doesn't mean there is no accountability regime.

Again, it depends on what area of law is being litigated.

So in the IP field, if your system infringes upon copyright, then you're responsible for that.

In the discrimination field, even if you use an AI that discriminates against a person, you're responsible for that.

So it's a really big question that often gets asked, but it's actually a lot more nuanced than just saying who's responsible when something goes wrong.

It really depends on what area of law you're talking about, but at least from an EU regulation standpoint, there is that sense that it's the deployer who's responsible for any breach of the rules of the AI Act.

Yeah.

Ajit Pillai: Great.

I think that's also very interesting because, in the regulatory space, it's also about the autonomy that a user, an individual or an organization has in making decisions.

So there's always going to be a battle between informed decision or not.

So that will be an interesting challenge when it arises. The next question I have is: Just Play is a great concept, which we talked about earlier, but what are the risks of putting sensitive data into something like ChatGPT?

Raymond Sun: I could go.

This is one of the big-ticket confidentiality and privacy issues when it comes to not just ChatGPT, but AI in general.

The general answer is, yeah, if you upload sensitive information into ChatGPT, depending on what version you use, that sensitive information may get stored. If it gets stored, it can then be used to train the model, which then learns from your sensitive information and applies it to another matter that has nothing to do with you. The moment you put sensitive information into the prompt, that's an act of disclosure, which technically breaches confidentiality obligations, privacy obligations and, if you're a professional, privilege obligations, depending on how they're drafted. So that's the disclosure element.

And if it gets stored, that's another act of breach.

There have been recent updates to ChatGPT where, if you use the premium version, turn off your chat history, or use the API version, they don't store your data. A common misconception is that, oh, they don't store your data anymore, therefore it's safe to put all my confidential information into ChatGPT.

That's not the case, because it's still the act of disclosure you have to worry about.

So that's one thing I want to clarify.

Just to give you a case study: Samsung. You might've heard this already, but some of their employees have been using ChatGPT to analyze and debug code relating to microchips.

And microchips are a very hot asset right now in the world, one that's causing a lot of geopolitical tension.

And so when you have that sort of sensitive information uploaded to ChatGPT, one, you're breaching trade secrets, and two, let's say you're working on a really cool product idea and you want to patent it.

The moment you disclose that information into a third party system, regardless of whether it's AI or not, that's a disclosure, which means if someone finds that out, you cannot patent your invention anymore.

That's just the rule.

Any disclosed ideas cannot be patented and so disclosing that information into an AI chatbot is an act of disclosure already.

So again, there might be a practical consideration as to whether you get caught or someone finds out, but notwithstanding that, from a strict legal perspective, that is what's at stake.

That's the risk.

So be very careful when it comes to inputting sensitive information.

That doesn't mean you can't use these chatbots at all, you could definitely use them to help your work, just be careful around what information you put in.

Normalizing information and redacting information go a long way.

So definitely just use common sense; that's the main takeaway.
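As a rough illustration of the redaction Raymond describes, a minimal Python sketch could mask obvious identifiers before a prompt is sent to any third-party chatbot. The `redact` helper and the two patterns are hypothetical and chosen only for illustration; pattern matching like this catches a narrow class of secrets and does not by itself meet confidentiality, privacy or privilege obligations.

```python
import re

# Hypothetical patterns for illustration only; real data needs a broader,
# reviewed set (names, addresses, client identifiers, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com, key sk-abcdefghijklmnopqrstuvwx"
    # Only the redacted text would be pasted into the chatbot.
    print(redact(prompt))
```

The placeholders keep the prompt readable for the model while the original values stay on your own machine.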

Paul Conyngham: Was the actual question how many API keys have you put into ChatGPT?

I'll answer it a different way again.

There are multiple different levels of protection you can take for transmitting sensitive data to these tools.

The first one is in ChatGPT: you've probably all seen that you can opt out.

There's actually, hidden further within there, a "learn more" button you can click; it's an HTML link.

It takes you to a form, you scroll all the way to the bottom, and then there's another link there.

You click on that and it takes you to another form, and you can actually opt out entirely from your data being used.

So that's one.

The next level you can take is using something like Azure OpenAI, where you have your own instantiation of GPT-4 on your cloud.

It's essentially your model, but if you're not comfortable with even that, that's when you can go and train your own models, which a few of our presenters have talked about today.

So there are still multiple options you can use to deploy this technology and control your data in the process.

Ajit Pillai: I think there's one last question which I want to end on: I want to take one question from the Discord and also use some of my own thoughts.

There's a huge problem that's happening in terms of creativity or ownership when it comes to intellectual property, or assessments within universities.

How do we tackle this issue where something like ChatGPT or GPT-4 is developing these assessment answers, or creative documents, or code, which we are utilizing on a daily basis?

How does that cause issues for different stakeholders?

For example, there are people who have fed data or their data has been taken.

There are multiple lawsuits going on with OpenAI where they have taken data from books and stuff without the authors' permission, and that is resulting in new creative avenues which are taken from all of this data.

What do you guys think about that?

Bec Johnson: There's a lot of things in that question.

Ajit Pillai: I'm sorry.

It's the last one.

Okay.

Bec Johnson: I'm just going to go to the education part, the university kind of part.

There's big hype: all the students are going to cheat.

There were a lot of students cheating before ChatGPT; that's there.

If anything, it actually democratized it.

Unfortunate but true.

But I still play with these things all the time, and at first you think, oh, look at that, all these words coming out, using lots of vocab and whatnot. And then I read it and I think, ah, you're just like a first-year undergrad that's trying to impress me with lots of words, but actually I probably wouldn't give you more than 60 out of 100, right?

So I do think, though, that they're useful as scratchpads. Some of my students, especially ones for whom English is their second language, have said to me that they use them as scratchpads, and that they know they're overly verbose, fairly hollow, and not factual. It doesn't replace things like Grammarly, but it augments things like Grammarly.

It is generative, it is creative so you can throw stuff in there and see what it comes out with.

You think, oh that's a good idea, but it almost always needs to be cleaned up.

It definitely needs to be checked if you're trying to write anything that's factual.

And we need to start embracing this. Maybe it's a good kick in the backside, that we have to have a bit of a paradigm shift in educational systems.

Because I think we've really got to get over the rote learning kind of thing, we've got to get over the really individualistic type of learning, and we've got to really embrace a more collaborative style of learning.

And I think that there is really big scope for us to do that.

And I think it's great that we got slapped across the back of the head with this, and then the whole uni's kind of going, oh, what are we going to do?

I was like, okay, let's alter that.

And I know a lot of people said it before when calculators came out, but it's the same thing.

Now we've got this cool tool, where you have to educate people that what it is is a creative tool.

It's not a search tool.

It's not a factual tool.

It is a creative tool, and we need to help people learn how to use that, because that's what's going to happen in the future.

Ajit Pillai: I think we can end with one more thought.

Michael Kollo: I'll be really quick then.

Super quick.

Yes, universities get disrupted.

Yes, they have to change their business model.

Yes, it happens all the time.

Yes, that's what technology does.

It's just what it is.

There'll be better teachers in the future, which is AI.

We might have the last generation of students being trained by people.

Why not?

You.

Ajit Pillai: No.

No!

Never.

Michael Kollo: It's okay.

I wanted to be controversial.

I got my end.

It's all right.

Ajit Pillai: That could go on for another hour, maybe another hour for our discussion.

Michael Kollo: My point is, the world changes, right?

Universities are a business model: they have an academic part, they have a business model, they have a reality of how they test. They've changed through different worlds, and they'll change again, and this is yet another change, so I don't think that's a big deal.

I don't have the solution, that's why I'm going quite quick, to how copyright is going to be managed when text is atomized or tokenized and then redistributed in permutations and combinations, because you need a really fine-tooth comb of grayness to go, "Now you're similar enough. No, you're not similar enough there."

And I think until we get that really fine-tooth comb, we're going to be debating this for a while.

Paul Conyngham: I think there's one more thing I'll add to this, which is that I found out last week that Meta and a couple of other companies have started an initiative where they're going to attach metadata to every image that's ever made.

Yep.

So this will be uniquely identifiable forever.

So we're going down that road.

Yep.

It's not an NFT, unfortunately.

Bec Johnson: But someone's going to work out how to fake that.

Paul Conyngham: Yeah.

It's not blockchain.

It's not blockchain.

Ajit Pillai: There is also a blockchain-based one.

I think pixel blocking is one of the things that they're trying to do.

Paul Conyngham: Yeah, it's a real, big organization that's come together.

It's like underground, because they don't want people to know about it yet.

Raymond Sun: I guess for me, you could say, fortunately or unfortunately, there are lawsuits against these AI companies on the issue of copyright.

Yet to be determined.

At least we're eventually going to have some court guidance on what copyright is.

But I think eventually either IP law will adapt, or AI will adapt to fit current IP laws, or both.

Yeah, it's a very interesting time in the IP space.

Bec Johnson: And I think, like with a lot of other disruptions, the lawyers are the ones that are going to come out on top. They're always going to have a good job.

Michael Kollo: I think the number one automated industry for ChatGPT is lawyers.

Based on the data that I've seen, yeah.

Interesting, okay.

Ajit Pillai: Thank you so much for the panel, everybody, and a round of applause.

Unlike with the early Web and social media, few people are unthinkingly optimistic about AI and its applications.

Our expert panel will consider the harms, risks and challenges these technologies present, to help you minimize them and maximize the value we can gain from AI.