Steam Engine Time

Introduction and Acknowledgment of Traditional Land

Mark Pesce opens his talk by acknowledging the Gadigal land and its traditional owners, reflecting on his 20 years in Australia and the significance of this acknowledgment.

Personal Journey into the Digital World

Pesce recounts his early experiences with the internet, describing how he explored the entirety of the web in its nascent days and the rapid expansion of websites that soon followed.

The Foundations of Human Cognition and Learning

Discusses the developmental stages of human cognition, from the raw sensory input in infancy to the sophisticated recognition and categorization processes that form our understanding of the world.

Association, Recognition, and Self-Formation

Explores the neurological processes of association and recognition that lead to self-awareness and personal identity, emphasizing the interconnectedness of sensory experiences and cognitive development.

Emergence of Language and Social Interaction

Analyzes how language emerges from the interplay of sensory experiences and social interactions, shaping the individual's ability to communicate and connect with the external world.

Encountering and Overcoming Cognitive Dissonance

Highlights the challenges individuals face as they encounter experiences that do not fit their established cognitive frameworks, leading to personal growth and a deeper understanding of the self and others.

Developing Complex Communication and Cognitive Skills

Details the progression from basic sensory interactions to complex cognitive functions, including the development of sophisticated language and social skills that define human interactions.

Artificial Intelligence and the Misconceptions of AI

Introduces the concept of artificial intelligence, tracing its historical roots and the challenges it has faced, framed by the early separation from cybernetics and its impact on the development of AI technologies.

Cybernetics and System Learning

Mark Pesce discusses the core principles of cybernetics and its implications for machine learning, emphasizing the importance of feedback loops where the output of a system becomes its future input to facilitate learning.

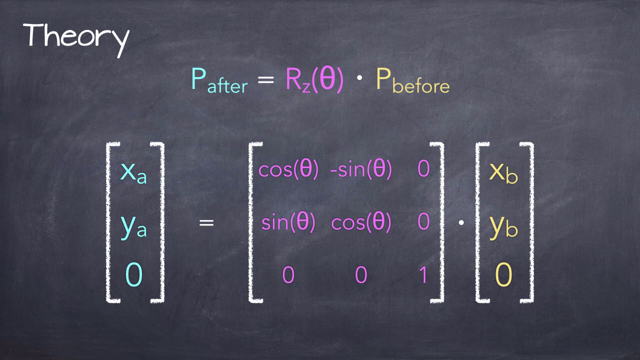

Evolution of Machine Learning Models

Explains the advancements in neural network models, particularly the development of the transformer in 2017, which enhanced the ability of these models to learn from vast amounts of data through advanced pattern recognition.

The Convergence of Human and Machine Learning

Pesce raises philosophical questions about the blending of human and machine learning, pondering where machine learning ends and human learning begins, highlighting the ongoing integration and mutual influence between the two.

Structural Coupling and Socialization

Introduces the concept of structural coupling in cybernetics, drawing parallels to human socialization and civilization processes, where continuous exchange and adaptation occur between individuals and systems.

The Emergence of New Learning Categories

Discusses the emergence of new categories of knowledge and learning that blend human and artificial intelligence, suggesting a deep, interconnected future where the distinction between human and machine becomes increasingly blurred.

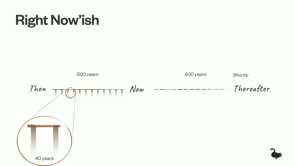

Historical Context and the Steam Engine Analogy

Reflects on the historical development of the steam engine as an analogy to current advances in AI, emphasizing the importance of empirical learning and the need for regulatory mechanisms akin to the steam engine's governor.

The Future of Learning and Artificial Intelligence

Predicts the future integration of AI in society, comparing the potential widespread influence of AI on our daily lives to the transformative impact of the steam engine on industrial society.

The Future of Human Labor and Learning

Mark Pesce discusses the evolving demand for skilled human labor in the context of an increasingly automated world, drawing parallels to how our learning processes will adapt in an environment that itself learns and evolves.

Communion of Minds and Multilarity

Explores the concept of a 'multilarity'—a future where human and artificial intelligences converge, enhancing and enriching our collective learning and understanding through deep interconnections.

Reflecting on the Past to Inform the Future

Pesce encourages reflecting on the past three decades since the web's expansion, questioning how different awareness might have altered our technological landscape, particularly in avoiding the pitfalls of surveillance capitalism.

Closing Thoughts and Folklore Reflection

Concludes with a reflection on human resilience and determination through the folklore of John Henry, symbolizing the enduring human spirit in the face of technological advancements.

Thank you, John.

I know you said you've been doing the conference for almost 20 years.

And as of today, I've been in Australia for 20 years and two weeks.

All of that time, I have lived on Gadigal land, and it has been my honor to be on Gadigal land for all of that time.

And I'm going to open this morning with paying my respects to the traditional owners of this amazing land.

And paying my respects to all First Nations people who are here with us today.

All right, so timing.

30 years ago today, on a Wednesday, I was consulting for Apple.

I was consulting for Apple because my startup, which was going to do consumer VR 20 years before it was actually possible, had collapsed because Sega had cancelled its consumer VR product that used our technology.

And for the first time in three years of being a poor startup person, I was making real money and I was investing some of that money in toys.

And one of the toys that I bought was a used SPARCstation.

I bought a used SPARCstation because I wanted to write some funky VR networking software on it, but it turns out the thing I really used it for was installing the NCSA Mosaic web browser and using my dial-up modem to browse the web.

NCSA maintained a web page with the master list of all of the sites on the web.

On Monday, the 25th of October, I started at the top of that list when I got home from work at Apple.

By the way, the work at Apple was very unsexy.

I was connecting IBM mainframes to Apple networks.

I started at the top of the list and methodically surfed all of the sites.

And I wouldn't go to every page on all of the sites, but I'd certainly visit the sites.

I methodically worked my way through the list.

By Wednesday, I was around halfway through the list.

On Friday, I had surfed the entire web.

And you laugh because you're like, surely you're joking.

No, I'm not joking.

Because the web was so small in October of 1993, it was possible.

If you come forward just a year later, even the idea of doing that would be utterly inconceivable, because websites were being added faster than any person could possibly view them all, even if they spent 24 hours a day, every single day, trying.

People call this the Cambrian explosion of the web.

It's not.

It's cosmic inflation.

That moment, roughly 10 to the minus 36th of a second after the Big Bang, when the universe went from the size of a point to the size of a basketball. That doesn't sound like very much, but it did it faster than the speed of light.

That's what happened in that first year.

Timing!

10 months and 26 days ago, I was right here, talking to all of you about all the funky stuff that was going on in AI. I had a bunch of people talking about copyright, and authorship, and the role of the artist in this world, and where it was all going.

What we didn't know was that on the same day, at the same time, on the other side of the international date line, we were about to experience our own moment of cosmic inflation.

They say that we all enter the world as blank slates.

And of course that's true up to a point.

There are genetic differences between us.

There are environmental differences.

There are epigenetics.

All of those factors.

But, generally speaking, we enter the world as blank slates.

Each of our slates is unique.

And we enter this world eyes wide open, unfocused, ears troubled by every sound that we hear, a body that's moving (one of my friends described their newborn as "Brownian motion"), senses wide open. We are taking it all in.

That's how we begin.

That's how every one of us begins.

All of it's coming in, all of it is leaving a mark on us because that's what we are.

We are the slates that are being marked up by our experiences in the world.

More experience, more marks, filling in all of those blanks.

And all of this is happening at a very deep level.

It's happening biologically, it's happening chemically, it's happening neurologically, and experience is coming through our senses and making its way through our peripheral nerves, into our central nervous system, into our brains.

Our brains are functionally empty at the time we're born.

But every experience leaves a mark in our neurology.

And this sensation triggers those nerves.

It reaches those neurons in our brains.

And when they're triggered, then those neurons fire.

And those neurons, at the beginning, they aren't really wired into anything at all.

They're just firing off.

That's the end of it.

And it happens the next time there's some sensation, and the next time, and the time after that.

But eventually, neurons that fire together, wire together.

This is known as Hebbian theory, and it's the foundation for how we all learn.

And it's the foundation for how anything with even a whisper of a nervous system works.

That is associated with this.

And from that association, everything else follows.
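The Hebbian idea above can be sketched in a few lines of Python. This is a minimal illustration, not a model of real neurons: it assumes a single model neuron with a fixed firing threshold, two inputs, and the plain Hebb rule Δw_i = η·x_i·y (each weight changes in proportion to its input's activity times the neuron's output). The names `fires`, `hebbian_step`, and `pair_stimuli`, and all the numbers, are hypothetical. Input 1 is already wired to the neuron; input 0 starts unconnected and becomes associated only by firing together with it.

```python
from typing import List

THRESHOLD = 0.5  # hypothetical firing threshold for one model neuron


def fires(weights: List[float], inputs: List[int]) -> int:
    """Return 1 if the weighted input drive reaches the threshold, else 0."""
    drive = sum(w * x for w, x in zip(weights, inputs))
    return 1 if drive >= THRESHOLD else 0


def hebbian_step(weights: List[float], inputs: List[int], output: int,
                 eta: float = 0.25) -> List[float]:
    """Plain Hebb rule, delta_w_i = eta * x_i * y: a weight grows only when
    its input was active at the same time as the neuron fired."""
    return [w + eta * x * output for w, x in zip(weights, inputs)]


def pair_stimuli(weights: List[float], trials: int, eta: float = 0.25) -> List[float]:
    """Present both inputs together for several trials, updating weights each time."""
    both = [1, 1]
    for _ in range(trials):
        y = fires(weights, both)  # the already-wired input makes the neuron fire
        weights = hebbian_step(weights, both, y, eta)
    return weights


if __name__ == "__main__":
    start = [0.0, 1.0]  # input 0 unconnected, input 1 already wired in
    print(fires(start, [1, 0]))  # input 0 alone: not yet associated
    learned = pair_stimuli(start, trials=4)
    print(learned, fires(learned, [1, 0]))
```

Run as a script, it should print `0`, then `[1.0, 2.0] 1`: after four paired trials, input 0 alone is enough to make the neuron fire. The plain rule only ever strengthens weights, so they grow without bound; practical variants such as Oja's rule add a normalizing term.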

Now, when the senses take something in, they pass it along those nerves, they take it up into the central nervous system, and because of that association, it's now recognized.

Break that word down: recognition, re-cognition, to think on something again.

Association is now the emergence of a past.

It's the emergence of a pattern.

It's the emergence of an awareness that is no longer just undifferentiated sensation.

It's the beginning of our personal history.

And so that means that experience is no longer quite unmediated, it's no longer quite unfiltered.

It's now and forever being measured against a set of associations.

We are seeing everything through how we recognize everything.

And the first of these recognitions emerges very naturally from the tiny sphere of capacity that the infant encompasses.

From the sounds that an infant makes and the movements that it makes, it starts to connect action to recognition.

And if you don't have association, then action and sensation have no connection in recognition.

An infant would act but could not encompass the consequence of that action.

And so now, in association, all actions get mapped to sensations.

And in recognition, the infant begins to see itself.

In seeing itself, a self begins to emerge from the undifferentiated sensations in the world.

And it associates itself with that recognition.

So at the beginning of cognition, we see ourselves as the change in the world.

And now the whole world is no longer just raw experience.

It's the fruit of all of those associations. Think about it: you hear an infant. A voice cries, it screams, it shouts, it grows softer and louder, longer and shorter, higher and lower, burbling, babbling. Everything that's being enacted by the child is coming back as another association between mouth and breath and voice and hearing.

The child's hands flail around.

They gradually begin to move as bid, they increase in fineness and delicacy as peripheral nerves find their mates in the deep parts of the brain, eye, and hand, connected by an association and sensation into a single fluid intention.

Feet used to strike out blindly into space.

They now find their way more deliberately.

They are unsteady at first.

One move produces a shift.

Another move produces another shift.

Now it's no longer just mind to muscle to movement. It's all of the proprioceptive senses being integrated into the where and how of our joints in gravity: each arm, each hand, each leg, each foot, and then this arm with this hand, that arm with that hand, this arm with that arm, this leg with that leg. Association, and coordination.

Limbs start to move together.

Synchronization.

And now, arms and hands and legs and feet, one before the other, bring the sensation of motion.

You get the common crawl.

And now the self, activated and aware, actively seeks out experience, and some of that experience is going to be recognized.

Some of that is therefore going to be reinforced.

But some of that experience is going to be new, raw, unfiltered, and it's going to be awaiting a repetition.

Association.

Some of those associations are going to be mistakes, because association is prediction, and prediction is never quite perfect.

And where association fails, and recognition fails to fire with association, the self falls into a gap.

That gap hurts.

It's a different sensation because it's not one that arrives through the senses.

It's a gap that arrives through the perception of the self in the self.

Expectation, disappointment, surprise.

How could the world deceive the self like this?

Doesn't the self encompass everything?

Or is there something beyond the self, a factor infinite and unknown?

And that mistake, repeated enough times for the painful sensation to become association, and then recognition, becomes the experience of the other.

The other is that which isn't the self.

Sensation, association, recognition, and now, the very first category.

I. And now: this and that, here and there, up and down, left and right, light and dark, warm and cold, sound and silence.

Those categories become the framework for the self.

Those categories explode in our minds between breath and voice and sensation, association, expectation.

And now what we see is the babble inside of us becomes repeated and repeated until it becomes a clear and coherent sound.

And that sound is being measured against the other.

Sometimes it's accepted and repeated, other times it's rejected and forgotten.

It's a word, it's two words, it's another word, it's word order, a word order accepted or rejected.

Concept and word order become a continuum of babble and output and disappointment and correction and the gradual emergence of a linguistic description for the categories inside the self, speak and be spoken to.

And now the self begins to move away from sensation, and association, and recognition, and category, because linguistic description becomes a sort of possession.

The self speaks to itself and comes to know itself through language.

And the self acquires language at a rate that can only be considered phenomenal.

The whole structure of sensation, association, recognition, and category geared toward the linguistic capacity of the self.

And as that project nears its fulfillment, this is precisely the moment that biology comes calling again, because neurons that fired together wired together.

But many more neurons have few or no connections, and at this point, the body deletes them unsentimentally.

And so now, the self within the body is never going to know the same ease in learning, the same direct experience of sensation, association, recognition, and category.

Everything that comes after.

Every bit of social knowledge, every bit of cultural knowledge, formal knowledge, all of it works within the categories that have already emerged from association, sensation, and recognition.

And those things that confound category, the things that sit between this and that, here and there, up and down, male and female, those things that sit between, they cause the self indescribable pain.

The passage of the self in the world is a navigation through disappointment and pain, because in those we learn and we grow.

Okay, so you're all saying, "That was very nice, Mark, but I thought you were going to be talking about artificial intelligence this morning."

Okay.

I have some news for you, kids.

There is no such thing as artificial intelligence.

It was a term invented by these seven folks back in 1956 as a velvet rope.

To keep a certain set of ideas out, and a certain person away from their little confab to talk about how they were going to make machines work.

The person involved was a man by the name of Norbert Wiener.

The set of ideas is known as cybernetics.

The pioneers of artificial intelligence wanted nothing to do with an established field that was already at least 10 years old, had a significant body of theoretical work behind it, and had been holding regular interdisciplinary meetings with researchers working across a whole range of domains to understand learning, to understand information, and to understand how it was all emerging in the new computing machinery that had been born during the war.

The folks at Dartmouth didn't want any of that because it was all soft.

It was all mixed up in psychology and anthropology and biology and zoology: all of these things that couldn't be described in perfect, clear, mathematical formulae.

And so what they did, in the inevitable way that boys will always do when they put up a clubhouse and put a sign on it saying "no girls", is they created their own space where they would be able to crack the problem of learning.

And you know what?

It didn't go well.

No field has so consistently over promised and under delivered over the last 70 years as artificial intelligence.

For the first 30 years, those seven people pioneered a whole bunch of techniques of machine learning, none of which worked very well.

In fact, it wasn't until we started to model neural networks that artificial intelligence began to work.

Because as it turns out, the folks over here, the ones they wanted to ignore, actually understood learning really well, and probably would have been instrumental in helping the field along.

So the history of artificial intelligence, and some of the problems that are embedded in that field today, come from a root that wants to see the world as a set of pure axioms.

Which it is not.

We have cybernetics as this core idea that was ignored by the first generation of artificial intelligence.

And we know that was ignored.

And we know that artificial intelligence failed because it ignored these ideas.

And one of the core ideas that it ignored is the first axiom of cybernetics, which is that the output of a system is its future input.

Unless you can do that, there is no way for that system to be able to learn.

It can't learn from the environment, it can't learn from its experience.

And what do we do now?

We build models and we feed models huge amounts of data.

We feed them sensation and we let the outputs of that system become its future input, which is how we train these models.

And we know that we can train models now because in 2017, there was a fundamental improvement in these models.

And by the way, I want you to notice the spelling on that.

This was created by DALL·E 3.

What we found out was that we could train models according to neural network techniques.

And then in 2017, the transformer was invented.

And so you could train these enormous models and create these enormous, many-dimensional matrices of information.

Tensors.

And the thing that we've started to realize now about these models, the way they're trained, and the way that we use them, is that tensors that lie together (in other words, that are close together in the matrix) fire together.

And that's what the transformer does.

The transformer allows us to take the raw sensation that's been fed in as data and trained into recognition, and to extract that recognition in a form that we can understand.
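"Tensors that lie together fire together" is a loose analogy. One concrete mechanism inside the transformer, introduced in 2017, is scaled dot-product attention, softmax(QKᵀ/√d)·V: each query vector is compared with every key vector by dot product, and vectors that point in similar directions receive more weight when the value vectors are blended. The sketch below is a minimal pure-Python illustration under stated assumptions: tiny, hand-picked two-dimensional vectors (the "cat", "kitten", and "engine" labels are hypothetical) used directly as queries, keys, and values. A real transformer learns separate projections, uses many heads and layers, and runs on tensor libraries.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: List[Vector], keys: List[Vector],
              values: List[Vector]) -> Tuple[List[Vector], List[Vector]]:
    """Scaled dot-product attention, computed one query at a time.

    Returns the blended output vectors and the attention weights for each query.
    """
    d = len(keys[0])
    outputs, weight_rows = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        weight_rows.append(weights)
    return outputs, weight_rows


if __name__ == "__main__":
    # Hypothetical toy embeddings: "cat" and "kitten" point the same way; "engine" does not.
    tokens = ["cat", "kitten", "engine"]
    vecs = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
    _, weights = attention(vecs, vecs, vecs)  # self-attention, no learned projections
    for name, row in zip(tokens, weights):
        print(name, [round(w, 2) for w in row])
```

With these vectors, the "cat" row puts more weight on "kitten" than on "engine", which is the sense in which nearby vectors get blended together.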

Is it the same way that we learn?

No.

Is it completely analogous at the level of principle?

Yes.

And so we have this re-emergence now, at a very deep level, of Hebbian theory inside learning.

And so we now have these systems that are data-driven, that feed back into association, that transform association into recognition.

And they produce an output that we now read and interpret and then feed back into these systems.

Here's a question for you.

Where does the machine learning end and our learning begin?

And we are already driving ourselves to distraction with this question.

From the vantage point of 10 months and 26 days later, I can see that's what the panel we had last year was actually all about.

Who are we?

What's our relation to these new systems that are learning?

Where are we going with these systems?

And on this side of that 10 months and 26 days, I can see things very differently, because this isn't about one or the other.

It can't be about one or the other because of the second axiom of cybernetics.

The second axiom of cybernetics states that systems that exchange information couple.

It's called structural coupling.

They couple into a greater unity.

And that sounds subtle. It's really not, because it's true for all of humanity.

It's always been true for humanity, because we're all in each other's heads.

And we're all in each other's heads because we're all in continuous communication.

We're all learning from one another.

The output of one of us is becoming the input to another, and then it's looping back as an output from them back into us, and back out again, and back into us.

And we don't call that structural coupling, we call that socialization and civilization.

It's just that now it's happening at a scale and at a depth that make it feel like this is the first time it has ever happened.

And we are now entering into a new range of deep informational relationships with learning systems that aren't just human.

And we're learning about learning, and we're embedding that learning about learning into systems that learn from their outputs and feed us information that we then learn and from that we learn more about learning which becomes future inputs to these systems that learn and then become future outputs to us.

It all winds round and round like an Ouroboros eating its own tail.

And that pretty much describes this last 10 months and 26 days.

Drop a learning system into a world that is populated with people who are all, in their essential nature, learning beings, and each will learn rapidly from the other.

And every pass through one draws the other closer.

And this is a structural coupling that eventually begins to look like a greater unity.

When I say that this can't be about one or the other, human or machine, this is what I mean. With all of our continued, deepening interactions with these systems, and all of our learning from these systems being fed back into their design, who can say with any certainty where we begin and where they end?

And it vexes us, this not knowing.

We want it all to be very clean and very clear.

That's just the pain of a mistake, because we want to recognize this, and we can't.

What we're seeing now is the birth of a new category.

It's one that is born in learning.

It's actually a category that's about learning.

It's one that's born in relation.

It's one that's born in connection.

We don't have a name for this category.

That's much of the reason that we have difficulty seeing it.

But it's here, and in fact, it's pretty much everywhere.

And if you doubt that, think about how much has changed in the last 10 months and 26 days.

This, if nothing else, tells us where we are and where we might be going.

All right, before we talk about where we're going, let's talk about where we've been.

I want to reach back, way, way back, so that we can situate ourselves in this present moment.

I want to reach back more than 300 years.

Now, how many of you have read Neal Stephenson's The Baroque Cycle?

All 3,000 pages of it.

Bless you, so have I.

Remember the very last chapter of that book.

Bring that to mind right now.

The year is 1715.

The windswept coastline of Cornwall.

Something is happening.

Something that's going to change everything.

It's at a place called Wheal Vor.

And down there, the coal beds lie exposed by the shoreline, and the miners dig down.

They dig up coal and then inevitably the coal pit will fill with seawater when they hit the water table and there's no more coal out of that pit, so they'll move on and they'll dig another pit.

And they have done this for time out of mind.

That's how it has always been.

Not this time, because this time an inventor by the name of Thomas Newcomen has set up a massive contraption, an engine for raising water by fire, and like it says on the tin, that is exactly what it does.

Coal is burned beneath a sealed iron vessel, steam is generated in that vessel under great pressure, the pressure drives a piston, the piston moves a pump, the pump drains water from the mine as it seeps in.

And here it is: magnificent, momentous, powerful, terrifying. That is the first practical steam engine.

It opens mines that have been closed.

And a lot more coal could now be mined.

Now, coal wasn't actually in enormous demand before this.

But all of a sudden, coal is in enormous demand because it's being used to fuel more steam engines, because everywhere, from the Black Country of England, to Warwickshire, to Newcastle upon Tyne, and over into Europe as well, steam engines are pumping water out of mines.

And that led to an explosion in the production of what we would now call critical minerals.

Copper, and steel, and tin, and lead, and silver, everything that you need to build more steam engines.

And you need that, because now you have a mechanical source of power.

And now that you have that, everyone is finding a use for it.

Using it in this mill, or this machine, or this process.

Steam's taking off like a rocket.

Or it should be, except for one small problem.

It's really, truly, dangerous.

Newcomen's boiler explodes regularly.

And without warning, it turns into iron shrapnel flying out at hundreds of kilometers an hour, making a pink mist of anything that is close enough.

Because its high-pressure vessel is built with the best technology of the time, which is not very good. Even if you make it well, it will eventually fail, and under pressure it will explode, and it will shred you.

And it did this regularly.

You never turned your back on a steam engine, you tended it carefully from a safe distance.

At least, as safe as you could imagine.

Because no one was going to let a little thing like sudden death by explosive shrapnel slow down the adoption of the steam engine.

It was way too useful.

For the first time, power had been liberated from either humans or animals.

Which generally meant slave labor, or animal labor.

Just endless power for the price of a little coal to keep it all running.

There had never been such a thing.

And despite the dangers, steam persisted.

But because of those dangers, it was also resisted.

There were circumstances that could benefit from steam, but in those circumstances, it was just too dangerous to use.

And this went on for 60 years.

An entire lifetime in the 18th century.

And then James Watt came along.

And James Watt looked at the steam engine as a system.

And he realized that you could use the output of that system as its future input.

And he adapted something known as the centrifugal governor.

It allows the steam engine to self-regulate.

Because it's turning the output of the steam engine into a controlling input.

It's the first modern cybernetic system.
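The governor's feedback loop can be illustrated with a toy simulation. Everything here is an assumption for illustration: the engine model, the constants, and the names `simulate` and `step_load` are hypothetical, not historical data. A centrifugal governor behaves roughly like a proportional controller: the faster the engine spins, the higher the flyballs rise and the further the throttle closes. The sketch applies a sudden load and compares an engine with no feedback (`gain=0`) to one whose speed is fed back to set its own throttle.

```python
from typing import Callable, List

TARGET_SPEED = 100.0  # hypothetical set point (arbitrary units)
BASE_THROTTLE = 0.5   # throttle that holds TARGET_SPEED with no load
MAX_DRIVE = 200.0     # hypothetical speed sustainable at full throttle, no load
RESPONSE = 0.2        # fraction of the gap to equilibrium closed each step


def simulate(steps: int, load: Callable[[int], float], gain: float) -> List[float]:
    """Toy engine. gain=0 means no governor; gain>0 is proportional negative feedback."""
    speed = TARGET_SPEED
    history = []
    for t in range(steps):
        # The governor: the engine's output (speed) becomes its controlling input (throttle).
        throttle = BASE_THROTTLE + gain * (TARGET_SPEED - speed)
        throttle = min(1.0, max(0.0, throttle))  # a valve can only open or close so far
        equilibrium = MAX_DRIVE * throttle - load(t)
        speed += RESPONSE * (equilibrium - speed)
        history.append(speed)
    return history


def step_load(t: int) -> float:
    """A sudden extra load of 40 units from step 20 onward (hypothetical)."""
    return 40.0 if t >= 20 else 0.0


if __name__ == "__main__":
    free = simulate(100, step_load, gain=0.0)
    governed = simulate(100, step_load, gain=0.01)
    print(f"no governor:   {free[-1]:.1f}")
    print(f"with governor: {governed[-1]:.1f}")
```

Run as a script, it should print about 60.0 for the ungoverned engine and about 86.7 for the governed one. The governed engine holds much closer to its target of 100 but settles slightly below it; that steady-state offset, often called droop, is characteristic of purely proportional control such as the flyball governor.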

And suddenly now, the steam engine is twice as efficient as it has ever been.

It doesn't explode nearly so much.

It's such a big deal that we generally credit James Watt with the invention of the steam age.

Watt's name is the unit of power in our civilization.

And because he brought a reliable steam engine to the world, it transformed the world into the world we're living in today.

Now we need to think about that for a second.

I need you all to do a little bit of a thought experiment with me.

I need you to imagine a world completely without mechanical power.

If we could suddenly magic away all of the mechanical power in the world, the first thing you would notice would be a lot of aircraft suddenly dropping out of the sky.

Nearly everything that you see around you right now, except for other people, is the direct output of some process that used mechanical power.

Or it's running right now, in this moment, driven by mechanical power.

The HVAC system, for example.

Our lives and our civilization are so thoroughly intertwined with mechanical power that we no longer notice it unless it's not there when we need it.

In other words, it stages its appearance only when it disappears.

And that last bit, that traces back to James Watt, because he provided us that massive power and made it safe.

Now here's an interesting point.

James Watt was not a physicist.

He was an inventor.

All of the work that he did on the steam engine was done empirically, trial and error.

The taming of power was a trial and error affair.

And observation, experimentation, there's nothing wrong with that.

But it would be another three-quarters of a century, until the 1850s, before we started to get any theoretical understanding of this process, before we got what we today call thermodynamics.

All right, so you've got Carnot; Kelvin, who gave his name to a unit of temperature; Boltzmann; James Clerk Maxwell.

They nutted out the physics of energy transfer and how energy is transformed into work.

So it's 135 years between a coal mine in Cornwall and a workable theory of operation.

I don't think it's going to take us quite that long.

But it has to be said that what we understand about learning right now, in 2023, is not a lot.

We have a lot of practices.

Some fun tools.

They seem to work much of the time.

Sometimes, though, they just explode.

And we don't really have a lot of clarity about how to construct a governor that would enable these systems to self-regulate, feeding their outputs back in as inputs so that they can govern themselves.

That's where we are right now.

We're in that 60 years between Thomas Newcomen and James Watt.

And we will use these new powers because that power is so useful.

This is the first cognition that does not rely on human labor.

Now, it may be unreliable, but for all of that, it is unique, and it is potent, and it will be used, but, like the first steam engines, we can never really turn our backs on these systems.

They require our constant attention from a safe distance.

That's us today.

We possess incredible new powers.

They're more than a bit scary.

And we are waiting for our James Watt: someone who will take the hard yards of empiricism, of experimentation, and of cybernetics, and give us the new empirical means to make these new tools that learn entirely self-regulating.

It doesn't mean that these tools will ever be perfect.

Even today, steam engines explode.

But they will be remarkably safer than they are today.

And when we hit that point, sometime in the next decade, that intelligence, that learning about learning, our ability to learn and to learn from, and to pass that learning along, all of that is going to hit an inflection point.

And all of that learning will feed its way into the material world.

It will so thoroughly leaven our material world and our physical culture that it will feel like we are bathing in a continuum of intelligence.

Just as today we are bathing in a continuum of mechanical power that is so pervasive and invisible we now no longer notice it.

But it won't be one intelligence from a single point any more than we have a single gigantic motor powering our civilization.

Each of these is going to be a product of their own learning, their own experience, their own situations, and their own outputs fed back to them as inputs.

We are going to be part of that mix.

And there's no question that world is going to be a challenging world.

When the steam engine entered common use, people wondered whether the value of human labor would vanish.

Well, human labor changed.

How and where we use human labor has changed completely.

But it hasn't disappeared. Highly skilled human labor is in higher demand than ever before; in this country, we call them tradies.

The same is going to be true for human learning and our learning about learning.

It will be different, of course.

How could that not be true?

We're going to be navigating our own learning amidst a world that is also learning.

Learning alongside us.

That's the door that's already open.

That's the world that is already coming to pass.

And that's the lesson of this last 10 months and 26 days.

And someday, when some mind, or some communion of minds comes along to crack the theory of learning, I suspect it will be a mind in deep communion with other minds made of other matter, and it's not going to be a singularity.

It's going to be a multilarity, a greater unity in learning.

Which brings us into understanding.

We've gotten to where I wanted to get us.

But before we go, I want to point back to the beginning of this talk, to 30 years ago.

Now this moment is not that moment.

The past doesn't repeat, but it does rhyme.

And with that in mind, I have some questions to ask you.

If we could go back and revisit the choices that we made 30 years ago, how would we do it?

Would we understand that the web would write us as much as we have written it?

If we did understand that 30 years ago, what would we have done differently to avoid being imprisoned within the dark satanic mills of surveillance capitalism?

We can't keep from making new mistakes, but we can hope that we have learned enough to prevent us from repeating the old ones.

[A man sings a folk song] John Henry told his captain, a man's gotta act like a man.

And before steam drill beats me, I will die, hammer in my hand.

[Mark] Thank you.

Generative AI isn’t the biggest thing since the Web – it’s the cognitive equivalent of the steam engine, multiplying human intelligence just as the steam engine multiplied human (and animal) muscle power. Cranky, unreliable, even dangerous, the early age of steam has a lot to teach us of these early days of Generative AI…