Disruptive or defective? Towards ethical tech innovation

(bright, upbeat music) - Hello, everyone.

It's really great to be here, and before I start, I would like to acknowledge that this presentation is held on the traditional lands of the Wurundjeri people of the Kulin nation, and I would like to pay my respects to elders past, present and emerging.

The demand for innovation and artificial intelligence and "disruption" has never been higher.

And in many ways, technology has brought incredible advancements, contributing to improved quality of life and living standards, redefined communications, amplified business opportunities, and so much more.

So, in many ways, technology is reshaping our world: its economics, culture, society, and democracy, as we speak. And to be honest, all of us have benefited from playing a part in this digital revolution. However, there has been a cost to this disruption and to this progression.

"We demonstrated that the Web had failed, instead of served, humanity. The increasing centralization has ended up producing a large-scale emergent phenomenon which is anti-human."

And I'm not gonna attribute this quote right now. We're gonna go back to it later within the talk, and it will make much more sense then.

So how does technology influence us in a negative way? Well, technology, especially social media, is known to affect mental health through fostering low self-esteem, anxiety, depression, and feelings of loneliness, which feels very paradoxical because it's social media. There are so many people out there to connect with. Social media platforms have become a home to rampant harassment, bullying, victimising, shaming, trolling, and doxxing, which has driven a lot of people away, primarily from underrepresented groups.

In a way, personal data has become currency, sold to advertisers, and even weaponized to alter election results, as in the infamous Cambridge Analytica and Facebook scandal that was quite recent.

In many cases, if the product is free, as a user, you are the product.

Data breaches have become more frequent, leaking billions of users' information from such giants as Yahoo, Uber, and Equifax, and that has happened locally in Australia as well, due to poor security practices.

And some of those companies covered up those incidents without alerting those who were affected until a year later. And yes, I am talking about Uber here.

Reality can be amended, bent to commercial goals, just as HealthEngine, an online GP booking platform here in Australia, recently did by altering 20,000 patient reviews to sound positive, because they weren't sounding very positive at the time.

The lack of diversity and inclusion isn't something very specific to tech, but it is very prevalent here, and it is reported more in the tech industry than in non-tech industries.

And according to the Tech Leavers study, 78% of employees reported some form of unfair treatment. One in 10 women is subject to unwanted sexual attention, and women of colour are twice as likely to experience stereotyping compared to white women. And it doesn't end there.

A recent study by Reveal highlights massive racial and gender disparities, showing a median of 2% Latina women, 9% Asian women, 15% white women, and 0.8% black women in tech.

And this is Silicon Valley, but honestly, it's not any better in Australia.

These numbers decrease dramatically when we talk about executive roles, managerial roles, and leadership roles. So it becomes quite obvious that women, especially women of colour, aren't very welcome in engineering in this line, and in the tech industry in general.

So these are just a few of the unintended consequences of this technological growth that we're experiencing, and they are happening every single day.

So honestly, to me, we are facing a crisis. And we are trying to figure out, well, who takes responsibility for this? Who has to fix those side effects of this advancement? And the way we got here is from a place of comfort, ignorance, and complacency.

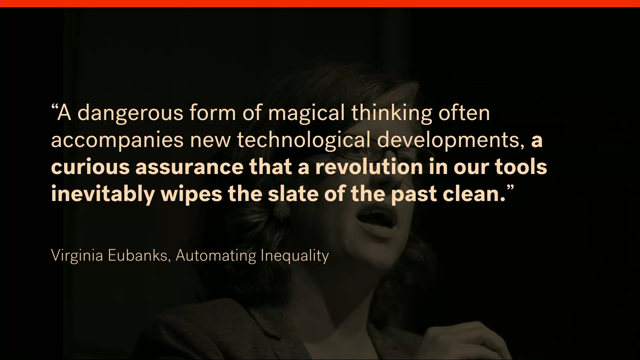

Because we forgot social and historical context. And this quote captures that really well.

"A dangerous form of magical thinking often accompanies new technological developments, a curious assurance that a revolution in our tools inevitably will wipe the slate of the past clean." Which isn't and hasn't been true.

So we're gonna talk about ethics today, and ethical principles. We're gonna talk about defending and systematising moral, righteous conduct, because I personally find this question very challenging and difficult.

What does it mean, ethical? What does it mean to be good in our industry and in general? As a person, as a leader.

As a developer, designer, freelancer, or CEO, whoever you are.

So let's start with human rights and democracy, because it's not a question of when, but how, technology's currently advancing democracy and human rights.

So as much as tech can serve democracy and be very empowering, it can also serve as a tool for authoritarian governments and regimes to censor the press, track down dissenting citizens, and enact legal repercussions.

And that actually happened, for example, in Turkey. A few people who were expressing criticism of the current prime minister were actually put on trial for this, for their tweets that weren't very favourable.

Facebook discovered thousands of inauthentic ads affiliated with the 2016 election, meant to spread misinformation.

And we all know how that went.

Many algorithms, including those of Google and Facebook, help create so-called filter bubbles, and those filter bubbles prevent us from seeing disagreeable content.

And that feeds into our confirmation bias, so it makes us unable to make informed choices as citizens, and also challenge fake content.

And as far as I know, we kind of live in a post-fact era.

Human rights, especially those around privacy, freedom of expression, and fighting discrimination, are challenged every single day.

And digital technologies that we work on should be used to harness collective intelligence, and to support and improve the civic processes on which democratic societies depend.

So I have a little list of guidelines and rules in this spectrum.

Ethical technology should respect and extend human rights. It should serve and support democracy, fight against the spread of misinformation, and encourage civic engagement.

The next ethical principle I would like to talk about is well-being.

We talk about burnout and mental health quite a lot these days.

And we talked a little bit already about those negative effects that social media can have on our well-being.

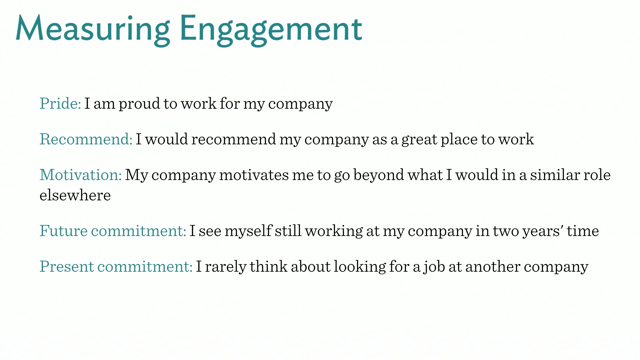

And there have been numerous studies that show that cognitive capacity decreases as hyper-connectivity fosters attention deficit disorders. And those social media services, and technology in general, tap into creating addiction, social alienation, anxiety, depression, stress, trouble sleeping. These are quite common side effects of this digital advancement and being really connected.

And technology should really be quite a mindful companion to our lives, rather than something that's disrupting and hijacking our attention through not only complex interfaces, but also tricks to feed into addiction. And if you're interested in finding out about the concept of calm technology, I would strongly recommend Amber Case's book that's entitled Calm Technology, or checking out the Center for Humane Technology.

So ethical technology should require minimum attention, inform and create calm instead of distress, work in the background, and respect societal norms.

The next ethical rule is safety and security. And that can span over many different dimensions, including reliability of software and protection against hacking and ransomware, as well as physical and emotional safety of the users of the software.

Data breaches can have catastrophic consequences. Just in Australia alone, in 2014 and 15, over 700,000 people were affected by data breaches.

That's a lot of people if you ask me.

And those details can be used to do many malicious things, such as opening bank accounts and obtaining mortgages, putting the victims of breaches at risk of police raids, immigration issues, and financial bottlenecks for years to come. And in other scenarios, this data might be used to put victims of harassment and domestic abuse, activists, and whistleblowers at an incredible risk of being doxxed or swatted.

And if you don't know what swatted means, it means making a hoax call to the police so they come to your house, in the hope that you get hurt in some way or another.

So there are actually really grave consequences to data breaches.

So technology, in terms of security and safety, should respond promptly to crises, eliminate single points of failure, invest in cryptography and security, and most importantly, protect the most vulnerable people, which in many cases are underrepresented groups.

Tech workers and organisations should be accountable for their creations and liable for the potential damage they cause to society, and we haven't really done that well on that front so far.

And that social impact should be estimated and measured on a continuous basis, with the same level of scrutiny and importance as delivering to your investors or pushing new feature releases.

A number of algorithms rely on AI and machine learning, but it is humans who design them, and it is humans who are putting them in place.

So we should take ownership of the systemic flaws of those algorithms. And it is really crucial to understand this fundamental distinction of who is responsible.

Recently, Margaret Stewart, who's the VP of design at Facebook, very timely, spoke about four quadrants of design responsibility and how our liability for our designs rapidly grows as a product becomes an ecosystem, as it turns from one user to a thousand to a million or several millions, which is very relevant to Facebook, as they're absolutely massive.

But to be honest, no matter where you fall on this spectrum, whether it's just a side project, a very small business, a start-up, or a massive enterprise, it's necessary to evaluate potential impact. Especially the negative one, but the positive as well.

So ethical technology should be aware of diverse social and cultural norms. Create policies for accountability.

Collaborate with lawmakers to advance regulations, because honestly they're so far behind technology that there are almost no laws to protect us, and there is nobody to work on it, because they just don't move at the same pace as we do.

So it's necessary that we have this link of collaboration. And comply with national and international guidelines.

Data protection and privacy.

So we talked about security and safety a little bit, but in the age of massive data collection, the right to protection and control of our personal information is also challenged. There is a portal called Just Delete Me, a directory of web services that showcases how difficult it is to delete your account and get rid of your data.

And it is surprisingly difficult.

You would be surprised how many services actually prevent you from deleting your account, so you have no control of your data whatsoever. And open- and closed-door surveillance has kind of become a norm, challenging human rights, and that is even happening in Australia.

So, a few weeks ago I heard that Peter Dutton called for the right to access emails, bank records, and text messages of citizens without a warrant or any consent.

To me, that's horrifying.

So data certainly can be used in our work to enhance the user experience, provide useful services, but it can also be very easily weaponized by governments or malicious individuals.

Even creators of facial recognition technology are well aware of how dangerous the use of that technology could be, so some of them actually declined to provide their services to governments.

Sensitive data should be accessible to the users, modifiable, restricted, and easily deleted. And Maciej Cegłowski, who you've probably heard of as Pinboard, suggested in his talk, "Don't collect it. If you have to collect it, don't store it. If you have to store it, don't keep it." And I think this is a very sensible call for minimal approaches to data collection and mindful, short storage times.

So ethical technology should collect only the data that is absolutely necessary for operation and give full control of this data to the users, including permanent and swift deletion. And more importantly, allow anonymity, which is really important, again, to activists, whistleblowers, and members of underrepresented groups especially.
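
As a rough illustration of "don't collect it, don't store it, don't keep it", here is a minimal Python sketch. Everything in it is an assumption made for the example rather than something from the talk: the field allow-list, the 30-day retention window, and the `Record`, `minimise`, `purge_expired`, and `delete_user` names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical values: the talk does not prescribe specific fields or durations.
NEEDED_FIELDS = {"user_id", "email"}
RETENTION = timedelta(days=30)


@dataclass
class Record:
    user_id: str
    data: dict
    stored_at: datetime


def minimise(submitted: dict) -> dict:
    """Don't collect it: keep only allow-listed fields at intake."""
    return {k: v for k, v in submitted.items() if k in NEEDED_FIELDS}


def purge_expired(records: list, now: datetime) -> list:
    """Don't keep it: drop every record older than the retention window."""
    return [r for r in records if now - r.stored_at <= RETENTION]


def delete_user(records: list, user_id: str) -> list:
    """Give users control: remove all records belonging to one user."""
    return [r for r in records if r.user_id != user_id]
```

In a real service the purge would run on a schedule and deletion would also have to reach backups and third parties; this sketch only shows the shape of the policy.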

Transparency.

Ethical design is transparent and has nothing to hide. Disclosing information on how services operate, how they work, and how data is gathered, stored, and used both builds trustworthiness and mitigates misuse. Often users aren't very well informed about how applications work and how that affects them. Software, for many people, is a black box, and its decisions cannot be explained.

And that lack of clarity increases the risk and the magnitude of harm, as well as lowers the chances of enforcing any accountability and responsibility. Because there are no governing bodies over privately-held tech companies.

There's no one here to kind of keep us in place. Keep us in check.

And transparency can be implemented in numerous ways. Through policies, processes, critical assessment. I think it's really important to responsibly disclose abuse of software.

Establish clear rules for reporting and accountability. How can I report a breach? How accountable are you? What are you gonna do? How are you gonna respond? And it's important to think about mission and value statements.

Misuse and bias awareness.

So you might have heard of Eric Meyer, who is a very prominent author of countless resources and tools on front end engineering and design. So a few years ago he wrote this article entitled Inadvertent Algorithmic Cruelty.

And he described his horrible, horrible experience of Facebook Year in Review treating the death of his daughter Rebecca as the highlight of his year.

In 2016 Microsoft released Tay, which was an artificial intelligence chat bot on Twitter which in less than 24 hours became a massive racist and misogynist, and had to be taken down.

Software called COMPAS, which is used in America to predict future crimes and the chance of recidivism, has been shown by ProPublica to falsely flag black defendants as future criminals at twice the rate of white defendants, while simultaneously mislabelling white defendants as low risk. And these are only three examples.

I could go on for an hour, but yeah, these are only three examples that show algorithmic bias. Because what we forget is that algorithms, at the core, are thoughtless, because software is written by people, and it reflects their ideology, their biases, their thought processes, and their beliefs. And so very often, we only test for positive, successful use cases, forgetting about malice, misuse, empathetic design, and more importantly, the social context we are working in.
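
One way to look for the kind of disparity described in the recidivism example is to compare false positive rates across groups: of the people who did not reoffend, what share in each group was flagged as high risk? The Python sketch below is only an illustration under stated assumptions. The `false_positive_rates` function and the row layout are hypothetical, and it is not the methodology of the investigation mentioned above.

```python
from collections import defaultdict


def false_positive_rates(rows):
    """Return the false positive rate for each group.

    rows: iterable of (group, flagged_high_risk, reoffended) tuples.
    The rate is the share of people who did NOT reoffend but were
    still flagged as high risk. Groups with no such people are omitted.
    """
    flagged = defaultdict(int)
    did_not_reoffend = defaultdict(int)
    for group, predicted, actual in rows:
        if not actual:
            did_not_reoffend[group] += 1
            if predicted:
                flagged[group] += 1
    return {g: flagged[g] / n for g, n in did_not_reoffend.items()}
```

A large gap between groups in the returned rates is a prompt to investigate further, not proof of the cause. It is one of several fairness measures, and they can disagree with each other.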

Because this software doesn't magically learn on its own. We teach it.

Technologies and their design do not dictate racial ideologies.

Rather, they reflect the current climate.

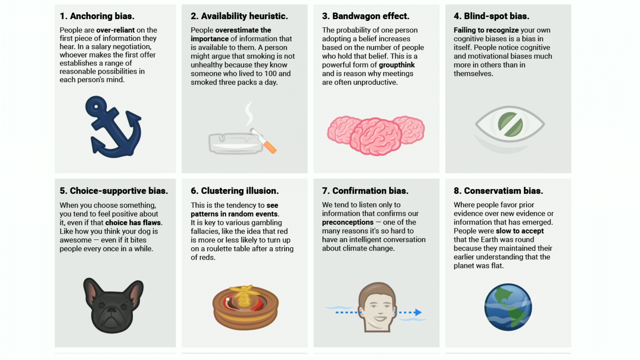

So ethical technology should be aware of and combat the unconscious bias that we already talked about today.

Test for misuse and malice.

What are the failure cases? What is the worst thing somebody could do with this piece of technology? And fight against harmful societal inequalities that are very prevalent.

And last but not least, diversity and inclusion. The business case for diversity has never been clearer. So, if somebody needs it, investment groups with shared ethnicity were prone to be less successful by 26 to 42%.

Teams that are diverse are 46% more innovative and creative. And again, I could go on about those stats forever, but if our teams, organisations, conferences, friendship groups, were less homogeneous, technological redlining, which is a form of algorithmically reinforcing oppressive societal norms and enacting racial profiling, might have been way less significant.

Because no matter what your background is, you deserve equality and equity.

And that's not happening currently in tech. So if our systems aren't currently trying to prevent exclusion, aren't trying to fight exclusion, they're reinforcing it.

So ethical technology is inclusive of all people, prioritises diverse teams, organisations, and events, and prevents technological redlining.

So we talked about a few guidelines, but what are some tools that you could use? Some prompts to help you start with ethical technology and implementing all of those values?

Well firstly, since we're on the book subject today, there are some excellent books that were published, especially on algorithmic bias, such as Weapons of Math Destruction, Algorithms of Oppression, Technically Wrong, or Automating Inequality, that will shed a lot of light on what algorithmic bias is, how it manifests itself, what you can do about it, and how you can prevent it.

There are some more fun projects, such as the Tarot Cards of Tech, which is really just a deck of cards that will prompt you with interesting questions in the ethical sphere, and will guide you to make more ethical choices in design or software engineering.

There are also more committed applications and workshops and canvases.

For example, the how to practise ethical design workshop, which is quite committed, but you can go through every single step with your team and brainstorm and really make sure that your application or design is ethical. There are also other apps.

There's one called So You Want to Build an Algorithm, and one called Making an Ethical Decision: apps that will actually ask a bunch of questions about your assumptions and your context and give you answers, which is very helpful. There are also a lot of manifestos, pledges, and value statements.

In my opinion, without any commitment and action, they don't mean a lot, but they can be a great source of inspiration and education, so if you wanna check one of those out, I would recommend the Ethical Design Manifesto by Ind.ie, or the Designers Oath, just to name a few. There are really countless examples out there. And don't worry about writing this down, because I will publish my slides and everything will be in them.

So I've mentioned a few tools, and I mentioned this quote at the very beginning about how the web has become anti-human. So I want to go back to it.

And this actually is Sir Tim Berners-Lee, and for those who don't remember or don't know, he is the person who literally invented the World Wide Web. And he said, in a recent interview for Vanity Fair, that he is very disappointed and devastated to see what the web has become.

But on the other hand, he's also feeling hopeful about the future. He thinks that we can turn the ship around and have this people-first, humane technology. And frankly, I am too.

Because I can see the shift towards humane technology, but it needs your help, because our enemy is complacency and doing nothing.

And especially in a room full of managers, leaders, we have to remember that we are all responsible for what the web has become, and will become in the future.

So for this reason, it's crucial to understand those ethical challenges that we're facing. What does ethical mean? What values do we have? Because just because we have the capacity to design or develop all of those amazing things, it doesn't mean that we should.

Because the focus on chasing disruption makes us forget that all of those digital tools are embedded in old systems of power and privilege, and those systems have to be challenged.

Advancing technology should not compromise humanity. Because the web should enhance our lives, and fulfil our dreams, not crush our hopes, magnify our fears, and deepen our divisions. So I have one thing for you today.

I invite you to think carefully about the repercussions of your work on the web platform. And I invite you to take action.

I invite you to think about ethical engineering when you go back to work.

And I invite you to build a better future, together. Thank you.

(applause) (bright, upbeat music)

Technology has a profound effect on humankind. But the exponential progress resulted in negative societal impact—from data breaches, promoting inequality to rampant harassment. Tech is incredibly susceptible to bias and manipulation, which has led us astray from humanity towards profitability and exclusion. Disruption cast a shade on responsibility for our creations.

In this talk, you will see examples of algorithmic bias, learn how to understand the impact of exclusionary decision-making and implement actionable strategies to combat it. Come and learn about pitfalls of disruption and how to design products, business models and cultures for ethical innovation.