Falling off the Edge: Practical Uses for Edge Computing

Introduction to Edge Computing

Alexander Karan, a senior engineer at Atlassian, introduces the topic of edge computing, emphasizing its importance and the need to understand its trade-offs. He alerts the audience about the evolving nature of the field due to continuous product releases by companies.

Defining Edge Computing

Edge computing is described as an approach to architecture, focusing on the location sensitivity and distributed programming patterns. It's not about specific technologies but rather about running code as close as possible to the end user for efficiency.

Historical Context and Evolution of Edge Computing

The history of edge computing is traced back to the early internet days, with the introduction of the IBM 360/91 and the development of ARPANET. The evolution of edge computing is discussed, highlighting the role of CDNs and the progression to early edge computing services in the 2000s.

Relevance of Edge Computing in Modern Technology

The talk shifts to the significance of edge computing in contemporary technology, referencing the rise of smartphones and IoT devices. The role of Apple in offloading computational work to devices and how it relates to the concept of edge computing is also discussed.

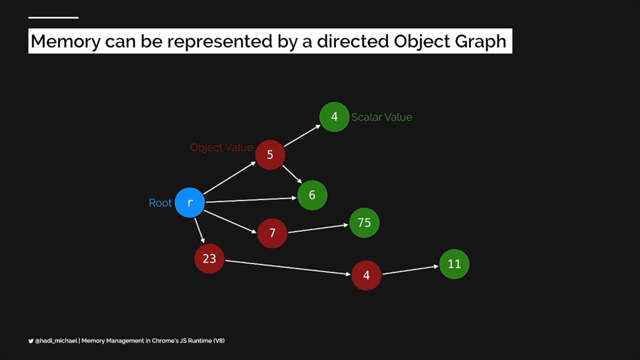

Edge Functions and Their Capabilities

Edge Functions are introduced as a blend of serverless functions and CDNs, offering advantages in terms of speed and efficiency. The concept of edge runtime and its variability across different providers is explained, using examples like Cloudflare Workers and AWS Lambda.

The Need for Edge Computing

The increasing volume of data and the necessity for fast data processing are cited as reasons for the growing need for edge computing. The talk covers how edge computing can alleviate network strain and improve data distribution and program efficiency.

Practical Use Cases of Edge Computing

Karan delves into various practical applications of edge computing, discussing its use in web development frameworks like SvelteKit and NextJS, and how it enhances user experience with faster response times and dynamic content delivery.

Edge Computing with Databases

The challenges and solutions of integrating databases with edge computing are explored. Examples include MongoDB Atlas and PlanetScale, which facilitate database interactions in edge environments. The talk also touches on the strategic placement of compute resources relative to data sources.

Expanding Capabilities and Limitations

Karan discusses the expanding capabilities of edge computing, including the use of cron jobs and API building. He also addresses the limitations and the importance of choosing the right tools and architecture for specific use cases.

Concluding Remarks and Key Takeaways

The presentation concludes with key takeaways about edge computing, emphasizing its flexibility and the need to consider data location and compute proximity. Karan invites the audience to engage further through his social media and blog.

Yeah, so I'm Alexander. I'm a senior engineer at Atlassian, I work on the Cloud FinOps team, and I'm from Perth, the best state.

Anyway, that's another story for another time.

Anyway, falling off the edge, practical uses for edge computing.

I love edge computing, it's really great, but I want to start the talk with a warning.

So two warnings.

There is no silver bullet, golden tool, best way of doing things.

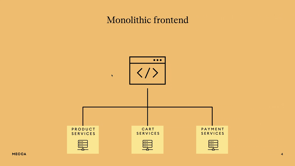

Take the whole serverless versus traditional computers debate, monorepos versus lots of repos, or monoliths versus microservices.

It's always about trade offs, right?

And I just want you to keep that in mind.

Because as I start doing this talk, I'm going to get really excited about stuff, and be like, yeah!

And you might walk away thinking, I need to switch everything to the edge.

And I don't want you to take that away.

That's not the takeaway I want.

The second thing is I've given this talk three times now and it keeps changing between each session because all the companies keep releasing new stuff.

Personally it feels like they're ganging up on me and they're like, there's this random dude in Australia, let's keep pushing products and he has to change his talk.

But I'm sure that's not the case either.

Let's dig into it.

What is edge computing, right?

So this is actually a really key thing to understand.

It has nothing to do with a particular technology, language, or stack.

It has nothing to do with that.

It's an approach to architecture, right?

So it's location sensitive.

It's about where you place things in the network.

And it's a distributed programming pattern, right?

Nothing to do with JavaScript, although that does feature a lot.

Nothing to do with a particular provider or anything.

It's architecture.

So, if we sum it up, it's running code as close as possible to the end user.

Although I've probably simplified that a bit too much now: it's also globally distributed, but at its core it's running code as close as possible to the user.

You've probably seen things like this from Vercel and SvelteKit.

We render this page using an edge function, and we do some fancy stuff with location, which we'll get to in a minute.

But actually, to understand edge computing, we have to go back in time, before I was born, maybe not before some of you were born, but anyway, so let's have a look.

So we need to talk about the OG server, the IBM 360/91.

Look at all those buttons.

I just want one so I can press them.

Anyway, so if we think back to the original internet that connected four universities in America, I believe the shortened name was the ARPANET, though I might be pronouncing that wrong.

So anyway, the Network Working Group released an RFC that detailed how users on the ARPANET could use production services from an IBM 360/91.

And we've changed the way servers are built, the software we run, and how we provision them, but those basic protocols for how the internet and servers work are still used today.

So as the internet started to grow, it ran into issues, especially around the 90s, so CDNs, or Content Delivery Networks, were created.

And the idea was that we could serve static content closer to end users.

So dynamic content would be rendered, or created, or whatever, on the origin server.

And static content, such as files, images, CSS, HTML, would be delivered from CDN servers that were placed closer to end users.

So this is actually where edge computing starts to come to life.

Because here we're deliberately placing things closer to end users.

But it's not quite edge computing yet.

These networks actually evolved in the early 2000s, when I was still in high school, and that led to the first edge computing services.

Microsoft Windows, in the 2000s, came out with a file system where you could access a file that looked like it was shared on one server, but it was actually stored across multiple servers, to make the load on any one server a lot less.

Amazon CloudFront came out in the mid 2000s, and they had a CDN that not only allowed you to deliver static content, but dynamic content.

So these were the first edge computing services.

But still not what you're probably used to seeing today.

But before we jump into that, I just want to have a slight tangent for two minutes.

And you're probably all going to be like, what's he talking about?

This makes no sense.

But I really want to hammer home the fact that edge computing is architecture, not software or a stack, right?

Rise of the iPhone.

The iPhone changed everything, right?

We've all got smartphones in our pockets, with more computing power than most base laptops, right?

Now, Apple's a really good example of this because they choose to do a lot of encryption and biometrics on the phone.

They choose to do this code on the phone rather than their servers for a lot of reasons, not just this reason I'm about to say, security being one of them.

But this offloads work from their servers and they choose to do it on the iPhone, which for them is the start of their network.

It means users get faster response times as well as all the security stuff.

I can argue that this is a case of edge computing.

Now, you could also argue that I'm wrong.

That's programming.

It's all up for debate.

And we can take this further with IoT or Internet of Things.

There's a word that loses meaning the more you say it.

But anyway, so we've all got Siri speakers or Google speakers, temperature sensors, door locks, cameras, you name it.

And they're all processing data and sending it to the cloud.

But now, they're running ML models, they're doing fancier stuff with this data on the actual device.

And these companies, they manage, maintain, and ship and update that code.

They're choosing to run it on the end device, close to the user, rather than on their servers.

So once again, that's actually an example of edge computing because it's architecture.

But anyway, let's forget about that and jump into what you care about, as web developers or whatever you like.

Edge functions, that's where it's at. So we've got places like Netlify. Netlify Edge: "an advanced global platform for powering web experiences that are fast, reliable, and secure. Make your websites feel nearly instant for every visitor."

I actually think that marketing spiel is the closest one out of all of them that doesn't blur and muddy the water about what it actually does.

And then we have things like Cloudflare Workers, exceptional performance, reliability, and scale.

Deploy serverless code instantly.

What are edge functions?

Before we can understand edge functions, we have to understand serverless functions, right?

What is a serverless function?

With a traditional server, you provision a server, upload your code, and you pay for every minute that it runs.

With serverless functions, you normally write maybe an endpoint for an API, or one particular page, you upload it to your serverless provider, and you only pay for the minutes that function is running.

Most of the providers actually give you, like, a million free calls a month, so maybe you don't pay anything at all, but that's the sort of difference, right?

That's a serverless function.

Edge functions are like serverless functions and CDNs got together and had a baby, right?

Edge functions are serverless functions.

They're just being run close to an end user.

And they have some advantages over serverless functions, right?

Downside of serverless functions is they often suffer from cold starts.

And this is where, if the function hasn't been called for a long time, when it gets called it has to provision everything to make it work, and this leads to a delay in its response.

But then once it's been called, it gets cached, and it sticks around for a while, but if traffic increases, and we have to spin up another instance of the function, we get the cold start again.

Or, if enough time passes, it disappears from that cache.
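As a rough illustration of the cold-start behaviour described above, here is a toy model of a warm-instance cache. It is a sketch under stated assumptions, not any provider's real scheduler: the `WarmPool` class, its idle timeout, and the timings are all hypothetical.

```typescript
// Toy model: an invocation is "warm" if a free, recently used instance exists,
// otherwise a new instance must be provisioned ("cold").
type StartKind = "cold" | "warm";

interface Instance {
  busyUntil: number; // time (ms) at which the instance finishes its current call
}

class WarmPool {
  private instances: Instance[] = [];
  private idleTtlMs: number;

  constructor(idleTtlMs: number) {
    this.idleTtlMs = idleTtlMs;
  }

  invoke(nowMs: number, durationMs: number): StartKind {
    // Drop instances that have sat idle longer than the (assumed) timeout.
    this.instances = this.instances.filter(
      (i) => nowMs - i.busyUntil <= this.idleTtlMs
    );
    // Reuse an instance that is not currently handling a call.
    const free = this.instances.find((i) => i.busyUntil <= nowMs);
    if (free) {
      free.busyUntil = nowMs + durationMs;
      return "warm";
    }
    // Nothing free: provision a new instance and pay the cold start.
    this.instances.push({ busyUntil: nowMs + durationMs });
    return "cold";
  }
}

const pool = new WarmPool(60000);
const first = pool.invoke(0, 100);           // nothing cached yet
const second = pool.invoke(200, 100);        // first instance is free again
const concurrent = pool.invoke(250, 100);    // first instance still busy
const afterIdle = pool.invoke(200000, 100);  // everything has timed out
console.log(first, second, concurrent, afterIdle);
```

The three cold results correspond to the three cases in the talk: the first call, a traffic spike that needs another instance, and a call after the cached instance has expired.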

Now, edge functions don't suffer from cold starts.

Traditionally they don't, but as engineers, I'm sure we can code them in.

There's always that sort of, it could if we do things badly.

That's what an edge function is.

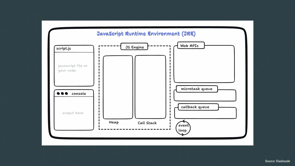

We're just going to cover a few more words and what they mean, and then we'll dig into why we need it, and then we'll dig over into the use cases.

This comes up a lot.

Edge runtime.

I hate this word.

And that's because it doesn't really mean anything.

There's been lots of arguments on Twitter.

Oh, yeah, edge runtime.

It's what it's all about.

But people are like, it doesn't mean this.

It doesn't mean that.

If someone mentions this to you, the best thing to think about is edge runtime is about what provider am I using to run my Edge functions, and what is the runtime environment they provide?

That's the best accurate description, because they're all different.

Most of the time it's JavaScript, but a lighter version of JavaScript: think less NodeJS and more browser runtime. Let's dig into that a bit deeper.

If you look here, we've got AWS Lambda, normal serverless functions, NodeJS.

When you edge optimize them, NodeJS.

But then you have things like Cloudflare Workers, which is a V8 isolate for both regional and edge deployments.

And Deno, which uses Deno Deploy, it's just Deno, right?

That's their JavaScript runtime, and it works on the edge.

But there's something to dig in a bit deeper here.

AWS Lambda suffers from those cold starts, right?

But Cloudflare Workers and Deno don't necessarily have that issue.

And we can dig into it with Cloudflare Workers quite easily, right?

Traditional serverless providers, they use a containerized or virtual machine to run an instance of a language runtime, okay?

Cloudflare Workers, on the other hand, pay the cost of the JavaScript runtime once at the start of a container, and this allows them to run essentially limitless scripts by running a V8 isolate for each function or worker call, which has little to no overhead.

And this means that they can start a hundred times faster than Node running in a traditional containerized or virtual machine.

And it also consumes way less memory, right?

So it's this sort of instant compute that we're talking about.

Now, it may seem I'm punching down a little bit on things like AWS Lambda, and it's true.

I am.

But it's also really good, and you can also optimize Lambdas to be really great as well.

We shouldn't always punch on technologies, but now and then it's fun to make a little bit of fun.

The final place that you might have heard of the Edge Runtime is Vercel, because Vercel decided to get involved and was like, let's confuse everybody some more, and we'll create an open source project called Edge Runtime.

So the Edge Runtime is a project where you can run their version of the Edge on your local machine, and it's all about creating a general API layer for JavaScript that is the same no matter where you're running.

And the key difference there is Edge for them doesn't refer to location, because they were like, let's make it more confusing.

It refers to that instant compute.

That no cold start for serverless environments.

And it's part of the WinterCG group, which is all about, once again, creating that general API layer for JavaScript, so you have the same APIs no matter where it's running, whether it's the browser, server, edge, embedded applications.

We all know how long it took to get Fetch in Node, right?

So we want that to disappear.

We want to have the same APIs everywhere.
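To make the "same APIs everywhere" idea concrete, here is a minimal sketch of a handler written only against Web-standard globals (`URL`, `Request`, `Response`) rather than Node-specific modules. The `/hello` route and the `handle` function name are hypothetical, and how a handler like this gets registered differs between providers.

```typescript
// A request handler that relies only on Web-standard APIs, so the same
// function can, in principle, run in a browser-like edge runtime or on a server.
function handle(request: Request): Response {
  const url = new URL(request.url);

  if (request.method === "GET" && url.pathname === "/hello") {
    const name = url.searchParams.get("name") ?? "world";
    return new Response(JSON.stringify({ message: `Hello, ${name}!` }), {
      status: 200,
      headers: { "content-type": "application/json" },
    });
  }

  return new Response("Not found", { status: 404 });
}

// Exercise the handler locally with synthetic requests (no network involved).
const okResponse = handle(new Request("https://example.com/hello?name=Perth"));
const missingResponse = handle(new Request("https://example.com/other"));
console.log(okResponse.status, missingResponse.status);
```

Because nothing here imports from `node:` modules, the handler avoids the class of portability problems the talk alludes to with Fetch in Node.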

Why do we need the edge?

Simple answer is data and speed.

I don't want to be one of those doomsday data-geddon people or whatever.

But the world's data is set to grow to 175 zettabytes by 2025.

So that's a lot of data, right?

And although network technology has improved, there is a small chance that cloud providers won't be able to guarantee sufficient transfer rates and bandwidth if we keep growing like this.

Edge computing is one of the answers to this problem.

By distributing our data and our programs, we can actually lower the strain we put on the network.

Yeah, let's look at an example just to give you an idea, because I think that's the best way to see the difference.

So here's an AWS Lambda that I wrote.

It just returns a half baked JSON API response.

I'm sorry for not finishing it properly.

But you get the gist.

And then I wrote the same code, in JavaScript as well, but deployed it on Deno Deploy.

The AWS Lambda is in Sydney, and obviously Deno Deploy sent the code all over the world.

So I'm from Perth, and I did this test in Perth to start with, and there was hardly any difference between the two.

Because the nearest edge location in Perth is just as far away as Sydney.

Because no one cares about Perth.

So I was like, oh, this isn't a good test, so I turned my VPN on, and I pinged from Germany.

Now, of course, my request has to go to Germany, and then to Sydney, then back to Germany, then back to me.

But when I hit the edge function, it goes to Germany, hits the nearest edge location, and comes straight back, right?

So you can see that it's faster.

And the same again when I do it from the US.

What you're seeing here is that if you've got globally distributed users, or just geographically distributed users, using the edge is faster most of the time, unless you're in Perth. They get a faster response time.
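The effect in this comparison can be approximated with a back-of-the-envelope model. The sketch below estimates a lower bound on round-trip time from great-circle distance, assuming signals travel at roughly 200 km per millisecond in fibre. The coordinates are approximate, the edge location in Frankfurt is a hypothetical choice, and real latency is higher because of routing and processing; these are not the measurements shown in the talk.

```typescript
interface Point {
  lat: number;
  lon: number;
}

// Great-circle distance between two points using the haversine formula.
function distanceKm(a: Point, b: Point): number {
  const earthRadiusKm = 6371;
  const rad = (deg: number) => (deg * Math.PI) / 180;
  const dLat = rad(b.lat - a.lat);
  const dLon = rad(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) * Math.sin(dLat / 2) +
    Math.cos(rad(a.lat)) * Math.cos(rad(b.lat)) *
      Math.sin(dLon / 2) * Math.sin(dLon / 2);
  return 2 * earthRadiusKm * Math.asin(Math.sqrt(h));
}

// Assumption: about 200 km per millisecond in optical fibre.
const FIBRE_KM_PER_MS = 200;

// Lower bound on round-trip time: there and back at fibre speed.
function minRoundTripMs(a: Point, b: Point): number {
  return (2 * distanceKm(a, b)) / FIBRE_KM_PER_MS;
}

// Approximate coordinates.
const sydney: Point = { lat: -33.87, lon: 151.21 };
const perth: Point = { lat: -31.95, lon: 115.86 };
const berlin: Point = { lat: 52.52, lon: 13.4 };
const frankfurt: Point = { lat: 50.11, lon: 8.68 }; // hypothetical edge location

const berlinToSydneyMs = minRoundTripMs(berlin, sydney);
const berlinToEdgeMs = minRoundTripMs(berlin, frankfurt);
const perthToSydneyKm = distanceKm(perth, sydney);
console.log({ berlinToSydneyMs, berlinToEdgeMs, perthToSydneyKm });
```

The model also reflects the Perth caveat: if the nearest edge location is about as far away as the origin, moving to the edge buys very little.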

Let's dig into it.

So now you've got a bit of the history, we've got all that boring terminology out of the way, and we've covered why we might need it.

So tell me, how can we use it?

Not everything needs to be on the edge, of course.

But I'm not one of those YouTubers who's like, I'm moving everything to the edge.

I'm not one of those people in the Ruby community that's like, I'm building my own server.

I sit comfortably in the middle.

I'm going to dig a lot into SvelteKit and NextJS here.

That's because if you're not using those, you're clearly doing web development wrong.

No, that's not it.

It's not at all.

It's more that that's just what I use every day.

But you can pull this off with Nuxt, Remix, Qwik, there's too many web frameworks now, but there's a lot, so pretty much all of them.

Yeah, so Netlify and Vercel, you can use Edge functions to render SvelteKit or NextJS, right?

So traditionally you would have used serverless functions.

With SvelteKit we use functions to render the page.

So if I render the page with an edge function, it's rendered closer to the user, giving them that faster response time.

And come back to this, right?

So here, the page is rendered with an edge function.

But we also use something called edge middleware with Vercel.

So edge middleware is code that intercepts the request and runs before the page does.

Like middleware in any other application you've built.

So what we're doing here is we're intercepting the request, getting the location and the IP address from the request, and then dynamically changing the render of the page.

But because we're rendering it so close to the user, they get a really fast response time as if the content was static.
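The middleware flow described here (intercept the request, read the location, change what gets rendered) can be sketched as two plain functions. This is a simplified illustration, not Vercel's middleware API: the request shape, the greetings table, and the function names are hypothetical, and the sketch assumes the platform exposes the visitor's country in an `x-vercel-ip-country` header.

```typescript
// Simplified stand-ins for a real request and render context.
interface IncomingRequest {
  path: string;
  headers: Record<string, string | undefined>;
}

interface RenderContext {
  path: string;
  country: string;
  greeting: string;
}

// Hypothetical per-country content.
const GREETINGS: Record<string, string> = {
  AU: "G'day",
  DE: "Hallo",
  US: "Howdy",
};

// Runs before the page: derives location-specific data from the request.
function geoMiddleware(req: IncomingRequest): RenderContext {
  const country = (req.headers["x-vercel-ip-country"] ?? "unknown").toUpperCase();
  const greeting = GREETINGS[country] ?? "Hello";
  return { path: req.path, country, greeting };
}

// The page render uses whatever the middleware attached.
function renderPage(ctx: RenderContext): string {
  return `<h1>${ctx.greeting} from ${ctx.country}</h1>`;
}

const html = renderPage(
  geoMiddleware({ path: "/", headers: { "x-vercel-ip-country": "AU" } })
);
console.log(html);
```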

Which is great, right?

So we all want those faster response times.

And we can take this further, right?

So we've all done A B testing at some point, I'm sure.

It's a pain.

We have to create multiple versions of a page, or a feature, multiple requests to a server.

Maybe a bunch of client side code that just bloats the front end.

Now, we can cache each version of that feature and page at the edge, and we can just quickly swap between them with hardly any difference in time for the end user.

And that's great, right?

That's fantastic.

Fast A/B testing.
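One way to picture A/B testing at the edge is deterministic bucketing: hash a visitor identifier, pick a variant, and serve the already-cached page for that variant. The sketch below assumes a visitor ID is available (for example from a cookie); the hash is an FNV-1a-style hash over UTF-16 code units with a murmur3-style final mix, chosen for brevity rather than because any provider uses it, and the cached pages are hypothetical.

```typescript
// FNV-1a-style 32-bit hash followed by a murmur3-style finalizer so that
// small input changes spread across all bits.
function hash32(input: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h ^= input.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0; // force an unsigned 32-bit result
}

// The same visitor always lands in the same variant.
function pickVariant(visitorId: string, variants: string[]): string {
  if (variants.length === 0) {
    throw new Error("at least one variant is required");
  }
  return variants[hash32(visitorId) % variants.length];
}

// Hypothetical pre-rendered pages cached at the edge, keyed by variant.
const cachedPages: Record<string, string> = {
  control: "<button>Buy</button>",
  experiment: "<button>Buy now</button>",
};

function serve(visitorId: string): string {
  return cachedPages[pickVariant(visitorId, Object.keys(cachedPages))];
}

console.log(serve("visitor-123"));
```

Because the choice is a pure function of the visitor ID, no extra round trip or client-side script is needed to decide which version to show.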

And we can take this further.

Maybe you've got configs.

We can store configs on the edge now.

I'm sure we've all had a promotion or released a new feature, traffic has increased, and something goes wrong.

Just, click a button in the config, and all of a sudden, they've got a maintenance page, right?

And I'm gonna borrow a term from Vercel here.

One, because it's ridiculous, and two, because it conveys what I'm trying to say: give your users dynamic at the speed of static.

Which is true, right?

You're giving them this dynamic content, but static isn't always necessarily fast.

So that's where it gets a little confusing.

But, that's what we're saying here.

We can have dynamic content rendered really fast.

And that's cool.

That's what we want.

It might seem like I'm pumping up Vercel here.

And, I do that a lot, but that's because I use them every day.

You can do this with Netlify, with Cloudflare.

You can do some of this stuff with AWS too, though I don't know how much, because I don't do a lot of websites on AWS anymore.

But Vercel are great.

Let's say we need more, right?

We've got a website, it's no longer static, it's rendering dynamic content, that's all fine.

But what if I need state?

What if I need a database?

What if I need more?

Can we do that on the edge?

And any of you who've dealt with databases, I'm sure there's that sort of voice in the back of your head that's like, distributed data, that's a bad idea, that's a bad idea, don't do it, don't do it, don't do it.

And you're right.

So let's look at Vercel's edge functions and I'm going to focus on their edge functions.

There's limitations with every provider that you use.

So always check them. Here you have a code size limit of 1 to 4 MB, a maximum memory usage of 128 MB, and the initial response must be sent within 30 seconds.

So if you want to talk to a database, we don't have room for a driver. We can't install a database driver, so how do we do it?

It's not possible.

Let's have a look at how that works.

So traditional server, spins up, creates a connection pool.

Uses that to talk to the database.

Obviously, it's way more complicated than that, and I'm just simplifying it, but that's like a talk in itself.

Serverless functions, on the other hand, create a connection to the database and cache it.

And then they use that cached connection to keep making further requests.

Now, this actually leads to bigger cold starts, because, if the function's not cached and it has to be spun up again, then we have to create a brand new connection to the database again.
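The cached-connection pattern for serverless functions can be sketched as follows. The `Connection` type and `openConnection` function are hypothetical stand-ins for a real database driver; the point is only that the connection lives outside the handler, so it survives between warm invocations and has to be rebuilt after a cold start.

```typescript
// Hypothetical stand-in for a database connection.
interface Connection {
  id: number;
  query(sql: string): string;
}

let connectionsOpened = 0;

// Pretend this is the expensive part (TCP and TLS handshakes, authentication).
function openConnection(): Connection {
  connectionsOpened += 1;
  const id = connectionsOpened;
  return { id, query: (sql: string) => `conn#${id} ran: ${sql}` };
}

// Lives outside the handler, so it is reused while the instance stays warm.
let cachedConnection: Connection | undefined;

function handler(sql: string): string {
  if (cachedConnection === undefined) {
    cachedConnection = openConnection(); // only paid on a cold start
  }
  return cachedConnection.query(sql);
}

// Simulates the platform discarding the instance.
function simulateColdStart(): void {
  cachedConnection = undefined;
}

handler("SELECT 1");
handler("SELECT 2");
const openedWhileWarm = connectionsOpened; // one connection, reused
simulateColdStart();
handler("SELECT 3");
const openedAfterColdStart = connectionsOpened; // a second connection was needed
console.log(openedWhileWarm, openedAfterColdStart);
```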

Now, edge functions use HTTP to make requests.

How does that work?

I'm going to start with my favorite, MongoDB.

It's great, even though it's not web scale or whatever they say.

They have loads of different drivers in lots of different languages, but you can also use an HTTP library to create, update, whatever you need with the database, right?

You can just spin up a database using MongoDB Atlas, dedicated or serverless.

Because who wants to manage a database anymore?

And then just update it with HTTP.

But say you're like, Alex, I don't NoSQL, I like SQL. Don't worry, I've got you covered.

PlanetScale.

So PlanetScale is dropping serverless MySQL, and this stuff is pretty dope.

They've gone really far and they've created a fetch based library in JavaScript just for serverless and edge environments.

And this thing is mad, right?

So when you create a request from any edge or serverless location, it connects to their nearest edge location and it uses long held connection pools in their internal network to reach the data source.

So you can use PlanetScale, and with caching on the edge, get really fast, dynamic responses from a database.

Nice and easy, and it's just beautiful.

It's beautifully fast, it's great, but there is a caveat.

Obviously, relationship between a client and a server is a lot simpler than a relationship between a server and a database.

So if I've got a page that shows me all the users in my account, right?

That's one request to the server to get all the users and one response back.

But between the server and the database, it might be two or three.

It might be, hey, I've got to check this user is still valid, has the right permissions.

Then it might be, then fetch all the users, right?

So if my compute is located here in Melbourne, but my database is in Europe, I've now got two or three trips to the EU and back, where if I just put my compute or server next to my database in the EU, it would have been one trip, and it would have been faster.
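The trade-off in this example comes down to simple arithmetic: one client round trip plus one database round trip per query. The sketch below uses made-up latency figures (they are assumptions for illustration, not measurements) to compare compute placed next to the user with compute placed next to the database.

```typescript
// Total latency = one trip from the client to the compute,
// plus one trip from the compute to the database for every query.
function totalLatencyMs(
  clientToComputeRttMs: number,
  computeToDbRttMs: number,
  dbQueries: number
): number {
  return clientToComputeRttMs + dbQueries * computeToDbRttMs;
}

// Hypothetical numbers: a user in Melbourne, a database in the EU,
// and a page that needs three queries (auth check, permissions, fetch users).
const edgeNearUser = totalLatencyMs(5, 280, 3);   // 5 + 3 * 280 = 845 ms
const computeNearDb = totalLatencyMs(280, 2, 3);  // 280 + 3 * 2 = 286 ms
console.log({ edgeNearUser, computeNearDb });
```

With these assumed figures, the compute that sits beside the database wins, even though it is much further from the user.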

But the thing is, NextJS and others have already thought of this, so they allow you to choose the type of compute you want to use per route.

Do you want edge?

Do you want serverless?

They also have this weird one that's in between, called regional edge, where you get the best of both worlds.

I'm still not quite sure how that works exactly, but hey, it means you can get your compute closer to your database, but still get some of that advantage of the edge.
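As a hedged illustration of choosing compute per route, the fragment below shows how this could look in a Next.js App Router route handler using route segment config. The file path, the sample data, and the `fra1` region are hypothetical choices; the available options may differ between Next.js versions and hosting providers, so treat this as a sketch rather than a reference.

```typescript
// app/api/users/route.ts (hypothetical file in a Next.js App Router project)

// Ask for the edge runtime for this route only; other routes can keep the default.
export const runtime = "edge";

// Assumption: pin the function to a region near the database (here Frankfurt)
// so it runs as a regional edge function instead of everywhere.
export const preferredRegion = "fra1";

export function GET(): Response {
  // Placeholder data standing in for a database query.
  const users = [{ id: 1, name: "Ada" }];
  return new Response(JSON.stringify(users), {
    headers: { "content-type": "application/json" },
  });
}
```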

Now, Deno, halfway through the first time I gave this talk, dropped a new database, Deno KV, right?

So it's built on top of FoundationDB, which powers iCloud, Snowflake, so you know this thing is battle tested.

And it allows you to store any JavaScript value.

And it also has read consistencies because you might be thinking, Hey, if I've got users over here and users all the way over there and they're reading and writing data, I'm going to get stale data, right?

At some point, I'm going to do a read and it's going to be out of date.

They're like, don't worry, we've got you covered.

So you can actually choose the consistency level when you're doing a read.

High consistency means a slower read, fresh data.

Lower consistency means a really fast read, but there's a small chance it might be out of date.
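The consistency trade-off can be illustrated with a toy model of a primary and a lagging replica. This is not how Deno KV is implemented, and the `ReplicatedStore` class is hypothetical; the sketch only borrows the "strong" and "eventual" option names to show why an eventual read can be fast but stale.

```typescript
type Consistency = "strong" | "eventual";

// Toy model: writes hit the primary immediately and reach the replica later.
class ReplicatedStore {
  private primary = new Map<string, string>();
  private replica = new Map<string, string>();
  private pending: Array<[string, string]> = [];

  set(key: string, value: string): void {
    this.primary.set(key, value);
    this.pending.push([key, value]); // not yet visible on the replica
  }

  // Simulates replication catching up.
  replicate(): void {
    for (const [key, value] of this.pending) {
      this.replica.set(key, value);
    }
    this.pending = [];
  }

  get(key: string, consistency: Consistency): string | undefined {
    // Strong reads go to the primary (fresh, but potentially far away);
    // eventual reads go to the nearby replica (fast, but possibly stale).
    const source = consistency === "strong" ? this.primary : this.replica;
    return source.get(key);
  }
}

const store = new ReplicatedStore();
store.set("status", "v1");
store.replicate();
store.set("status", "v2"); // replica has not caught up yet

const strongRead = store.get("status", "strong");     // "v2"
const eventualRead = store.get("status", "eventual"); // "v1" (stale)
store.replicate();
const laterRead = store.get("status", "eventual");    // "v2"
console.log(strongRead, eventualRead, laterRead);
```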

So this stuff is great for real-time data between users, collaboration, user authentication, user management. So many things are going to be done with this.

I'm still on the wait list, and I just want access to it so bad.

And the thing is, Vercel also dropped a whole bunch of their stuff.

They dropped config, KV, Postgres.

This stuff's built on top of Upstash and Neon and things.

They also did a blob, which is built on top of Cloudflare R2, and I'm like, why not just implement Cloudflare?

It'd be cheaper, but I'm sure they'll throw some DX improvements on the top that make it worth it, right?

What if you need more?

What if you're like, okay, Alex, you've convinced me, I've got a database on the edge, I'm using edge functions, everything's going groovy, but I need more stuff that I could do in two seconds with a traditional server.

Look, the downside of this whole serverless and edge thing is sometimes you end up needing another subscription, right?

You could do something in a second with an old school server, but because you're doing serverless, you now need another service.

And before you know it, you're up to your eyeballs in subscription costs.

Now, that is true, but I feel if you architect things properly, that's also false as well.

But always worth watching out for.

We can use cron jobs in Cloudflare Workers now.
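As a sketch of what a cron-triggered Worker might look like: Cloudflare invokes a `scheduled` handler on the schedules listed under `[triggers]` `crons` in `wrangler.toml`. The job names and schedules below are hypothetical, and `ScheduledEventLike` is a minimal local stand-in for the event type that normally comes from Cloudflare's type definitions.

```typescript
// Minimal stand-in for the event passed to `scheduled`.
interface ScheduledEventLike {
  cron: string;          // the cron expression that fired
  scheduledTime: number; // when it was scheduled, in ms since the epoch
}

// Map each (hypothetical) schedule to the jobs it should run.
function jobsFor(cron: string): string[] {
  switch (cron) {
    case "*/5 * * * *":
      return ["refresh-cache"];
    case "0 0 * * *":
      return ["nightly-report", "purge-sessions"];
    default:
      return [];
  }
}

const worker = {
  async scheduled(event: ScheduledEventLike): Promise<void> {
    for (const job of jobsFor(event.cron)) {
      const when = new Date(event.scheduledTime).toISOString();
      console.log(`running ${job} (scheduled for ${when})`);
    }
  },
};

// In a real Worker this object would be the module's default export, with
// the schedules declared in wrangler.toml, for example:
//   [triggers]
//   crons = ["*/5 * * * *", "0 0 * * *"]
const nightlyJobs = jobsFor("0 0 * * *");
console.log(nightlyJobs, typeof worker.scheduled);
```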

Piece of cake.

We can also use tools like Inngest to ship background jobs from anywhere on the internet.

It's super easy.

Okay, we're building a web app, everything's hunky dory, we've got database, we've got this, we've got that.

What if we want to just build an API?

And I know this is a front end, mostly focused content, so most of you are like, Alex, I never build just APIs, and I'm like, I do that all the time, that's my job.

We can still build that on the edge, right?

We can use tools like Deno and Deno Deploy to quickly build an API that looks exactly like something you would see in Node and Express, Ruby on Rails, Python.

Super easy, and deploy in seconds to the edge.

Now, it's worth mentioning, not all Node APIs are available in a lot of these edge runtimes.

However, just last week, two hours after I finished this talk in Oslo, Deno pushed an update and you can now run every single Node API in Deno.

That's pretty cool.

And Deno just makes it so easy to do TypeScript.

Plus, they also have this cool little art page on their website.

Deno Simpson, Pride Deno Space.

Honestly, if we chose software based on cuteness of mascot, Deno would just absolutely crush it.

Maybe you don't want to use Deno.

Maybe you're like me and you're used to things like ECS or Lambdas.

I'm going to be totally honest.

I've forgotten how to spin up an EC2 and do stuff with that.

I use these things all the time.

You can build an API, deploy it to these, and you can connect it to an API gateway in AWS.

And then you can choose certain routes and optimize them for the edge.

You can actually choose what's best.

And the thing is, Cloudflare just dropped this brand new feature, which is super cool, where you can build a whole bunch of Cloudflare Workers.

You can build an API, build whatever you want, and then they will automatically choose the best compute for each function based on where the data source is.

So you don't have to worry about anything.

You can just focus on product.

And I sit firmly in that lane.

I'm not one of those people that's like, yeah, I roll my own auth and I manage everything myself.

I'm like, no.

I just wanna ship product that my users care about.

And that's what Cloudflare is really doing with this whole serverless and edge stuff: they just allow you to focus on your product and they take away some of those hard choices for you.

So one of my key takeaways, building a web app, make it edge ready.

Chances are most of you are using Vercel or Netlify, or Cloudflare Workers or something.

Start taking advantage of it.

You can do some really cool, fun stuff.

Think carefully about where your data is located versus your compute, because that will catch you out.

But if you're using some of these modern tools maybe it won't, although I'm not too sure I would use Deno KV for my entire database, but maybe only for some stuff.

Edge computing is not a one size fits all, but that's my approach to every technology.

It's about choosing the right tool for the right job.

Because maybe you've got one of those use cases where legal reasons and other things mean your data has to be in one place.

Or maybe you have to spin up a new instance of your application for every single user because of security concerns.

So always think about that.

But if you're building an API, maybe don't start on the edge.

But you can still do a lot with Deno.

And it's pretty damn fun.

Yeah, thank you very much.

You can find me on Twitter or my website, alexanderkaran.com.

I do blog occasionally about stuff that I care about.

There's some blogs that go along with this talk as well.

You can find me around if you want to ask me questions.

And that's it.

Thank you.

Unlock the full potential of edge computing with this session. We’ll dive deep into what edge computing is, how it works, and how it can enhance the software you build.

Learn about new runtimes and frameworks like Deno and Fresh that can take advantage of edge computing. See how easy it is to deploy to the edge and understand what it means to deploy your code as close as possible to your users and the benefits it brings.

The session will give you real-world examples and use cases of edge computing so you can apply it to your work. We’ll also cover the limitations of the edge and when it may not be the best fit for your project.

You will walk away from this session with the knowledge and skills to get the most out of edge computing.