(audience applauds) (audience cheers) - All right, I guess we'll see how many shades of red I can turn. (laughs) (audience laughs) It's really intimidating 'cause all of the slides coming up yesterday, today have been awesome, the speakers have been awesome, and then all of the slide decks have been really polished, and from me, you're gonna get black slides with white text and some screenshots just to warn you. (laughs) (audience laughs) But hopefully the technical content will make up for it, and conveniently, I am not going to be talking about WebPageTest at all, but I will be here for the rest of the day, so if you have any questions, I'm happy to talk about WebPageTest all day. But I'm gonna be talking about prioritization, and so it was awesome to have Harry really, really get excited about the head, and reordering the head, and moving things in the page because that's pretty much, web performance, for me at least, from what I've seen over the last 20 years, and it hasn't really changed. It's two things: get rid of crap, reorder crap. And so once you get rid of as much as you can on the page, it comes down to, "Okay, how can I juggle all the stuff "I still have to deliver to try and get it to deliver "quickly for the user." And so that's pretty much what prioritization comes down into play, and it's changed a lot as we moved in to HTTP/2.

So really quickly, just gonna do a really broad deep dive into prioritization as it stands.

Browsers, there's really not a whole lot of complicated logic to what a browser does when it's loading things, and so the thing you need to know for the browsers, there's the preload scanner and all sorts of other stuff but the main parser is the thing that goes through your HTML one line at a time or one character at a time. Stylesheets block render, no matter where they are, usually stylesheets in the head.

And non-async script tags, so blocking script tags, usually in the head, block the parser, and so the parser can't see anything in your document until after it gets past that script tag, and it stops there until any stylesheets before it have loaded so the stylesheets become blocking as soon as you have a script tag, and it blocks until that script tag has loaded, and then it can finally go, "Oh, okay, I can actually get this script and go on to the next thing." And so if you mentally think of yourself as the parser when you're looking at your HTML, you can go through the head, as Harry was saying, and you can go and look, "Okay, it's gonna freeze here until it loads this third party tag and my CSS before it, and then it can go on." And it doesn't know anything about your DOM, which is why the head matters so much, until it's actually made it into the document. And so there are a couple of things.

So I talked a little bit about the preload scanner, it's when the main parser stops and gets paused, there's another scanner in all of the browsers these days that tears through your HTML and looks for anything that might be a URL that it might need, an image, a script, anything, and it goes, "You know what? I'm going to need this eventually.

"Let's load everything we can as soon as we can so we have it ready." But these are the things that the browser knows about. There are certain things that aren't in your HTML, I tend to refer to them as late-discovered resources. Harry had issues with certain cloud font providers, I have issues with all web fonts.

They're evil. (laughs) (audience laughs) I understand designers love them, they make sites look pretty, I'm sure there's conversion uplift but they're the most evil thing on the web, (laughs) from performance at least.

Because by the time the browser knows it needs a font, it's trying to draw the text.

And so the browser's going, "Hey, okay, I've made it through all of my script tags, I've made it through all of my stylesheets, I'm going to draw the headline in the text that you asked for.

"Oh crap, I don't have the font.

"Lemme go think about fetching that now before I can draw the text." Same for background images in the stylesheets, they tend to be less of a problem than fonts. Any script-injected content, so if you've got Google Tag Manager or any tag managers in your head, those are sort of the big culprit for these.

Anything that they inject into the document, the browser has no idea what it's going to do until it runs the code so the preload scanner can't see them, and any imports from CSS and ES6 modules and things like that that aren't webpack bundled, the browser can't see those so the preload scanner can't see those.

So I'm gonna take the most simple 1979 webpage, like before the web existed. (laughs) There are no third parties, it's all served from one origin, one HTML file, one stylesheet, four scripts, two of them are blocking in the head, two of them are down at the bottom of the document, one web font because I want to show you how evil they are, and 13 images, some of them are above-the-fold, five of them are above-the-fold, the rest of them are sort of below-the-fold, and it's kind of your typical, news content-type page if you would.

And so for scripts in particular, when we're talking about resource loading, at least, there is, I'm gonna be very opinionated, but there is an optimal way to load your scripts, and that is to load one script at a time, in the order the parser is gonna hit them, 100% of the bandwidth dedicated to each one of those scripts because that way, when you've downloaded the first script, it can start executing while you're downloading the second script, and you can sort of pipeline the downloads and the executions.

If you download both of the scripts at the same time, sharing bandwidth, then by the time the parser gets to them, it needs to execute them serially, and you end up with a later time.
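As a rough sketch of that argument, the following Python model compares the two strategies. The function names and the example timings (one second of download at full bandwidth and half a second of execution per script) are assumptions for illustration, not measurements.

```python
def shared_completion_times(sizes):
    """Completion time of each download when all of them split the link evenly.

    Sizes are expressed as seconds of download at full bandwidth.
    """
    order = sorted(range(len(sizes)), key=lambda i: sizes[i])
    times = [0.0] * len(sizes)
    now = 0.0
    received = 0.0          # what every still-active download has received so far
    active = len(sizes)
    for i in order:
        now += (sizes[i] - received) * active
        received = sizes[i]
        times[i] = now
        active -= 1
    return times


def last_script_executed(download_done, exec_s):
    """Scripts execute serially, in document order, once each has arrived."""
    executed = 0.0
    for done, cost in zip(download_done, exec_s):
        executed = max(executed, done) + cost
    return executed


def serial_strategy(sizes, exec_s):
    """One script at a time, each with 100% of the bandwidth."""
    done, now = [], 0.0
    for size in sizes:
        now += size
        done.append(now)
    return last_script_executed(done, exec_s)


def shared_strategy(sizes, exec_s):
    """All scripts downloading at the same time, sharing the bandwidth."""
    return last_script_executed(shared_completion_times(sizes), exec_s)


sizes, exec_s = [1.0, 1.0], [0.5, 0.5]
print(serial_strategy(sizes, exec_s))   # 2.5
print(shared_strategy(sizes, exec_s))   # 3.0
```

In the serial case the first script executes while the second is still downloading, which is where the half second is saved.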

So for scripts, optimal would be 100% of the bandwidth, in the order in the document, one after the other. So for our page that we were talking about, it has an overall optimal loading sequence as well if you want to get as much visible content to the user as quickly as possible, so your start render as soon as possible, your above-the-fold content as quickly as possible. I'll warn you right now, the images are gonna be very contentious.

I am a huge fan of progressive JPEGs.

Tammy, wherever you are, broke my heart (laughs) with the research study.

We still debate on how accurate it is but that, (audience laughs) users may or may not like progressive images, it may make them work harder, so depending how you feel about progressive images, you're downloading images concurrently or one at a time. So with progressive JPEGs in particular, by the time you get 50% of the way into the bytes, visually, the image is almost indistinguishable from when it's 100% and so you can get visually complete on the page about twice as quickly if you download concurrently than if you download one image at a time completely before going on to the next one.

And so on our sample page, if we load the HTML first, kinda have to, the CSS is before the script tags or the CSS is gonna block the script execution, so we wanna go ahead and load that as quickly as possible. The script tag's 100% of the bandwidth so we're going from time, left-to-right, and then vertically, it's the pipe, if you would. The Internet's nothing but tubes right? And so this is what we're shoving down the tube to the users.

The script, one after the other, so that the browser can execute them one after the other. At that point, we've made it out of the head. We're into the document, we can render something. We don't have our web font yet so we can't actually render any text but we can render the shell of the page, the color of the divs, and that kind of thing. The browser panics, fetches the web font.

We can now draw all of the text.

And then keep things simple because I suck at math. We're going to assume each one of the blocks of unit of time is one second.

So you're downloading the script for one second, the HTML for one second, so around five seconds. You've got all of your content and the text displayed. No images yet, they're still all blank.

We're downloading the images concurrently because we all love progressive JPEGs, right? (laughs) So about halfway, we're at about seven seconds, the page is visually indistinguishable from what it's gonna be when complete but even if we wanted to wait until 100% of the image bytes come in at 10 seconds, we've got all of the above-the-fold content rendered, visible to the user, and then we can run the async scripts or the scripts at the bottom of the page.

We need to run the marketing and remarketing tags, the analytics, all of the things the business is going to yell at you about if you move them too far out of the way. And then we're gonna go ahead and load all of the images that are down below the viewport.

You can lazy load them if you want.

Also not a huge fan of lazy loading, just because it ends up being one of those races where, okay, so when you lazy load, I'm more of a fan of load all of your visible content, and then load your images lazily after that but don't wait until the user starts scrolling because you end up in a race condition with, "Can you start fetching and complete fetching the image before the user gets to it?" But like I said, I'll be opinionated.

The worst case that we can do for this, is to download everything all at the same time, sharing the connection.

And so what happens in that case, we download the HTML, and then we start downloading all of the content. We don't know about the font yet because it's late-discovered.

And so we've got the scripts, the style sheets, the async scripts, the below-the-fold images and the above-the-fold images all downloading at the same time, a completely blank screen until you get to 19 seconds, at which point, everything displays except for the text because of evil web fonts.

And so the web font loads, and at 20 seconds we finally have something for the user to consume. And so the difference between those two loading strategies, we're loading exactly the same content, we're just changing how we order it, how we load it, is from about seven seconds for everything to be complete until 20 seconds, so more than twice as fast to load the content just by changing the order on how we're loading it.
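The arithmetic behind those two timelines can be written out as a small Python sketch. It uses the talk's simplification that every resource takes one second at full bandwidth; the constant and function names are made up for illustration.

```python
# Sample page from the talk; each resource costs one second at full bandwidth.
HTML, CSS, BLOCKING_SCRIPTS, FONT = 1, 1, 2, 1
ABOVE_FOLD_IMAGES, ASYNC_SCRIPTS, BELOW_FOLD_IMAGES = 5, 2, 8


def prioritized_timeline():
    """HTML, CSS, blocking scripts, font, visible images, then everything else."""
    shell = HTML + CSS + BLOCKING_SCRIPTS          # out of the head, page shell renders
    text = shell + FONT                            # web font arrives, text can draw
    visible_images = text + ABOVE_FOLD_IMAGES      # all above-the-fold bytes delivered
    everything = visible_images + ASYNC_SCRIPTS + BELOW_FOLD_IMAGES
    return {"shell": shell, "text": text,
            "visible_images": visible_images, "everything": everything}


def everything_at_once_timeline():
    """After the HTML, every known resource shares the connection equally."""
    shared = (CSS + BLOCKING_SCRIPTS + ASYNC_SCRIPTS
              + ABOVE_FOLD_IMAGES + BELOW_FOLD_IMAGES)
    first_paint = HTML + shared     # nothing renders until the blocking resources finish
    text = first_paint + FONT       # the font is only discovered at render time
    return {"first_paint": first_paint, "text": text}


print(prioritized_timeline())
print(everything_at_once_timeline())
```

With progressive JPEGs the visible images look nearly complete about halfway through their five seconds, which is where the "about seven seconds" figure comes from. Both strategies move the same 20 seconds' worth of bytes.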

Pre-HTTP/2 and the HTTP/1 days, the browser was largely the one responsible for making these decisions.

It would open up six connections per origin and the browser maintains a list in-memory of the things that it needs in the order that it needs them.

And so, at least in Chrome's case, it would basically maintain this linear list. As a connection became available for an origin that it needed, it would pick the first item off the list and fetch it.
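A minimal sketch of that per-origin list in Python, assuming a simple deque. The class, method names, and the `urgent` flag are hypothetical and only illustrate the idea; this is not Chrome's actual implementation.

```python
from collections import deque

MAX_CONNECTIONS_PER_ORIGIN = 6  # the HTTP/1 limit mentioned in the talk


class OriginQueue:
    """Ordered list of pending requests for one origin."""

    def __init__(self):
        self.pending = deque()
        self.in_flight = set()

    def enqueue(self, url, urgent=False):
        # A late-discovered but important resource goes to the front of the list.
        if urgent:
            self.pending.appendleft(url)
        else:
            self.pending.append(url)

    def next_request(self):
        """Call when a connection may be free; returns a URL to fetch or None."""
        if len(self.in_flight) >= MAX_CONNECTIONS_PER_ORIGIN or not self.pending:
            return None
        url = self.pending.popleft()
        self.in_flight.add(url)
        return url

    def finished(self, url):
        self.in_flight.discard(url)


queue = OriginQueue()
for i in range(8):
    queue.enqueue(f"img{i}.jpg")
started = [queue.next_request() for _ in range(6)]   # all six connections busy
queue.enqueue("font.woff2", urgent=True)             # discovered late
queue.finished("img0.jpg")                           # a connection frees up
print(queue.next_request())                          # font.woff2
```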

And then, as things reprioritized, like as the font comes in it knows it needs the font, it'll put it at the front of the in-memory list, and whenever a new connection became available, it'll go ahead and fetch it next in front of all of the images and everything else. One of the biggest changes for HTTP/2 was the browser no longer controls prioritization. The browser can still maintain its list and it can decide how important things are but it tells the server, "Here's all of the things I need. I'd really like it if you could send it to me in this strategy or in this order but I'm trusting you're gonna do the right thing and send it to me." And then, like the web font or like in the case of Chrome, visible images in the viewport get a higher priority than below-viewport images. It doesn't know that until it's done your layout, and so all images start at a low priority.

Layout happens and then Chrome sends a PRIORITY frame that says, "Oh, those five images I sent you before, they're high-priority now! Send them to me now!" The HTTP/2 model, extremely flexible, too much so, perhaps but as they were designing the spec they created a generic tree with arbitrary weights that the browsers can send, and they can build whatever hierarchy they want. And you can sort of see where we're going with this. All of the browsers did something completely different. This is part of the fun of the web, right? (laughs) Nothing works the same on any browser.

Chrome creates a linear list matching what it would normally have in-memory. "This is the order I'd like things in." It works great for scripts, style sheets, that kind of thing, right? We discussed scripts work best in order, 100% of the bandwidth, images, not great.

So it is serializing the images one after another but it's still generally in the, I'd say, the good camp, as far as performance goes, 'specially if you don't like progressive images like Tammy. (laughs) (audience laughs) Firefox went all in.

I mean, they love the tree, the tree was awesome! "We're gonna build this awesome structure for it!" And so they created five different groups that are placeholders that organize the different resources into a weighted tree, and so they can put lazy resources all the way over here, that only get downloaded if there's nothing that's in one of the higher priority groups. And then they have groups that are staged so that all of the resources in one group will download before any resources in the next group. And then, between two groups, like leaders and unblocked, you can have the JS over there and images over here, and the images will have twice the weight of the JS as a group.

So it has interesting sequencing that gives higher weight to more important resources but one of the biggest differences from Chrome is that, within any group, everything downloads all at the same time.
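To make the weighting concrete, here is a small Python sketch of how bandwidth would be divided between two sibling groups and then evenly within each group. The group names, resource names, and weights are illustrative assumptions that mirror the talk's "images get twice the weight of JS" description; they are not Firefox's actual values, and the sketch does not model the staged groups.

```python
from fractions import Fraction


def bandwidth_shares(groups):
    """Split the connection between sibling groups, then inside each group.

    `groups` maps a group name to (group_weight, {resource_name: weight}).
    Empty groups get nothing, so their share flows to the other groups.
    """
    active = [(weight, resources) for weight, resources in groups.values() if resources]
    total_group_weight = sum(weight for weight, _ in active)
    shares = {}
    for group_weight, resources in active:
        group_share = Fraction(group_weight, total_group_weight)
        total = sum(resources.values())
        for name, weight in resources.items():
            shares[name] = group_share * Fraction(weight, total)
    return shares


shares = bandwidth_shares({
    "images": (2, {"hero.jpg": 1, "photo.jpg": 1}),
    "scripts": (1, {"app.js": 1, "vendor.js": 1}),
})
for name, share in shares.items():
    print(name, share)
```

Every resource in a group receives data at the same time, which is the behavior that hurts scripts and helps progressive images.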

And so in Firefox, we're in a situation where all of your scripts are all downloading concurrently, all at the same time.

Not great, but images, the Firefox model's better, for me anyway, because the progressive images are all downloading at the same time.

Safari looks a lot like it was frozen in time at the point Chrome forked Blink.

And so the SPDY model was a lot simpler than HTTP/2, and what it did was it had weights from one to five, and basically the weight determined the order that things got delivered in. And so Safari basically just took those five weights, or those five priorities, mapped them to weights but everything downloads all at the same time, it's just images get less priority than scripts. So scripts will get three times the bandwidth of images but all of the scripts, all of the images all download at the same time, it's just scripts and CSS get a little more of the bandwidth.

So it still ends up being in a situation where you get most of your critical resources sooner than your low priority resources, and it's a much simpler model.

Edge, before Chrome, doesn't support prioritization at all. And so the default mode for HTTP/2, with prioritizations not supported, is everything downloads all at the same time all with the same weight, which if you remember our earlier models, is absolutely the worst thing you could do for the web. And so, very, very excited that they're moving to Chromium. Hopefully that won't be a problem anymore.

It's sort of the IE6 of web resource loading. This was a really simple model, remember, we had no third parties.

I don't know if any of you have ever seen a site that has no third parties.

I mean, even google.com has a bunch of different origins it fetches from so we're in a much more complicated world, and prioritization across connections, when you're talking about first party, third party, even static resources, for those of you who don't know, this is Mad Max Thunderdome, and that's basically what it comes down to, is the browsers throw everything on the wire and let the network sort it out.

And so now the servers have no control over it, the browser has no control over it, and it comes down to whatever the network's gonna prioritize among the different connections.

And we can do this a lot to ourselves so there are third parties.

Domain sharding, which we did a lot for HTTP/1. If you've got any static domains, odds are you're doing this to yourself.

I think I have a slide on it later.

HTTP/2, there is a way to coalesce the connection so all of those go over a single connection but you're still paying the cost of having to do a DNS lookup, in that case. So if you were ever doing domain sharding, now is a good time to back off of that.
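Browsers generally reuse an existing HTTP/2 connection for another hostname only when the certificate on that connection covers the new name and the new name resolves to an address of that connection. The Python sketch below expresses that check; the function names and example hosts are hypothetical, the wildcard handling is simplified, and exact rules differ between browsers.

```python
def cert_covers(hostname, san_names):
    """True if any certificate name matches, allowing a single-label wildcard."""
    host = hostname.lower()
    for name in san_names:
        name = name.lower()
        if name == host:
            return True
        if name.startswith("*."):
            suffix = name[1:]                      # e.g. ".example.com"
            label = host[:-len(suffix)] if host.endswith(suffix) else ""
            if label and "." not in label:         # wildcard covers exactly one label
                return True
    return False


def can_coalesce(new_host, new_host_ips, connection_ip, cert_san_names):
    """Could requests for new_host ride on the already-open connection?"""
    return connection_ip in new_host_ips and cert_covers(new_host, cert_san_names)


names_on_cert = ["www.example.com", "*.example.com"]
print(can_coalesce("static.example.com", {"203.0.113.10"}, "203.0.113.10", names_on_cert))
print(can_coalesce("images.cdn.example", {"198.51.100.7"}, "203.0.113.10", names_on_cert))
```

The second call models an image CDN on a different origin: neither the certificate nor the IP address matches, so a new connection is needed.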

Even if you're doing HTTP/1 in effort to migrate to HTTP/2, there's not a whole lot of reasons to be doing it anymore. And this is what it looks like in a waterfall. So this is a connection view waterfall, rather than a normal waterfall, and you can see, oh, this is CNN and so it's obviously way truncated 'cause I think CNN has something like 200 third parties or something ridiculous, but you can see yellow are scripts, red are the fonts. So the fonts, even though they're super high-priority, they're downloading concurrently with all sorts of other scripts.

The WebPageTest waterfalls were updated so that the dark parts of the waterfall are when data's actually downloading so you don't have, necessarily, a long dark bar from time to first byte to complete. You can see each chunk of data as it arrives and you can see the network sorting out across the different connections but you've got high-priority stuff, low-priority stuff, images, fonts, scripts all downloading concurrently. Even though both the client and the server support prioritization because HTTPoo, (retches) HTTP, poo, (laughs) (audience laughs) HTTP/2 prioritization only works on a single connection, the server can only prioritize resources that it knows about, and so the separate connections aren't prioritized against each other.

Ryan in the house! (laughs) And so even if you're using an image-optimizing CDN or the publishing platform you're using is using an image-optimizing CDN, that ends up on a separate origin from your main origin, your main resources, and now you've got your images competing with your JavaScript because they're coming from a different origin. And so I'm not saying don't use image-optimizing CDNs but if you can find a way to front them with your CDN, the same CDN that serves your origin, you'd be in much better shape because then you can prioritize your images against your scripts.

Yep, talked about connection coalescing.

So connection coalescing is a way for HTTP/2, if you have static domains, different domain names, if you serve the same certificate to both of them so the certificate name includes all of your domain names, and they resolve to the same IP addresses, then the browsers will request over the same connection. And so you can serve multiple domains over a single connection but it doesn't work if you're using an image CDN, for example, that's a different origin from your main CDN or like one of the top retailers in the US has a multi-CDN strategy where they use Akamai, Cloudflare, Fastly, they basically use all of the CDNs, and they decide at runtime, which one's cheaper or more appropriate for them to serve, or they'll serve some assets from one CDN and some from another, and when you do that, all of a sudden, you can't coalesce your connections anymore because you're actually serving from different CDNs, and so you're shooting prioritization in the foot. And so one of the things that, when I first joined Cloudflare, I was really excited and I was like, "Okay, you know fonts.

"I hate fonts.

"If it's not clear yet, I hate fonts." (audience laughs) (laughs) But Google Web Fonts, in particular, annoy me. (audience laughs) (laughs) And the main reason for that is when you use Google Web Fonts, first, you include a CSS file, which is the top arrow, from Google Web Fonts' origin in your head. So it's a third party domain in your head, so it needs to do the DNS lookup, socket connect, TLS negotiation, fetch the CSS before it can execute whatever else JavaScript you have in your head. And then, when it goes to fetch the actual font, it does it from a completely different origin, and so you'd need to do another DNS lookup, socket connect, TLS connection, and fetch for the font. And so, not only does the browser have to do the last minute panic of, "Oh crap, I don't know how to draw your text." It's, "Oh crap, I don't know how to draw your text, "and I don't know where the hell to get your font from "so I need to go figure all that out first." And so when I was started out Cloudflare, one of the first things I wanted to do was fix this problem and make Google Fonts run from your origin, so transparently proxy Google Fonts if you would, from the CDN behind.

And so I did it! And the waterfall went like crap.

I was like, "Holy shit, what the hell happened?" (laughs) All I wanted to do was move the fonts onto the origin. All of a sudden, my start render time ended up at the end of my waterfall at like nine, nine-and-a-half seconds.

And so it ended up the prioritization was actually broken on the Cloudflare side of things, and so even though I was proxying the fonts from the origin, the images, the CSS, and everything else were all coming from the same origin but they weren't getting prioritized.

The prioritization ended up randomly, and so on any given page load, you'd get resources in completely different orders, and your start render time would go all over the place.

It would be really early if you happen to get all of the head resources early but it could also be really late if it decided to download all of the content images before it downloaded the CSS.

And the browser wasn't doing anything different. This was a problem on the origins, that I'll dive into why in a second, but once we got it fixed, yay! Fast render, and (coughs) in this case, we're actually preloading the fonts as well so by the time we went to render we had all of the fonts ready, and so we could draw all of the content really quickly. I didn't feel like I had been a complete failure at that point, and I could still have a job. So we were loading Google Web Fonts as if they were origin-served so all was good. And then Zach, I don't think Zach's here, but Zach is the God of Fonts so when he started doing this, it really freaked me out. I was like, "Okay, I got Cloudflare fixed.

"All is good with the world.

"No one has to know we were broken." And then Zach moved his fonts from his origin to a separate origin to speed them up.

And I was like, "You know what? That sounds very familiar to the brokenness that I was just fixing." And it turns out his origin was also broken and it wasn't on Cloudflare.

And at that point it was like, "Oh crap, how much of the web is broken?" And so I worked with Andy on a prioritization test for the web to see how well HTTP/2 prioritization was working for any given origin.

And so there's this page on webpagetest.org where you can give it a query param of any image that is hosted on your origin somewhere, roughly around 100k image would be best, just to get the timing right so you can see the prioritization working.

I should mention I see lots of cameras.

Feel free to take pictures but on the first and last slide, all of the slides are up on SlideShare, so all of the URLs and all of the content and stuff, you don't have to memorize now.

So you pass the image URL of the server that you wanna test as a query parameter, just make sure you URL-encode it, and you submit that as a test into WebPageTest.
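URL-encoding the image address is easy to get wrong by hand, so here is a small Python sketch. The talk does not spell out the address of the test page or the name of its query parameter, so `TEST_PAGE` and `image` below are placeholders to be replaced with the real values from the slides.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Placeholder: substitute the real address of the prioritization test page.
TEST_PAGE = "https://www.webpagetest.org/PRIORITY-TEST-PAGE"


def priority_test_url(image_url, param="image"):
    """Build the URL to submit to WebPageTest; `param` is an assumed name."""
    return f"{TEST_PAGE}?{urlencode({param: image_url})}"


url = priority_test_url("https://example.com/img/hero.jpg?w=800")
print(url)

# Round trip: decoding the query string gives back the original image URL.
print(parse_qs(urlsplit(url).query)["image"][0])
```

Without the encoding, the `?w=800` on the image URL would be read as part of the test page's own query string.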

So it's very meta, WebPageTest is testing a WebPageTest URL with your image on it.

The most important thing is use a 3G fast connection. Chrome changed its logic so on really slow connections, it decided that HTTP/2 was too broken, and it's gonna do HTTP/1-style prioritization on the browser side.

So anything slower than 3G fast and you're not testing HTTP/2 anymore.

And so what the test actually does and what it looks like, is it loads that one image a whole bunch of times. First, it warms up the connection to the origin to just try and get rid of some of the TCP slow start issues, and then it will request 30 copies of that image as below-the-fold copies of the image, so really low-priority, followed by, once the first two images have been delivered, to make sure the pipe is warmed up and stuff is flowing through the connection, it will request two in-viewport high-priority images, and what you wanna see is you wanna see those two images down at the end of the waterfall.

The high-priority images interrupt all of the other images so that you know the server is reprioritizing content correctly.

And so the high-priority stuff jumped in front of the low-priority stuff.

And you can see the gaps in the data download of the existing image flow.

And so, in an optimal world, you've got the standalone warmup one-image download by itself. In an optimal world, even when your pipe is full, and you request a high-priority resource, you would still get it back just as quickly. In reality, it's gonna be a little bit slower but you're still gonna get much faster than if it wasn't prioritized.

What it actually looks like, and when things go wrong, those last two high-priority resources will end up being all the way at the end of your waterfall or somewhere lower.

What you really need to think about when it's doing this is, when this happens, you're in that really screwed up waterfall state that I had with my web fonts or you're in the case where a late-discovered resource, like a web font, is gonna be blocked on all of your low-priority resources, all of your images that are loading.

And so you'll end up with situations where you'll have 20-second blank pages or 20 or 30 seconds with no text when prioritization isn't working.
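One way to read a result from this test programmatically is to count how many of the still-pending low-priority images finished before the high-priority ones. The Python sketch below is a heuristic under assumed inputs (completion times in seconds taken from a waterfall); the function name and the tolerance of two images are illustrative choices, not part of the actual test.

```python
def prioritization_looks_ok(low_finish, high_finish, high_requested_at, tolerance=2):
    """Heuristic pass/fail for the priority test.

    low_finish: completion times of the low-priority image requests.
    high_finish: completion times of the two high-priority image requests.
    high_requested_at: when the high-priority requests were issued.
    """
    last_high = max(high_finish)
    # Low-priority images that had not finished when the important ones were requested.
    pending_low = [t for t in low_finish if t > high_requested_at]
    # With working prioritization, almost none of them should finish first.
    finished_first = sum(1 for t in pending_low if t < last_high)
    return finished_first <= tolerance


low = [float(t) for t in range(1, 31)]                 # 30 images, one per second
print(prioritization_looks_ok(low, [3.2, 3.4], 2.0))   # high-priority jumped the queue
print(prioritization_looks_ok(low, [31.0, 32.0], 2.0)) # high-priority waited for everything
```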

And so these are all examples of what we see in the wild. So this one, I'm not entirely sure, but it looked like they were just round robining across all of the resources independent of the prioritization, and you can see little chunks of data coming across no matter what the priority but the high-priority images still end up way out here. I honestly have no idea what was going on with this one. It looks like it took, maybe, the first six round robined those and went, "You know what? "I can't do anymore, I'm gonna get these out of the way." And then it just gave up and loaded one at a time. (audience laughs) (Patrick laughs) You see all sorts of weird shit when you actually look at the real Internet. And so this one looks like they downloaded half of each image, went on to the next image, and then went all the way back to the beginning and downloaded half of each image again.

But still, you have the issue where your high-priority images didn't actually download when you wanted them to.

And yeah, at this point, I just stopped trying to guess what the servers were doing. (audience laughs)

This one, it looked like it serialized the first 10 or so images, and then it went into a "download half of each at a time" kind of a model. And so we stood up "Is HTTP/2 Fast Yet?" Totally riffed off Ilya's "Is TLS Fast Yet?" And we ran that test against basically every CDN and cloud hosting provider we could think of, and no, only nine of the CDNs actually prioritized correctly or nine of the origins we tested.

The big ones, pretty much Akamai, Cloudflare, and Fastly, if you're using any of those three, you can relax. Prioritization's working, all is good with the world. This list is a little scary.

So there are a lot of big CDNs, image CDNs, Amazon CloudFront. (laughs) (audience groans) I hear a lot of groans and freaking out in the audience. Yes, that is the right reaction.

(audience laughs) (laughs) And every one of the cloud providers is broken. So yay, let's all move to the cloud! (laughs) (audience laughs) If you are a customer of one of these, my main ask, my beg of you is, talk to your account rep and make it their problem because it's been broken for a year, by the way. We stood that site up about a year ago and there hasn't been one company that has moved lists yet in that year of shame. But we have had two or three really large AWS customers, for example, working with their account reps to the point where it's on their radar but that's as far as they'll commit, which may just be a, "Quit talking to us.

"We understand but we're not actually going to fix it." So I think there could be more pressure applied, for example, or you could just front it with one of the CDNs that does work but it would suck to have to pay twice if you're already using a cloud provider or a CDN. When you're testing for this locally, and my apologies, DevTools is awesome, the one thing that's not awesome about DevTools is the traffic shaping.

I was really excited to see Harry's slide.

Harry was using Network Link Conditioner.

A lot of people have Macs.

It's free, Xcode includes a thing called Network Link Conditioner.

It does packet-level traffic shaping so the HTTP issues that we were talking about are all really low-level protocol problems that only work and show up if you're doing your connection emulation at the packet level.

Chrome's DevTools' throttling is really good for giving you an idea of, "I have a really heavy page, it's gonna kind of feel like this." But it's really bad at protocol-level stuff because the traffic shaping is done within the application, between internal Chrome guts, between the renderer and the browser processes of Chrome as it's moving data around, so it actually reads data off of the wire at wire speed.

And beyond prioritization, (coughs) that's also completely broken for HTTP/2 Push, which is the other big one.

Push ends up being completely wrong if you're using Chrome's DevTools throttling. So on Macs, Network Link Conditioner.

If you happen to be Windows-based, there's a Windows shaper? I forget, winShaper, I think is what I called it. I built a Network Link Conditioner clone, basically, for Windows, and WebPageTest does packet-level shaping.

It uses NetEm on Linux for most of the traffic shaping. And so, just to give you an idea of what it looks like in DevTools, this is the same prioritization test for a good origin where it is running the prioritization correctly.

Because of how Chrome's traffic shaping works, since it's after the data has actually arrived that it's doing the throttling, it looks like prioritization's completely broken, and so it's not giving you an accurate waterfall for what's really going on.
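For local testing on Linux, packet-level shaping is typically set up with `tc` and NetEm. The Python sketch below only builds the command line (running it needs root and shapes outbound traffic on the chosen interface); the helper name, interface name, and the rate and delay figures are illustrative assumptions, not WebPageTest's actual profile.

```python
import shlex


def netem_command(interface, rate_kbit, one_way_delay_ms):
    """Arguments for a `tc` call that adds a NetEm qdisc with a rate limit and delay."""
    return [
        "tc", "qdisc", "add", "dev", interface, "root", "netem",
        "delay", f"{one_way_delay_ms}ms",
        "rate", f"{rate_kbit}kbit",
    ]


def netem_reset_command(interface):
    """Arguments for removing the shaping again."""
    return ["tc", "qdisc", "del", "dev", interface, "root"]


# Example values only; pick numbers that match the connection you want to emulate.
print(shlex.join(netem_command("eth0", 1600, 75)))
print(shlex.join(netem_reset_command("eth0")))
```

The lists can be passed to `subprocess.run` from a privileged process once the values have been reviewed.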

The other thing to watch out for, I think I have it on the next slide, but right around the time that this happened, I changed WebPageTest's waterfall to show you the actual chunks of data arriving because of this.

So you can see how it's sending you data as far as the prioritization, is it round robin or whatever. And you can see it's fairly common, there's a really thin, dark line right at the beginning, where the server sends the headers back, and then it actually prioritizes the data so it's not unusual for the headers to arrive immediately. And historically, what that would look like is a bar like this in Chrome's DevTools where you have a really dark line for the entire time but you see, "Okay, they all seem to be downloading at the same time." But it really helps when you can see the actual data flow and when the data's arriving.

Yeah, so like I was saying, it's not unusual to see the HTTP headers themselves delivered early 'cause they aren't prioritized.

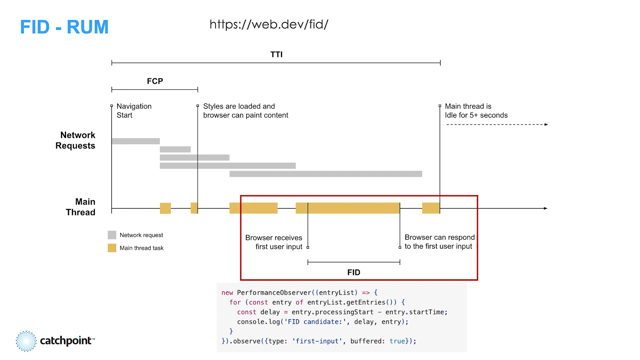

On your monitoring systems, if you are using synthetic monitoring, RUM is great! You don't have to traffic shape RUM.

RUM is what the actual users are doing.

But if you are doing synthetic testing, the things to watch out for are if they're using DevTools or Lighthouse without a custom traffic shaper outside of Lighthouse, Puppeteer, or if it's using a proxy. Pretty much any of those will not expose your network layer problems.

And this is where we dive a little lower into the networking stack.

Hopefully, you don't necessarily need to know this but I think it helps with the mental model. I don't know if anyone catches the Snowden reference. But so your HTTP/2 is largely broken or fixed at the point that the browser connection is terminated. So that's either your CDN, or your load balancer, or your server if you happen to have a server that's talking to the wild Internet.

It's wherever the connection from the browser is terminated. If you have a layer 4 load balancer, which is a TCP load balancer, it can be a combination of whatever's behind that and the TCP load balancer that are at play. And so when you're doing HTTP/2 prioritization on the server, the server itself is basically just a traffic cop.

It has a whole bunch of data coming in from the backend connection so if you had, like in this case, it's six different requests that are all bundled over the same HTTP/2 connection, it makes those all in parallel at the backend, and in theory, it has a whole bunch of data available to pick from on all of those connections when it has outbound buffer available, and it picks, "Okay, what's the next highest priority thing I need to send?" And it sends it out on the buffer and it needs to pick from what it has available. This is, of course, assuming the server supports prioritization.
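As a rough sketch of that traffic-cop decision: whenever there is room to send, pick the most important stream that actually has data waiting. The `Stream` class, the field names, and the "lower number means more important" convention are assumptions made up for illustration, not any real server's API.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Stream:
    stream_id: int
    priority: int  # assumption for this sketch: lower number = more important
    pending: deque = field(default_factory=deque)  # chunks already received from the backend


def pick_next_chunk(streams):
    """Return (stream_id, chunk) from the most important stream with data, or None."""
    ready = [s for s in streams if s.pending]
    if not ready:
        return None
    best = min(ready, key=lambda s: (s.priority, s.stream_id))
    return best.stream_id, best.pending.popleft()


def drain(streams):
    """Send everything that is available and report the order of stream ids on the wire."""
    order = []
    while (picked := pick_next_chunk(streams)) is not None:
        order.append(picked[0])
    return order
```

The scheduler can only choose among streams whose data has already arrived from the backend, which is why the buffering discussed next matters so much.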

Most do, technically, just not practically. And so where it goes wrong, is TCP send buffers. This is the problem we had at Cloudflare in particular. When your ops teams were scaling your HTTP/1 servers, the goals were to shove bits out as fast as humanly possible with as little CPU as possible.

And in HTTP/1's case there was never any contention or other things going on.

It was a serial stream, it never changed.

If you had the bits, they needed to go out on the wire. And so what happens in HTTP/2's case is the traffic cop never has anything to do. So out of the six requests that it makes on the back end, as soon as the first one comes back, the send buffer's available, it shoves the entire response into the outbound buffer.

It's not necessarily gone to the client yet, and so what you end up with is an order of responses in your outbound buffer in whatever random order your backends happened to come back with the responses. And those could be out of cache on-disk from slower backends.

It's completely random.

And the HTTP/2 prioritization has nothing to do because as each one comes in, it has outbound buffer available so it builds this enormous outbound buffer. I think, in our case, the outbound buffers were something like five megs, which is bigger than most websites so you can deliver an entire website in an outbound buffer without ever prioritizing anything. The other thing that wasn't entirely expected, which was a little surprising when we fixed it, our server metrics, the send times tanked because when we had large outbound buffers, the server thought it had sent everything almost immediately and our response times looked really fast from the server's perspective, and once we lowered the buffers to be more realistic we actually got real response times to the clients. What that means, as far as solving it on the server, there's a TCP option called TCP_NOTSENT_LOWAT. What it does is it models what the outbound connection is and how much the outbound connection can actually sustain, as far as data.

And it says, "Okay, let's keep another 10k or 15k of data above and beyond what the network actually needs for retransmits." And it can dynamically adjust that.

And so you can end up in a situation where your send buffers can still adjust dynamically but they're only as big as they actually need to be to fill the pipe.
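A minimal per-socket sketch of turning this on, assuming a Linux-style TCP stack and a Python build that exposes the `TCP_NOTSENT_LOWAT` constant. The option caps how many not-yet-sent bytes the kernel will queue before it stops reporting the socket as writable. The helper name and the 16 KB default are illustrative assumptions, not recommended values.

```python
import socket


def limit_unsent_bytes(sock, low_water_mark=16 * 1024):
    """Try to cap unsent data queued in the kernel for this socket.

    Returns True if the option was applied, False if this platform or
    Python build does not support it.
    """
    option = getattr(socket, "TCP_NOTSENT_LOWAT", None)
    if option is None:
        return False  # constant not available in this build
    try:
        sock.setsockopt(socket.IPPROTO_TCP, option, low_water_mark)
        return True
    except OSError:
        return False  # kernel rejected the option
```

On Linux the same limit can also be set system-wide through the `net.ipv4.tcp_notsent_lowat` sysctl, which is usually the more practical route when you do not control the server's source code.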

And so, all of a sudden, now the server can actually prioritize correctly.
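To make the effect concrete, here is a toy simulation (not a model of any real server) of a FIFO send buffer in front of a slow link. Response names, sizes, arrival ticks, and the "lower number is more important" priorities are all made-up assumptions.

```python
def wire_order(responses, send_buffer, link_rate):
    """Return response names in the order they finish on the wire.

    responses: list of (name, priority, size_bytes, arrival_tick)
    send_buffer: bytes the socket will accept before it is "full"
    link_rate: bytes the network drains from the buffer per tick
    """
    pending = sorted(responses, key=lambda r: r[3])
    waiting = {}  # name -> [priority, bytes not yet written to the socket]
    buffer = []   # FIFO of [name, bytes] already committed to the socket
    left_on_wire = {name: size for name, _, size, _ in responses}
    finished = []
    tick = 0
    while len(finished) < len(responses):
        # Backend responses that have arrived by this tick.
        while pending and pending[0][3] <= tick:
            name, priority, size, _ = pending.pop(0)
            waiting[name] = [priority, size]
        # Fill free buffer space, most important available data first.
        free = send_buffer - sum(n for _, n in buffer)
        for name in sorted(waiting, key=lambda n: waiting[n][0]):
            if free <= 0:
                break
            take = min(free, waiting[name][1])
            buffer.append([name, take])
            waiting[name][1] -= take
            free -= take
        waiting = {n: v for n, v in waiting.items() if v[1] > 0}
        # The network drains the buffer strictly first-in, first-out.
        budget = link_rate
        while budget > 0 and buffer:
            name, queued = buffer[0]
            sent = min(budget, queued)
            budget -= sent
            buffer[0][1] -= sent
            left_on_wire[name] -= sent
            if buffer[0][1] == 0:
                buffer.pop(0)
            if left_on_wire[name] == 0:
                finished.append(name)
        tick += 1
    return finished


responses = [("image.jpg", 3, 300, 0), ("app.js", 1, 100, 1), ("style.css", 0, 100, 2)]
print(wire_order(responses, send_buffer=5000, link_rate=50))
print(wire_order(responses, send_buffer=50, link_rate=50))
```

With the oversized buffer the image goes out first simply because it came back from the backend first. With a buffer sized to what the link can drain, the stylesheet and script jump ahead of it.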

Buffer bloat.

I heard a whole lot of stink about this five years ago when cable modems were a thing.

I was like, "Ah, doesn't matter." Turns out buffer bloat's a huge problem for HTTP/2. And so what buffer bloat is, is network devices over time have gotten much bigger buffers, memory.

And so, usually, this matters most at your last mile connection.

So in my case, I have a fiber connection but if you have a cable connection and your cable connection for your last mile is five megabit or 10 megabit, and their uplink is gigabit, you deliver a whole bunch of traffic over one connection to that cable frontend, and it can only send five megabits or 10 megabits of traffic on the last mile. It doesn't just drop any packets that are faster than that. It buffers them up for a little bit so it can keep a stream of data going out, and those buffers growing end up being effectively the same thing as the TCP buffers. The network can now absorb megabytes of data. And so that was the other problem: even if you fix the TCP buffers on the server, you need to make sure you're accounting for buffer bloat on the outbound, otherwise the network absorbs all of the data in the responses and there's nothing left to prioritize. Where this comes in: you've probably seen the sawtooth pattern of congestion control before, but probably not paid a whole lot of attention to what it really means.

But what the sawtooth really is, is adjusting for how much data the network can absorb, and the buffer bloat in particular.

And so loss-based congestion controls, this is Reno, CUBIC, the old, classic congestion control algorithms. What they do is they throw as much data as they can at the network, over time, until it looks like the network lost data, so until it looked like all of the buffers filled up and stuff started to disappear, and then it goes, "Oh crap, I sent too much." Drops down and it grows, and it keeps doing that over and over again.

But the key point is right at the beginning is, it tries and fills up the network as much as possible until data starts disappearing.

And that plays right into buffer bloat's hand, right? It's like, "Okay, let's see if we can fill all of the buffers." And you really don't wanna do that because you end up sending all of your data without being able to prioritize it.
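The sawtooth can be reproduced with a toy additive-increase, multiplicative-decrease loop. This is a deliberately simplified sketch, not Reno or CUBIC themselves: it ignores slow start and timing, and `capacity` is an assumed stand-in for how many packets the path plus its buffers can hold before something gets dropped.

```python
def aimd_sawtooth(capacity, rounds, start=1.0):
    """Return the congestion window for each round trip of a toy loss-based sender."""
    cwnd = start
    history = []
    for _ in range(rounds):
        history.append(cwnd)
        if cwnd > capacity:
            cwnd = cwnd / 2  # loss detected: buffers overflowed, back off hard
        else:
            cwnd += 1        # no loss yet: keep pushing more data into the network
    return history


print(aimd_sawtooth(capacity=10, rounds=25))
```

The bigger the buffers in the network, the larger `capacity` is, and the more unprioritized data is already in flight before the sender ever backs off.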

Newer congestion control algorithms, BBR is one of them, it's the most popular one.

There are other flavors of it.

What it does is, it keeps increasing the amount of data it sends until it notices that it's taking slightly longer for the data to arrive to the client, and then it goes, "Oh, there's probably something in the middle buffering somewhere. I've reached my saturation limit. Lemme stop sending things." The bonus there is it also doesn't have to lose data and retransmit and all of that.

It just goes, "Oh, okay, I think we've reached the limit of the actual transmit speed." Keeping the buffers as low as possible, and every now and then, it probes just to see if your connections have changed and it tries to hug things as close to the optimal throughput as possible. But what we care about here, in particular, is the buffers: it really minimizes the amount of over-buffering in the network. And so the combination of TCP_NOTSENT_LOWAT on the server and BBR congestion control need to work together to minimize the send side of buffering for HTTP/2 to even have a chance of prioritization working.
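A hedged sketch of asking for BBR on a single socket, assuming a Linux kernel with the BBR module available and a Python build that exposes `TCP_CONGESTION`. The helper name is made up. In practice this is more often set system-wide with the `net.ipv4.tcp_congestion_control` sysctl.

```python
import socket


def use_congestion_control(sock, name="bbr"):
    """Try to select a congestion control algorithm for this socket.

    Returns the algorithm name the kernel reports afterwards, or None if
    the option is unsupported or the algorithm is not available.
    """
    option = getattr(socket, "TCP_CONGESTION", None)
    if option is None:
        return None  # constant not available in this build
    try:
        sock.setsockopt(socket.IPPROTO_TCP, option, name.encode("ascii"))
        raw = sock.getsockopt(socket.IPPROTO_TCP, option, 16)
    except OSError:
        return None  # e.g. BBR module not loaded or not permitted
    # The kernel returns a NUL-padded byte string.
    return raw.split(b"\x00", 1)[0].decode("ascii")
```

Reading the value back is worth doing, because a request for an algorithm the kernel does not have fails rather than silently falling back.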

The other thing you need to worry about is, (laughs) in the case of multiple connections, the Thunderdome problem.

BBR, as a protocol, is known for being able to starve out CUBIC and other congestion control.

So if you have third party data and your origin party data coming in, over two separate connections, one of those is BBR, one of those is not, odds are the BBR one is going to use all of your pipe and starve out your other connection.

And so, if you're in an unfortunate situation where your images are coming over a connection that's BBR, and your JavaScript is coming over a CUBIC connection, for example, they lost Thunderdome, and so your experience is going to be really bad. Outbound buffers are not the only place where you have problems.

This is a big problem in NGINX, for example. When the server, the traffic cop, is managing the upstream data that's coming in, you need to make sure you have enough data to pick from to be able to prioritize.

And you get into a situation, if your buffers aren't big enough on the receive side from upstream, that you might pick five k of data from your high-priority resource before you don't have any more data in the buffer, and you go to lower-priority resources, and so you can also screw up your prioritization if you just don't buffer enough of the data available. And if you have upstream concurrency limits, can you handle the 100 plus requests that the browser sent you all at the same time? Do you make all of those backend requests or are you only round robining five or six of them at a time, at which point you can't prioritize again either? As far as the HTTP/2 implementations on the server go, H2O is awesome, Kazuho has done an incredible job with that. It's basically the gold standard for HTTP/2 implementations. Apache is also really good.

NGINX, there are issues, it's got all sorts of buffering issues that still need to be sorted out to actually be able to prioritize correctly, even if you've got the network stuff configured correctly. So as far as a site developer, what can you do? The easy answer is use one of the CDNs on the good list, right? And then you just forget about it.

Doesn't matter what's behind that, the real critical part is between the edge and the browser. You do need to worry about your networking stack or at least have your ops team worried about your networking stack, congestion control algorithm, what web servers are being used.

I pity you if you're using an appliance, a NetScaler or something like that. (laughs) The odds of those getting fixed or even support for prioritization are low to none. Right, just leave this in the slides for you later. These are the actual Linux configs for turning on a lot of those things.

Prioritization, like I said, the browser requests prioritization and it's up to the server to do whatever the browser asked for.

The server doesn't actually have to care about what the browser asks for, so you can do things if you know better than the browser, like Edge, for example, about what you can do for prioritization.

You're welcome to send back resources in whatever order you want.

So that's one of the things we worked on at Cloudflare, as well, where images, we can do concurrently, even though Chrome asks for them serially, for example. And so maybe your application there, you know what your hero images are before the browser knows they're in the viewport, and you can prioritize those higher, for example. Wouldn't be complete without talking about HTTP/3 and QUIC. All our problems are over, right? (laughs) (audience laughs) Unfortunately not. (laughs) It moves a lot of what we talked about with BBR and CUBIC, and all of the congestion control and prioritization into the application layer, so it's no longer in the networking stack of the OS, it's UDP-based. So now you're a lot more responsible in NGINX and Apache for doing, not just prioritization, but all of the congestion control and buffering. The other thing to be aware of is HTTP/3 and QUIC will never ever reach 100% availability because it's UDP-based.

Because the enterprises can't man-in-the-middle it, the easiest thing to do in an enterprise environment, for example, is to just block 443 UDP and force browsers back to HTTP/2, which they can man-in-the-middle for all of their traffic logging and everything else. And if you aren't aware, by the way, all of your companies log all of your traffic. (laughs) (audience laughs) And prioritization in HTTP/3 was originally going to look a lot like the HTTP/2 tree.

We managed to get it before the spec rolled out, and it looks like we're moving towards a much simpler model where there's five priority levels by number and a separate bit that says, "Download these resources concurrently or not." And so hopefully, with that simpler model, we'll get all of the browsers behaving more like each other and much better server implementations.
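For a feel of how small that model is, here is a sketch of parsing such a priority signal. It assumes the shape the scheme was eventually published with in RFC 9218, which differs slightly from the draft described here: an urgency `u` from 0 to 7 (default 3, lower is more urgent) and an incremental flag `i`, carried in a `Priority` header. The parser is deliberately naive and is not a full structured-fields implementation.

```python
def parse_priority(header_value):
    """Parse a Priority header value like "u=1, i" into (urgency, incremental)."""
    urgency, incremental = 3, False  # defaults when a parameter is absent
    for item in header_value.split(","):
        item = item.strip()
        if item.startswith("u="):
            value = item[2:]
            if value.isdigit() and 0 <= int(value) <= 7:
                urgency = int(value)  # out-of-range values are ignored
        elif item in ("i", "i=?1"):
            incremental = True   # OK to interleave with peers of the same urgency
        elif item == "i=?0":
            incremental = False  # deliver one at a time
    return urgency, incremental


requests = {"hero.jpg": "u=2, i", "app.js": "u=1", "footer.png": "u=5, i"}
order = sorted(requests, key=lambda name: parse_priority(requests[name])[0])
print(order)
```

A server is still free to override whatever the client sends, which is the same freedom described above for HTTP/2.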

And so if you have opinions on what to do there, join the working group.

The main takeaway I want everyone to try and get out of this, is not necessarily to know about the networking stack details, but when you see weird shit in your waterfalls, ask why. Don't necessarily hack around it, don't try and find a way to make it work.

Dig into the details because when you dig into the details, the really interesting stuff comes up.

I mean, you get scared to death about how broken the Internet is but (laughs) you can fix a lot of stuff, and you can fix your long tail and the core root problem instead of working around it for your one case. Thank you.

Wouldn't be-- (laughs) (audience applauds) - [Tammy] (laughs) That was awesome! Good, have a minute for...

- [Patrick] Darn, I didn't use up all my time! - [Tammy] (laughs) We're gonna make you answer a question, at least.

So yeah, we've probably only got time for one question, so let's see if it's-- - [Patrick] I'll be happy to talk to people in the hallways, too, though.

- Awesome.

Yeah, somebody made the observation, "Maybe HTTP/1 wasn't so bad?" (laughs) "Could we turn back time?" - I mean, it's scary but that's what Chrome did, right? - Yeah.

- For everyone, by default, basically, is if it detects a slow enough connection, it resorts to the HTTP/1 prioritization because things are just so broken in the wild. - There you go, thanks Chrome. (laughs) I was personally going to ask you a question that we can save for later on, which is like, "What's your dream browser?" So we can talk about that later on.

- I mean, Chrome does almost everything perfectly, I just want the images to be concurrent.

- I'm just more thinking like if you could design one from the ground up but that's a bigger question. "What's Pat's dream browser?" That's the one I wanna use.

Okay, so one question, and, oh, let's ask this one. "So what's the best way to test HTTP/2," it is hard to say! (laughs) (Patrick laughs) "Push? Simply avoid browser throttling and use system throttling?" - To test Push, a packet shaper, Network Link Conditioner, winShaper, but more importantly, just don't use HTTP Push. - [Attendee] Woo-hoo! Yes! (Tammy laughs) - It is broken.

There is no site ever that has been able to figure out how to get Push to work in a net positive way universally. I think Akamai has found one edge case where they can, if they know how long it takes the server to respond, and they know the available bandwidth, and how much data they can send to the user before the server's gonna respond, they can maybe push those resources without impacting the overall, but in most cases, 'specially if you're using link rel=preload in your headers, and your CDN automatically translates those into Push, you're shooting yourself in the foot.

I'm surprised we haven't been able to kill Push yet. It sounded really exciting.

It was worked into the spec at the last minute as, "Wow, this would be awesome, let's do this as well," without the proof that it works, like we needed with SPDY and everything else. But if you are using Push, and you're experimenting with Push, just stop. (Tammy laughs)

Preload works much better.

It's friendly to the browser and the browser model, and it's less likely to make things worse.
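If you do send preload hints as response headers, one way to keep them from being turned into Push is the `nopush` parameter defined for preload links. Below is a small sketch of building such a header value. The helper name is made up, and whether a particular CDN honors `nopush` is an assumption you would need to verify.

```python
def preload_link_header(url, as_type, nopush=True):
    """Build a Link header value that hints a preload without inviting a push."""
    value = f"<{url}>; rel=preload; as={as_type}"
    if nopush:
        value += "; nopush"  # ask intermediaries not to convert this hint into Push
    return value


print(preload_link_header("/css/main.css", "style"))
```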

- I like that you're just full of declarative opinions today, (audience laughs) like no web fonts, progressive JPEGs, don't do Push-- - And now you know what my perfect browser would be, right? - Yeah, there you go! (audience laughs) - Doesn't support web fonts, it doesn't support Push. (laughs) (Tammy laughs) (audience laughs) And I'm on Myspace, right? (laughs) (Tammy laughs) (audience laughs) - Awesome.

Okay, well thanks very much Pat, and he'll be around during the break so if anybody has more questions.

(audience applauds) (audience cheers) Okay!