Failure is an option

(upbeat music) - Hi, thanks for having me.

So, John's taken some of my jokes.

So that's good, it's fine.

Yeah, really brief background: I'm the Chief Product Officer at SEEK.

We basically have, so my team is over 100, which is product managers and designers.

And we've basically got somewhere in the vicinity of 23 to 25 product development teams.

So it's a lot of product development work and discovery work going on all day, every day at SEEK. And so for me and my role, one of the things that I'm always looking at is how we can be doing things better, how we can be more effective, how we can be more efficient.

And so really, there's kind of three things that I wanted to cover today with all of you. The first is talking about failure and kind of failure linked to innovation.

Failure is a real kind of buzzword at the moment. And it's something that I reflected on a lot because at SEEK, one of the things I realised is that, from business stakeholders all the way to the product teams, I found that there were a lot of cases where we were trying to be right, we were trying to always be right.

And we thought we were always right.

And that's actually an inherent problem.

And so I'll go into a little bit of that. The second thing I want to go into, and this is unfortunate, I don't know if I should even be saying this, 'cause I have a lot of team members in the room, is that often our decisions are inherently flawed. And we make lots of bad decisions, which they are now gonna use against me every time I try to tell them to do something. And then the third is, knowing all of that, I want to walk you through an approach that we've been using at SEEK as to how we're trying to tackle that so that we deliver better products for our customers.

So, who doesn't want to start with Elon Musk? So, failure is an option. If things aren't failing, you're not innovating.

So as John, rightly pointed out, I was a professional Thai boxer for about 10 years, I had 40 fights.

One of the things when I think about failure and 'cause unfortunately I did lose a couple of times, it was only a couple.

One of the things that I think is really interesting about failure, when you think about it from that perspective, is how much you learn, how much you actually learn and think deeply, when you fail.

So, anytime I won a fight, you go out you party, you eat, you drink, and then you just move on.

And you don't actually give it a whole lot of thought; you're just happy for a moment, then you kind of wonder about what's next.

When you fail, you obviously get quite sad, sometimes you cry. Sometimes you have a busted nose that makes you cry. And you get really reflective and you pull apart the fight: you pull apart what you did mentally, what you did physically. You get to the gym faster, and you start to really try and work very specifically on how you're going to win that next fight.

And so I think that's very interesting.

Because in product, it's very much the same. When you fail, you start to pull apart something that you thought was gonna be true, and then all of a sudden it proved not to be true. And we start to build out and we start to learn. So I would say failure is not even an option. It's required, and it's actually quite critical, because from a business sense, if we're not failing, it actually means we're probably not pushing the envelope, we're probably not developing our solutions as creatively as we could or as well as we could. So this was something we recognised at SEEK. And this is something that we wanted to change. It's linked to decision making, and the problem with our decision making is that it is incredibly flawed.

And why are we so wrong? So just to give a few examples, there was a study done by the American Bar Association, where they asked lawyers if they would recommend law to young students thinking about what to do next.

44% of lawyers said they would not recommend a career in law to up and coming lawyers.

There was a study of 20,000 executive searches for senior executive placements, and the success of those placements over time. Of the 20,000, what they found is 40% of these placements had either been pushed out, quit or failed during the first 18 months. And then there was a study done of corporate mergers and acquisitions.

So these are huge decisions that execs are making about where to invest and where to sell. And actually in this big piece of research, what they found is 83% of the time, these decisions did not deliver any shareholder value. And so these are big decisions involving very smart people. And yet what you find is often these decisions are not delivering the results that we thought they were going to deliver. For me, it was really humbling. Where I most noticed this is when I was at SEEK and I was looking after our small to medium hirers, so people looking for people.

We had the experience, basically the e-commerce funnel, and so the whole job was really to try and reduce friction and increase motivation, and run experiment after experiment. And what I very quickly worked out, which was so humbling, is that quite a number of times I would have these really strong hypotheses that something was gonna work, it was gonna be amazing. I actually remember there was this one test where we had these ad products and we had a big drop-off on this page, and I said, you know what, it's because we don't tell them about how great SEEK is. Feels weird to say that now 'cause you're all gonna go, yeah, sure.

It could be the price, but I was sitting there going, you know what, if we tell them why SEEK is so good and what they're gonna get out of it, this is gonna really improve.

And we put a huge amount into running this experiment and it failed, because the price is still the same, and price is probably the major factor in that decision. So through this I learned that I couldn't rely on my own instincts and had to rely on more.

So why are we wrong so often?

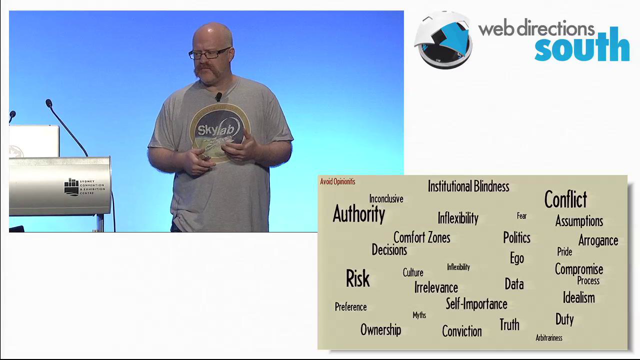

It's basically because we've got some villains in decision making.

There are four, and I'm gonna step you through them all now. Our first villain.

Apparently this is Thanos, so I found out yesterday. Apparently he's a big deal in Marvel and is a bit evil. I don't know, this is what happens when you get other people to help you make your packs pretty.

Anyway, Thanos, or as I otherwise know it, Narrow Framing. So a big problem that we tend to have is that we really, really quickly narrow our frame. And so we start to forget that actually, there was a whole bunch of options.

The way this manifests itself is when you start asking "whether or not" questions.

So you start to say, "Should we build this feature or that feature?" "Should I break up with this person or not?" And you're actually forgetting that aside from that there's a whole range of other options that you've instantly discounted.

How I've seen this play out at SEEK is we had a case where candidates can apply, well, of course candidates can apply for jobs, otherwise that would be a problem.

Candidates when they apply for jobs on SEEK, there are a number of jobs where they need to go to a company's website, so when they hit apply, they actually link out.

And often that's actually not a great experience for our candidates, and it's something that we wanted to fix. The business, and by the business I mean myself and other senior stakeholders, I will call out that I may not have been great in this situation, we had found this third party vendor solution that we thought was gonna solve the problem. And so we were getting very specific about this third party vendor solution.

We had all these conversations and all these debates about whether we should go with them or not.

Was the ROI gonna be there? How much was it gonna cost? We seriously spent months.

And then of course, commercially it didn't work. And we hadn't thought of all of the other ways that we could actually address this problem, because we were so narrow in our thinking.

And so this one can really inhibit you.

And it can really mean that we end up stemming our creativity.

And so how do you defeat this one? Well, part of it's about opportunity costs. So really stopping and forcing yourself to think about what are all the other things that I could be doing, if it's not this option. The other one is the vanishing options test. So this is really around, if you're trying to make a decision, and I turn around and say to you, actually you can't make that anymore, that's not on the table, what else have you got? Multi-tracking options is a really good idea. So when you are thinking of ideas or you are thinking of solutions, try to have two or three that you're progressing all at the same time, so it just stops you from getting too emotionally invested in one particular way. And then also looking to others who have solved similar problems.

There was a piece of research done and it was done a little while ago, because it was on buying DVDs, where people were asked, they were told to select the DVD that they really wanted and then the question that was posed to them is, would they buy the DVD or not? And in the study, what they found is 25% of people said, they weren't going to purchase this DVD.

And then they tweaked the question.

And so then they said, Will you buy this DVD? Or will you save the $15 and spend it on something else? And just by reframing that question, the number of people that said they wouldn't buy the DVD jumped from 25% to 45%.

So often, we're just not thinking about the fact that in making one decision, we're letting go of a whole raft of other decisions. Our second villain, confirmation bias.

It's a good one.

(laughs) So this is the one where we start to quickly jump to something. And then what we're doing is we're looking for all the signs and all the signals that confirm what we already believe to be true.

It means we pay more attention to the information and the analysis that confirms our beliefs. And we ignore or pay less attention to the things that don't. The good example here is, does this look good on me? The reality is, half the time, do we really want the true answer? I mean, not if it's negative, because if you didn't think it looked good on you, you wouldn't be wearing it in the first place. And so half the time, that's a really loaded question, and you're looking for the affirmative answer. At SEEK, we had an interesting example with this one. We have candidate profiles.

And so candidate profiles help hirers contact candidates proactively, and also help the search experience.

One of the things we're looking at doing is attaching people's profiles to the application form. And so our team, we had this ongoing hypothesis or assumption, we believed that people do not change their CV or their profile very often between one application to the next.

We knew it existed, but we felt like it was an edge case, where you've got someone who's working two jobs, or someone who's working a job, but they're studying for another job.

And therefore, the types of jobs they're applying for might be different. So the team started doing analysis and we started doing research, but we were doing it to prove our hypothesis. We believed we were right, and what we actually found, through both the analysis of how often things were changing on CVs and also customer research, is that people actually change it incredibly frequently. Even when it's the same type of job one after the other. They want to stand out, and so to stand out they're actually tailoring each and every application. And so in that case, we were actually just fortunate enough that we did the due diligence of analysis and research, because if we hadn't, we just would have gone and built something that actually wouldn't have worked for our candidates.

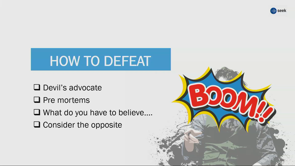

So how do you defeat this one? I actually hate devil's advocates in the room, they always frustrate me.

But actually devil's advocate is kind of the right way to go.

So whether it's yourself or whether it's someone else, but you really go, Okay, what happens if we are wrong here? What happens if it's not true? Another good example is pre-mortems.

I've done a few of these, I find them incredibly helpful.

It's basically where you sit there and go, we've rolled this feature out, we've rolled this new product out, and it tanks. Why did it tank? What are the things that we potentially got wrong? It just helps you plan for what you can do differently about it.

The other one that's always a good one is the "what do you have to believe?" So what assumptions are inherent in your thinking, what do you have to believe for this idea or this product or feature to be successful? And the final one is just consider the opposite. You will have a gut instinct, you will go a certain way, and just force yourself to spend a little bit of time thinking about what if I'm wrong? What does the opposite use case look like? I do know who Darth Vader is by the way, 'cause I know who that would be.

When I did the villains by the way, I put in, I have heroes a little bit later. And this is showing my age.

One of the villains I put in, which has obviously been removed, was the Scream character. And then I had as the hero Neve Campbell.

Obviously that's showing how old I am.

And it also got removed, but that's fine.

So, I got some feedback. Our third villain, short-term emotion.

I mean, this one doesn't actually need that much explaining. We all know this. We all experience this.

But this is basically where you let your short-term emotions cloud your judgement. It changes an answer that you gave, and we've all done this. You're short on time, you're frustrated, your team member comes up.

They're asking for advice or they're asking for a steer, and you rush it and you just give them some hurried answer, and it's actually not what you would normally get them to do.

So this sways how we approach things, it sways the decisions we make.

At SEEK, an example where I found I had this one is we were running an experiment with hirers. Basically there's a page they come to, where they choose which ad they want to purchase. So, ad choice architecture.

We have three ad products.

There's a lot of research out there that says, four choices can actually be really good as well. And so we wanted to test that model, and basically say, could we uplift conversion? And could we uplift the revenue per purchase. What happened though is when the test went live, the results started to tank a little bit.

And so in the moment, myself, the team, we all just got really nervous.

We got really nervous we got really worried about what that meant from a revenue perspective. And we kind of lost our nerve.

And so in that moment, we decided to turn the test off, and we basically chalked it up as not successful. Playing around in that area even more, what we now realise is that a lot of the time when you're changing behaviour, especially for hirers, who are used to posting frequently, there's a bit of change that you see.

So you tend to always see it dip, and then it will come back up.

So, as anyone that goes on the site can see, we still have three ad products, even though the four-ad model may actually have been the right way to go. But we made that decision in the moment.

And it's one that we still regret.
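
One guard against switching a test off during that initial dip can be sketched in a few lines: agree on a minimum run length before launch, and only judge the variant on the weeks after it. This is a minimal sketch, not SEEK's tooling. The weekly figures, the `MIN_WEEKS` value and the function names are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical rule agreed before launch: skip the first weeks,
# while hirers are still adjusting to the changed page.
MIN_WEEKS = 2


@dataclass
class Week:
    control_visits: int
    control_purchases: int
    variant_visits: int
    variant_purchases: int


def relative_lift(week: Week) -> float:
    """Variant conversion rate relative to control (0.05 means +5%)."""
    control_rate = week.control_purchases / week.control_visits
    variant_rate = week.variant_purchases / week.variant_visits
    return variant_rate / control_rate - 1.0


def settled_lift(weeks: List[Week], min_weeks: int = MIN_WEEKS) -> Optional[float]:
    """Pooled lift over the weeks after the adjustment period.

    Returns None while the test is still inside the adjustment period,
    which is the signal not to make a call yet.
    """
    settled = weeks[min_weeks:]
    if not settled:
        return None
    pooled = Week(
        control_visits=sum(w.control_visits for w in settled),
        control_purchases=sum(w.control_purchases for w in settled),
        variant_visits=sum(w.variant_visits for w in settled),
        variant_purchases=sum(w.variant_purchases for w in settled),
    )
    return relative_lift(pooled)


# Made-up numbers showing a dip followed by a recovery.
weeks = [
    Week(1000, 100, 1000, 80),
    Week(1000, 100, 1000, 90),
    Week(1000, 100, 1000, 105),
    Week(1000, 100, 1000, 110),
]

for number, week in enumerate(weeks, start=1):
    print(f"week {number}: lift {relative_lift(week):+.1%}")
print("too early to call" if settled_lift(weeks[:2]) is None else "ready")
print(f"settled lift: {settled_lift(weeks):+.1%}")
```

The point of the design is that the decision rule is written down while nobody is nervous, so an early dip produces "too early to call" rather than a cancelled test. A real experiment would also need a significance check, which this sketch leaves out.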

So how do you defeat this? I always like this one, ask what your successor would do. So sometimes we're so emotionally involved, and we're so caught up in the moment, sometimes ask yourself, if I wasn't here, and a brand new product manager walked in, what would they do? How would they think about this? Sometimes the other way to think about this is usually on a more personal level, you sometimes ask, what advice would you give if it was your best friend that was going through this scenario? So just to try and take yourself a little bit out of the emotion. Not always possible, but the second one is to try and put some distance between it.

Obviously one of the great things to do: you get an email, it riles you up, you get angry, the best thing to do is to not reply in that moment and actually let it sit for a bit.

And then the last one is the 10, 10 and 10 approach. So what this means is, you're trying to make a decision, you're trying to do something, think about how am I going to feel about this decision or this choice 10 minutes from now? How am I gonna feel about this 10 days from now? And then how am I gonna feel about this 10 months from now? It's a useful tactic, I've used it before, and it just helps give you a little bit of perspective. Our last villain, probably my favourite, to be honest.

I mean, who doesn't love him? It's great.

I get carried away by the villains.

The last one is overconfidence.

I mean, and who can forget Smeagol's overconfidence about his precious, and his ability to keep it? So this one is one that I think, if we're all being honest, we see and probably take part in a lot.

This is one where we think we know how the future is going to play out.

We believe we know what the path forward looks like. And what it means is that when you're making decisions and choices, you go into these decisions with confidence, because you believe you inherently know how it's all gonna play out and happen.

I think one of the best examples of this can often be A/B testing.

It can often be where we're running experiments, and we believe we already know how the experiment is going to go.

We had an example of this at SEEK. We had a particular test that we were running on kind of the ad packs and packaging part of the site. So it's where hirers go to look at what type of contract they want with us and what kind of products they want in that contract. The team felt that we could probably lift engagement and conversion on that page if we made it more compelling.

And also if we made it easier for them to compare and contrast.

Tested really well with customers.

So we did customer research, people loved it, they said it was way better than the existing page. So what the team did, because we were all so confident that this was gonna work, is they built the page on a new tech stack.

And they invested technically in this page, they put a fair bit of technical investment in the page because they basically banked on the fact the page was going to win and then therefore we were going to implement it.

Probably we all know the end of the story.

You run the A/B experiment, it doesn't win.

And therefore we actually don't go ahead with the page. And so what it meant for that team is that they actually, there was wasted effort there for them, which obviously they didn't love.

So how do you defeat this one? It's really about just thinking through what if this goes really wrong.

Or what if this goes really well, and thinking through the different scenarios of how that plays out? And then we've already spoken about pre-mortems. There's also pre-parades, which I just love the idea of. Imagine just sitting at your desk and there are streamers and balloons for no reason because you're pre-parading. But it's preparing for what if something goes really well, and just how that would all impact your decision making and the choices that you're putting ahead.

So they're our four villains; some were eliminated yesterday. But they're the villains, and they're in all our heads, and they impact our decision making, and it does mean that often when we're building products and services, we're not building them as efficiently or as effectively as we can.

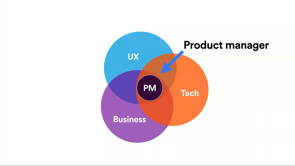

If they're the villains, then what's the kind of superpower that actually helps us get around all of these? And so for us at SEEK, what this has been is an approach called continuous discovery. So continuous discovery is essentially a robust process. I know we don't like the word process, I tried to think of another word, but it's a process that is meant to help you move quickly and be repeatable. And it's basically meant to help you decide what to build. It's basically meant to help you so that when you're building something, you're building something with a really high level of confidence that it is actually gonna meet your customers' needs. McKinsey did a piece of research with over 1000 businesses, where they looked at all the different business decisions that were made, and these were reorgs, new product releases, acquisitions and mergers. And they looked at it over a five-year period.

And what they found is that the process of how they made the decision was more important than the analysis that was done, by a factor of six.

So the process, how we're making decisions, which is something we just don't usually put a lot of effort into, actually matters so much because of all of the villains that I just spoke about before.

So what I want to do is step you through this. The way I like to think of this from an objective perspective is: imagine you're only giving your sacred resource, which is your engineers, things to build that you have high confidence are gonna meet your customers' needs.

Because you have already validated your assumptions through rapid experimentation with customers. And you've already worked out the parts or the ideas that failed.

So that's really what this process is trying to achieve. So to get into that, I wanted to introduce the opportunity solution tree. Our heroes need somewhere to hang out; they like to hang out around the opportunity solution tree, and who wouldn't?

It's a tree of opportunities.

It sounds pretty cool, right? So this is what a very simple opportunity solution tree looks like. And so what we're gonna do is we're going to step through all the different levels of the tree.

What this is really helpful for is it helps shape your thinking. All the needs that you're discovering, all the ideas you're validating or invalidating, it helps you lay out all of that thinking so that you get it clear in your own head what it is that you're doing.

It also helps from a team perspective, because people can see where different needs fit in, what's already being considered and what also hasn't been considered.

It's also very useful as a senior stakeholder. It's actually a very, very good engagement piece. Because when I get taken through opportunity solution trees, I can see the extensive thinking that's been done, I can actually understand why some needs have been deprioritised and others have been prioritised.
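
For anyone who prefers to see the shape of the tree written down, here is a minimal sketch of it as a data structure: one business outcome at the top, customer opportunities under it, and ideas with their assumptions under each opportunity. The class and field names are assumptions made for this sketch, not part of the approach itself. The outcome and the needs are the ones from the SEEK example in this talk; the priorities and the placeholder idea are invented.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Solution:
    idea: str
    # What has to be true for the idea to work; these get tested with customers.
    assumptions: List[str] = field(default_factory=list)


@dataclass
class Opportunity:
    need: str  # worded the way a customer would say it
    priority: Optional[int] = None  # planned order; None means not prioritised
    solutions: List[Solution] = field(default_factory=list)


@dataclass
class OutcomeTree:
    outcome: str
    opportunities: List[Opportunity] = field(default_factory=list)

    def roadmap(self) -> List[str]:
        """Prioritised needs, in the order the team plans to tackle them."""
        ranked = [o for o in self.opportunities if o.priority is not None]
        return [o.need for o in sorted(ranked, key=lambda o: o.priority)]


tree = OutcomeTree(
    outcome="Increase hirer satisfaction score",
    opportunities=[
        Opportunity("Let me know when something I need to know about happens", priority=2),
        Opportunity(
            "It's hard work assessing and selecting candidates to interview",
            priority=1,
            solutions=[
                Solution(
                    idea="(placeholder idea)",
                    assumptions=["(placeholder assumption to validate)"],
                )
            ],
        ),
        Opportunity("I'm worried my job advertisement is falling"),  # on the tree, not prioritised
    ],
)

for position, need in enumerate(tree.roadmap(), start=1):
    print(position, need)
```

Keeping unprioritised needs on the tree, rather than deleting them, is what lets a stakeholder see what was considered and why it was set aside.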

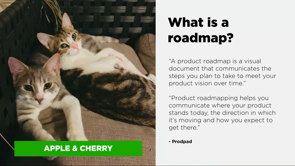

I know there was some amazing conversation this morning about how much everybody loves roadmaps. You can actually use this as a roadmap.

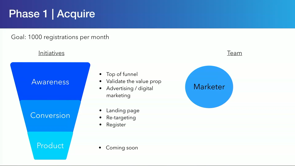

I've got teams that use this as a roadmap, and basically all they do is number the customer opportunities, so then I know what order they think they're tackling the customer opportunities in. So, starting with the top of the tree. Step one is agree on your desired business outcomes. Now at SEEK we've got fully embedded OKRs.

So this is really around aligning on OKRs.

But it doesn't have to be.

Did you love the animation? This is obviously not me, this is just people having fun with me now. It doesn't have to be OKRs.

It doesn't matter if your business doesn't have OKRs, but get specific about what customer outcome it is that you're actually trying to achieve. It's important because if you're too vague, your tree will end up having like related and unrelated needs in there and solutions in there and it'll get messy, and it'll kind of distract your thinking a little bit. So I'd really encourage you if you're going to do this to really try and focus on what is that customer outcome that we're trying to achieve.

So in this case, we've got a SEEK example.

Just highlighting, what's not a good objective for us is to drive use of a product.

It's very vague, and why are we doing that, like what's in it for the customer? So really here the objective we used was helping small to medium business hirers efficiently identify suitable candidates to interview. And the key results we were looking for were increase the hirer satisfaction score and increase the shortlist rate.

So the rate at which hirers were shortlisting candidates. So for the purposes of the tree that I'll walk through, we actually use increase the hirer satisfaction score there at the top of the tree as the business outcome. So you've got your desired business outcome, it's awesome. Step two is probably one of the hardest steps I would say. And it's definitely taken us a little bit at SEEK to try and wrap our heads around it.

This is identifying the customer opportunities, and how do you do this? You do this with really regular customer interactions. Now, I know we all love to say that we interact with customers and I know we all do, but in this model, Teresa Torres, who's a big advocate for this model and came up with a lot of it, basically advocates that you're engaging with customers every single week.

So every week you're engaging with like four to five customers and you're having interviews with them.

Tough, right?

It's hard, like recruiting people to talk to is hard, trying to fit that in the schedule around all the meetings. But basically, what you're trying to do here is you're trying to have really regular, consistent contact points with customers, and you're interviewing them. So you're not doing usability research.

You're not doing something more structured, trying to walk them through a product or idea. You're actually being more open-ended and you're asking them to tell you about a time, like in our case, talk through a time when you last had to hire somebody.

What did that feel like? What did you go through? Because again, if you think about the villains of decision making, you're trying to not start to narrow because if you start to narrow, you're leading them down a certain path.

So let's say we haven't got to weekly yet.

We've probably got most teams operating on every fortnight out to kind of every four weeks, and it's something that we're continuing to work on. Basically what you do is you're out there, you're meeting customers, you're obviously documenting what they're saying, you're starting to map what are the different opportunities that you are hearing from them. What are these different needs that you're hearing? And obviously, then you keep adding to these over time. So once you start your tree, if you're staying on the same business outcome, this can be a long-running tree, this could be weeks, even months in some cases, and you just keep adding to it. One of the things I would say is, definitely for us, we're getting insights that we wouldn't have otherwise got, for sure. Like there's just no doubt about it.

Like just being more open and being more frequent. We're absolutely hearing things that we didn't know or didn't expect.

I think an interesting example of this: we've got a team that looks after our talent search product.

So the talent search product is where all the profiles sit, and hirers can basically go and they can look for relevant candidates and they can reach out and they can connect with them. The team were looking at doing some integrations with third party systems, and so the team were going and doing some research around this. And what was really interesting for them is what they found: we actually have an existing integration with a partner at the moment to access this product. And we think it's rubbish, which is sad, because I actually was a product manager on the integration a number of years ago.

I try not to tell people in my team that.

To be fair, it was time-boxed, I had to move on to something else.

Anyway, we won't get into it.

(both laugh) and can't tell if they've done that a few times now. But we think the integration is rubbish, because it was time-boxed, we did a kind of MVP integration. We don't think it's that helpful.

In actually going and meeting with these customers, and we weren't even asking about this integration, what we found is that they actually love it. They love it, they find it really useful because they've got this efficiency need.

There's this need to be able to access candidates faster and message them faster.

And actually, even though we think it's rubbish, they actually love this integration, and so therefore it started to flush out these needs, and the fact we were already starting to solve this need, and how we can expand on it.

One of the questions you often get with this is what happens with needs that I didn't hear from a customer.

So what happens with needs that I've found through analysis or someone in the office, or I just have a gut instinct, 'cause we all do that every now and then, you can put them on the tree.

What I'd recommend is if you're putting them on the tree, you word them from the perspective of a customer. So you put it into customer language, like what is the customer need.

'Cause sometimes you'll actually find in doing that, it's hard to do because it's hard to hear a customer articulate that need, which is probably starting to raise the question, is that actually a need? And then the other part is, then you start to do some customer interviews and discovery where you basically try to validate, is that a real need or not? We had an example of this, again going back to ad products. Obviously we do a lot of work on ad products, they're very important to us.

With one of the teams that manages them, we've often had these discussions around the idea that one need is that hirers need help when they are trying to choose what ad product to use. They need more help for that particular role, some insights as to how the ad's likely to perform and what we would recommend.

So we've always in the back of our mind for years, we've always felt like that's a need.

They need more information, they need more help. So the team put it on the tree.

They went out and started doing research.

And actually what they found is it wasn't really a very high need.

Actually, what customers said is, I'm quite happy to make a choice based off the contract I've got, or actually, I'm just happy to make a choice based off the price and the table where you show me what I get and what I don't get, I actually don't think I need any more than that. And so the team then deprioritised that on the tree and moved on to higher customer priorities. And that was a fantastic one, because that is one that senior stakeholders, as you can imagine, felt was really important. And so it was a great way for the team to be able to demonstrate they'd taken that on board and explored the idea, but actually didn't get too far into it, because they were then able to say, actually, based on customer feedback, it's not that valuable. So in our example, we've got increasing the hirer satisfaction score. So the three needs that we identified, and there were more than three by the way, there are a lot more than three:

The first one: let me know when something I need to know about happens. Obviously, this has to do with my job and not just about anything.

The second one: it's hard work assessing and selecting candidates to interview, which I'm sure anyone that has had to do this would agree. And the third one: I'm worried my job advertisement is falling.

So it's losing prominence, it's losing attention. Therefore, I'm not gonna get a great outcome. So, once you've got these, the next step is to size these and pick one. So in doing this, if we take the three examples that I had, now, this is where you can get a bit flexible, this is like normal kind of roadmap prioritisation. You can use the factors that are relevant to your organisation.

In this case, we use customer value, customer satisfaction, and alignment to strategy.

What I would say is, at this point you're not using technical effort, 'cause you're trying to not constrain your thinking too early by what's feasible and what's not. So at this stage, I wouldn't be using technical effort to try and identify what opportunity to focus on. So you've got your business outcome, and you know which customer opportunity to focus on. While this is all going on, you're still continuing to meet with customers every week or every fortnight and build out your understanding of them.
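As an illustration only, the sizing step described above can be sketched as a small weighted-scoring exercise. The weights, the 1-5 scale and the individual scores below are all assumptions made up for the example; the talk names the factors but gives no numbers. Note that technical effort is deliberately absent from the factors.

```python
from dataclasses import dataclass

# Hypothetical weights: the talk names these factors but not how they are weighted.
WEIGHTS = {
    "customer_value": 0.4,
    "customer_satisfaction": 0.4,
    "strategy_alignment": 0.2,
}


@dataclass
class Opportunity:
    name: str
    scores: dict  # factor name -> score on an assumed 1-5 scale

    def weighted_score(self) -> float:
        # Technical effort is intentionally not a factor at this stage.
        return sum(WEIGHTS[factor] * self.scores[factor] for factor in WEIGHTS)


def pick_opportunity(opportunities):
    """Return the single highest-scoring opportunity to focus on."""
    return max(opportunities, key=lambda o: o.weighted_score())


# The three needs come from the talk; the scores are invented for illustration.
opportunities = [
    Opportunity(
        "Let me know when something I need to know about happens",
        {"customer_value": 3, "customer_satisfaction": 3, "strategy_alignment": 4},
    ),
    Opportunity(
        "It's hard work assessing and selecting candidates to interview",
        {"customer_value": 5, "customer_satisfaction": 4, "strategy_alignment": 4},
    ),
    Opportunity(
        "I'm worried my job advertisement is falling",
        {"customer_value": 3, "customer_satisfaction": 4, "strategy_alignment": 2},
    ),
]

chosen = pick_opportunity(opportunities)
print(chosen.name, round(chosen.weighted_score(), 2))
```

The point of the sketch is the shape of the decision (several needs, a few agreed factors, one winner), not the particular numbers.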

But now you get into the fun part.

Now you get into ideating, validating the assumptions and using experimentation.

So this is where if you think about all those villains of decision making we talked about, this is the part that's actually quite critical. Because this is the part where often what happens is we think we've got a lot of ideas.

But actually what we have is, we usually have like one or two ideas per customer opportunity.

If you actually map out all the ideas you've got, they're usually trying to solve slightly different problems. And so what you really want to do here, to stop yourself from narrow framing, to stop yourself from overconfidence and confirmation bias, is you want to do a lot of ideation. So the recommendation here is you try to come up with 10 to 15 ideas on how you would address that one customer opportunity. And that's a lot.

What I would definitely recommend is you don't do it in one sitting, 'cause it just won't work.

Get the team involved.

One of the ways we've seen this work well is you do it over a few days or a week, and each day you pick 15 minutes, and you just ideate and come up with all different ideas and solutions. Another recommendation: often we do a lot of ideating vocally with our teams, but sometimes it can be really helpful to do it silently, 'cause then you stop a little bit of the groupthink and the confirmation bias that can start to creep into teams. Here you're really trying to come up with a lot of distinct ideas, and don't cheat yourself. Don't come up with ideas that are a slight twist, but actually it's the same idea.

Just with a different font or colour. Try and come up with distinct, different ideas. So in this example, I've then expanded on this a little bit. So we've moved from "It's hard work assessing and selecting candidates to interview."

One of the next needs we identified is I'm looking at a list of candidates.

Help me choose which ones to look at.

And so then from here, and there was actually about 15 ideas, but three that I thought I'd walk through.

So one was we've got something we call date match, which is basically an AI algorithm that can predict for any given job, the candidates that are likely to be a good fit for that job with pretty high confidence.

So we had an idea around what if we badge candidates to say that we think they're a good fit? Or what if we sort candidates, so we sort them by the threshold of being a good fit versus not.

Another idea was, well, we filter out low match candidates. So we actually just don't show them.

We put them in another part of the experience, and actually we only show the ones that are above a certain threshold.

And then the other idea was, well, we add extra roles to the candidate card. We know the roles somebody's done are incredibly important. What if we just add more roles to the candidate card, so then when you're comparing and contrasting, you've got more information to do it?

And so you can see here, the team has decided to focus on date match, badging and sorting.
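To make the difference between the badging, sorting and filtering ideas concrete, here is a minimal sketch. It assumes each candidate already has a match score between 0 and 1 from some model; the `Candidate` type, the `MATCH_THRESHOLD` value and the function names are hypothetical and are not taken from SEEK's product.

```python
from dataclasses import dataclass

MATCH_THRESHOLD = 0.7  # hypothetical cut-off for "likely to be a good fit"


@dataclass
class Candidate:
    name: str
    match_score: float  # assumed to be a 0..1 score from a matching model


def badge(candidates, threshold=MATCH_THRESHOLD):
    """Idea 1: keep the list as it is, but flag likely good fits."""
    return [(c, c.match_score >= threshold) for c in candidates]


def sort_by_fit(candidates):
    """Idea 2: reorder the list so the strongest matches come first."""
    return sorted(candidates, key=lambda c: c.match_score, reverse=True)


def split_low_match(candidates, threshold=MATCH_THRESHOLD):
    """Idea 3: show only candidates above the threshold; park the rest elsewhere."""
    shown = [c for c in candidates if c.match_score >= threshold]
    elsewhere = [c for c in candidates if c.match_score < threshold]
    return shown, elsewhere


candidates = [Candidate("A", 0.9), Candidate("B", 0.4), Candidate("C", 0.75)]
print([(c.name, flagged) for c, flagged in badge(candidates)])
print([c.name for c in sort_by_fit(candidates)])
shown, elsewhere = split_low_match(candidates)
print([c.name for c in shown], [c.name for c in elsewhere])
```

All three variants rest on the same score, which is why they are distinct ideas for the same need rather than the same idea with a different colour.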

Again, what I would say with this is ideally at this point, so you should always really be trying to focus on one customer need at a time.

But once you're looking at that one need, you should ideally look at a few ideas in parallel. Because that stops you again, that stops you from narrowing in too much.

It stops you from getting too emotionally invested in one particular idea or getting overconfident that that idea is going to work.

So, remembering they're working with a few at a time here.

But basically what you do from here is you then take the idea and you flesh it out a little bit.

And in particular, what you're fleshing out is what assumptions underpin this idea. Why do I think this idea is gonna work? What has to be true for this idea to work? And what you're trying to do in how you map your assumptions is you're trying to map them by importance. So basically, if this assumption is wrong, is the whole idea done? And also, by your data points.

Do I have an easy way within the building to work out if this assumption is true or not? As you can see in this case, I've highlighted a few that were basically the big assumptions for this idea. So this idea was: when you log into SEEK's advertiser centre and you look at your candidates, we're gonna badge the candidates if they meet a certain threshold, which means they're likely to be a good fit. So of the big assumptions we had, one was that hirers actually notice it. Do they even know what we've done, and does it make sense to them?

And then the second: hirers actually then assess these candidates first, because if they notice it but they still go on with their normal process, we actually haven't changed anything.

And then the third big assumption, and anyone that deals with AI algorithms will definitely agree with this one: that hirers actually trust the algorithm, that they actually believe that we're right.
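One way to picture the assumption mapping described above is as a small table with two axes: how fatal it is if the assumption is wrong, and how much evidence is already available. The sketch below is an illustration only; the 1-5 scales, the numeric ratings and the fourth assumption are invented, and only the first three statements come from the talk.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    statement: str
    importance: int  # assumed 1-5 scale: 5 means the idea is dead if this is wrong
    evidence: int    # assumed 1-5 scale: 5 means strong data already exists in the building


def riskiest(assumptions, min_importance=4, max_evidence=2):
    """Return important, poorly evidenced assumptions, most urgent first."""
    picked = [
        a for a in assumptions
        if a.importance >= min_importance and a.evidence <= max_evidence
    ]
    return sorted(picked, key=lambda a: (-a.importance, a.evidence))


# Ratings are illustrative; the last assumption is a made-up low-risk example.
assumptions = [
    Assumption("Hirers notice the badge and it makes sense to them", 5, 2),
    Assumption("Hirers assess badged candidates first", 5, 1),
    Assumption("Hirers trust the algorithm", 5, 1),
    Assumption("The badge fits on the candidate card", 2, 4),
]

for a in riskiest(assumptions):
    print(f"importance={a.importance} evidence={a.evidence}: {a.statement}")
```

Whatever comes out at the top of this list is what gets an experiment first.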

And we've had some really interesting examples with this. So then you take your biggest assumptions, the ones that, if they're wrong, basically mean the idea will fail, and you then work out:

Okay, so what data do I need to prove whether this assumption is going to be true or false? What I'd really recommend with this, and it's hard work and it takes a bit of time. Don't be vague on this.

Don't just say, "Oh, if I get 10% adoption," because then what happens if you get 9% adoption? If you get 9% adoption, are you going to stop proceeding? Is it gonna be a failure or not? Force yourself to think about what that threshold is, where if it goes below that, you're probably considering this idea as failed. And then think about, well, how am I actually gonna collect that data? And this is where it gets really fun and really exciting, because this is where you actually look at all the experimentation that you can run. And remember, you're trying to do this as much as possible with very little to no engineering effort.
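The "decide your threshold up front" advice can be sketched as a tiny record that is fixed before the test runs, so a 9% result against a 10% bar cannot be quietly reinterpreted afterwards. The class name, metric wording and numbers here are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the bar cannot be moved after it is agreed
class SuccessCriterion:
    assumption: str
    metric: str
    threshold: float  # agreed before the experiment is run

    def verdict(self, observed: float) -> str:
        # At or above the agreed bar we proceed; anything below counts as failed.
        return "proceed" if observed >= self.threshold else "failed"


criterion = SuccessCriterion(
    assumption="Hirers notice the badge",
    metric="share of hirers in the test who engage with badged candidates",
    threshold=0.10,  # hypothetical value
)

print(criterion.verdict(0.09))  # below the bar
print(criterion.verdict(0.12))  # above the bar
```

Writing the threshold down first is the whole design: the comparison itself is trivial, but it removes the temptation to argue that 9% is close enough.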

So you are not trying to run AB experiments on site to prove this out, you are trying to not build these solutions, because you are still in this phase of trying to determine if these solutions are even worth the investment. So there's a whole range of ways that you can do this. And this is by no means exhaustive.

I wanted them to be heroes, but they're not, it's okay. I'll move on. But you've got things like usability interviews, actually just going and speaking to people about it. You've got paper prototypes, you've got mock-ups that you can do and walk people through.

You've got fake door tests.

So putting a test on a site: they click on it and you say it's coming soon. You're just wanting to gauge interest. We've been using all of these, and another one that we've been using a lot is email campaigns.

And you can use email campaigns to kind of generate interest.

A few examples of these that I thought I would share. So one is, again, from our talent search team.

So basically, when you post a job at SEEK, you get free access to talent search database, and you get a certain number of credits that you can use to connect with a certain number of candidates.

But the usage of it for small to medium businesses is quite low.

And obviously, that's something that we want to change because we think it's great value. So the team were really thinking through: well, part of the problem is we don't promote it enough.

It's not enough in their flow.

It's not enough in their experience.

If we put it in the points where they're gonna make a decision, we can uplift this conversion.

And so one of the places that we thought through is, okay, well what about when they've just finished posting a job ad. We know they've got a need, we know they want somebody and they've just finished advertising.

So it's really top of mind in the experience. You're a little bit like, how could that not work? And in past days, we just would have gone on and built an experiment and run it. In this world, we don't do that anymore. What the team did is they built out some prototypes, and they went out and sat with users, and they walked through it and talked through the experience. And actually, what they found is that hirers, when they post a job ad, fun fact, are the most optimistic.

They're really optimistic when they post a job ad that this job ad is gonna work and that they're gonna find the right candidate. So at that point in time, because of that mindset, they're actually not interested in proactively reaching out to candidates, 'cause when you proactively reach out to candidates, a lot of hirers feel a bit awkward about it, 'cause you've got to message and go, "Hey, I noticed you, are you interested in me?" It's a bit weird, right? You would just prefer applications.

And so then we dismissed this idea, because based off the feedback we saw, it wasn't gonna work.

Another example would be that algorithm that I talked about where we can basically predict if you're likely to be a good fit or not.

We wanted to see if people trusted it, and if it helped shape their decisions.

And so what we did is we did an email campaign. We ran the algorithm, we manually pulled out who the right candidates were, we put it into an email, and we basically sent it to these hirers.

It wasn't in the flow.

It obviously wasn't integrated.

It wasn't beautiful.

But we basically said, for this particular job, here are five candidates that we think you should look at, based off our algorithm.

And it was a very small sample size, but what we found on those examples is that people actually commented on it changing their decisions. People actually said, "Yes, I went and had a second look at these candidates." And so again, it's little tests like that, that are basically helping us to prove: is it worth pursuing what we're thinking of pursuing? Should we keep going? So the hard part about this is you hear it all, and it feels hard.

It feels like it's gonna take a long time.

And that is the real challenge in this.

What I will say to you, and I've actually seen teams doing this, is once you get momentum, and if you stay diligent to it, this should actually be a very fast process. Once you get your rhythm of booking in customer interviews and customer research, you've got your regular customer research, you've got your trees. Once you've started your tree, you're basically mapping new opportunities onto it.

You force yourself every week to be pulling apart an opportunity and to be quickly ideating.

A lot of this is about trying to set time boxes for yourself.

So not spending weeks on this.

I think the interesting part about this is sometimes what you're looking for is directional information that you're going in the right direction, not statistically significant information or the best analysis in the world.

So a lot of how you can be fast is you time box it and you just build it into your weekly schedule. So you know on this day, we've got customers coming in, we're gonna do interviews. Straight after that, we're gonna spend time mapping out the tree and working out what's different about that. And then on the next day, we've got ideation, and we're gonna do ideation over the next few days, and then we're going to work out the experiments we've got to run.

So what I'd say is, this can be a really fast process, but it's a little daunting in the beginning, 'cause it feels like it's a lot.

And what it should help you do is it should help you fail fast, because as opposed to failing after the fact, once you've built something, once you've done all that work of taking it to market and then realising it doesn't work, you realise this all super early, and it helps you focus your effort on the things that are actually going to work. So, recap.

Agree a desired business outcome.

Map your customer opportunities.

And then ideate lots of ideas, lots of ideation, and really fast, cheap experiments to prove whether you're on the right track or not. Just to put it in perspective, this is an example of an opportunity solution tree. Obviously, you can't read it, I can't read it. But I wanted to show it because what you can see is all the different branches starting to branch off. And what will happen sometimes is when you're meeting with customers, you'll be focusing on a certain business outcome, but you start to hear needs that don't relate to that business outcome, but they relate to something that's in your domain. So then in some cases, what you do is you start a new tree. So some teams will have two or three different trees, with all sorts of branches coming off the back of them. But this is a real tree.

This is in our loyalty space.

This is around how we help hirers find the right candidates. And then this is part of what it looks like up close. So you can see you start from a need (from the business outcome, sorry), you go into the needs, and sometimes what you'll find is that the needs then go into sub-needs.

So you start with this kind of a bigger need. So good example would be I didn't get enough suitable candidates.

And so then you could say in this case, it's broken into let me know when my job ad isn't performing or how can I find more suitable candidates? 'Cause sometimes it's the job ad.

And sometimes it's the market.

And then you can see from there, this is obviously one that they've worked on from an ideation perspective.

You can see they've obviously gone and come up with all the different ideas.
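For readers who cannot see the slide, the shape of an opportunity solution tree as walked through above (business outcome, then needs, then sub-needs, then ideas) can be sketched as a simple nested structure. The `Node` class and `render` helper are hypothetical, and the solution and experiment labels are placeholders, since the talk does not name the ideas on this branch.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    kind: str   # "outcome", "opportunity", "solution" or "experiment"
    label: str
    children: list = field(default_factory=list)

    def add(self, kind, label):
        """Attach a child node and return it so branches can be chained."""
        child = Node(kind, label)
        self.children.append(child)
        return child


def render(node, depth=0):
    """Return the tree as indented lines, one line per node."""
    lines = ["  " * depth + f"[{node.kind}] {node.label}"]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


tree = Node("outcome", "Help hirers find the right candidates")
need = tree.add("opportunity", "I didn't get enough suitable candidates")
need.add("opportunity", "Let me know when my job ad isn't performing")
sub_need = need.add("opportunity", "How can I find more suitable candidates?")
idea = sub_need.add("solution", "Placeholder idea A")      # hypothetical
sub_need.add("solution", "Placeholder idea B")             # hypothetical
idea.add("experiment", "Placeholder prototype interview")  # hypothetical

print("\n".join(render(tree)))
```

Needs heard in interviews that do not fit under the current outcome would simply become the root of a second `Node` tree.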

So what to take away? Failure is good, we should be failing.

If we're not failing, it's a worry.

We just got to make sure we're trying to fail in the right way.

And we're trying to fail as cheaply and fast as possible. I hate saying it, but we all make bad decisions, and we make bad decisions a lot.

It's not our fault.

It's just unfortunately, we're inherently biassed. And so really, what you wanna try and do is you wanna try and build it.

It doesn't have to be exactly like this.

But just think about how you build a bit of an approach or a bit of a process around your decision making, to stop your biases creeping in.

A continuous discovery approach can really help you work out what to build cheaply and quickly.

It can save your engineering effort for the things that you actually have really high confidence are going to work.

Agree your outcome.

Get into a regular cadence with customers, and focus on just some exploratory, general interviewing. You can still do all your other forms of research, but actually just focus on getting them to tell you stories, getting them to tell you experiences.

I saw this great example when I was at a conference. They got someone up and they said, "How many times do you go to the gym?" And they go, "Oh, five times a week." "Okay, how many times did you go to the gym last week?" And they said, "Two." And then they're like, "Okay, why?" "Well, I had work, and I had drinks, and I did this, and I did that."

And then they said, "What about the week before?" "Two."

And so you start to see that sometimes when you're asking questions, people give you their ideal answer.

They give you what they believe, what they're aspiring to do, not what they're actually doing.

And so for building products, that can get dangerous. And so what you can do in interviewing is just probe a bit further. Probe about the last time they hired somebody, or the last time they looked for a job: what is it that you actually did? What tools did you use? All those types of things.

'Cause you often find it can be different.

Get into a really regular rhythm of that.

Use the trees, we use the trees, it's all about trees and branches at SEEK.

It's a little bit weird, but use the trees. (both laugh) Use the trees, map your opportunities, prioritise your needs, make sure you're only focusing on one need at a time, try and stay focused.

But then ideate.

Ideate a lot, get creative.

You can do that if you're not spending engineering effort on all of those ideas, you can actually get pretty creative, if you know that you're just gonna find creative ways to test it before you decide actually what to invest in or not.

And the nice part for me is, I remember, back in the old dark days of product management, where it was like too hard to AB test.

We just had to build it.

And you'd sit there, and people had spent months on stuff, and you're like, shit, I don't know if this is gonna work. Like, I hope it's gonna work, but it may not. And sometimes you were lucky, right? Sometimes it worked and sometimes it didn't. The nice part about this is that when you move into build, when you move into delivery of something, you can actually have pretty high confidence that to some degree it's going to work, because you've validated it.

Some further reading on this topic: Teresa Torres, she and some other coaches really pioneered this. Her blog is amazing; if you don't follow it, it has lots of tutorials, lots of posts, and she's got templates on all of this stuff.

What I would say is, even if this feels a little bit overwhelming, maybe just pick a couple of parts that you think you could add to your process, your approach, how you go about doing things.

Marty Cagan is also a massive believer in this, and he talks about this a lot as well.

And Decisive, a book by Chip and Dan Heath: they talk about the villains of decision making. Why we're flawed, lots and lots of examples. It makes you quite sad.

It makes you never want to make a decision again. So I'd encourage you to get it and not make any, 'cause who doesn't want a product manager that won't make a decision? And that's me done.

Thank you so much.

(audience applause) (upbeat music)

Humans are really bad at failure. We become emotionally invested in our decisions, and good old confirmation bias reassures us we’re on the right track. Even when we really have no idea where we are.

Modern aircraft though are literally always off track. Which is why they constantly course correct.

Continuous discovery is a structured product management framework for how we understand customer needs & then rapidly generate and test multiple distinct ideas to validate how best to address these needs.

It’s about identifying your riskiest assumptions, and how to test them.

In this session, Chief Product Officer at SEEK, Nicole Brolan, will show us that not only is failure an option, but if we’re not failing, we’re not innovating enough.