The illusion of “The Illusion of Thinking”

March 9, 2026

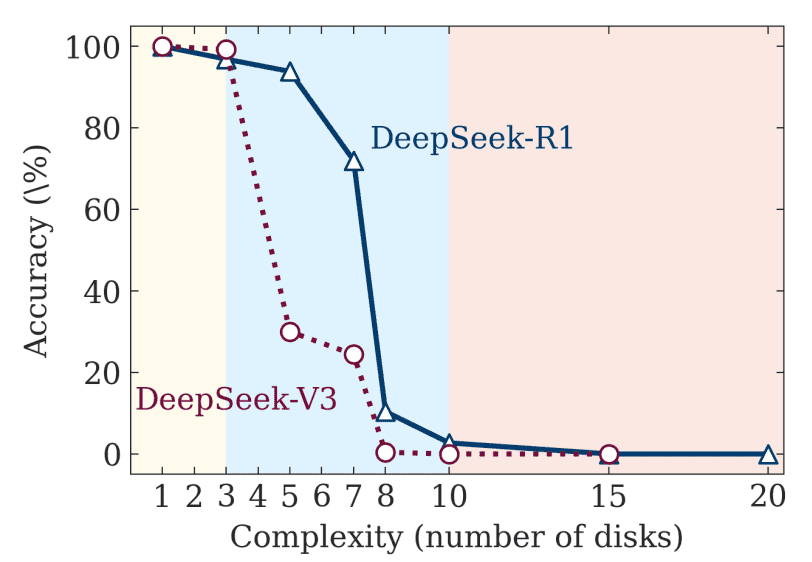

Very recently (early June 2025), Apple released a paper called *The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity*. This has been widely understood to demonstrate that reasoning models don’t “actually” reason. I do not believe that AI language models are on the path to superintelligence. But I still don’t like this paper very much. What does it really show? And what does that mean for how we should think about language models?

I have a few issues with this paper. First, I don’t think Tower of Hanoi puzzles (or similar) are a useful example for determining reasoning ability. Second, I don’t think the complexity threshold of reasoning models is necessarily fixed. Third, I don’t think that the existence of a complexity threshold means that reasoning models “don’t really reason”.

Sean Goedecke critiques a widely cited paper from Apple’s research team, published around a year ago, that questions whether reasoning models actually reason. The critique undermines the central argument of that paper.