Mercury 2 won’t outthink frontier models but diffusion might out-iterate them

February 27, 2026

Inception Labs have just released Mercury 2 – their latest diffusion large language model and it’s pretty solid. This goes beyond a technical proof of concept and into the realms of something that is genuinely interesting as well as practically useful.

To my mind, this is the first “prime time” diffusion language model that’s viable for developers. One that is blisteringly fast at text generation and powerful enough to do real work – especially for coding, which I’ll get to in a moment.

Standard state-of-the-art models, like those from Anthropic and OpenAI, are autoregressive: they work by predicting the next token. That approach has led to some extraordinary results, but it has a significant performance bottleneck, because each token has to wait for the one before it.

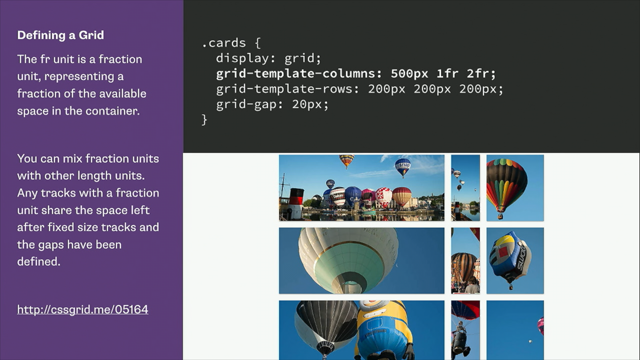

A different approach, called diffusion, is what’s behind image generation models. Applied to text, it works, in a nutshell, by generating tokens between other tokens rather than simply predicting the one that comes at the end.

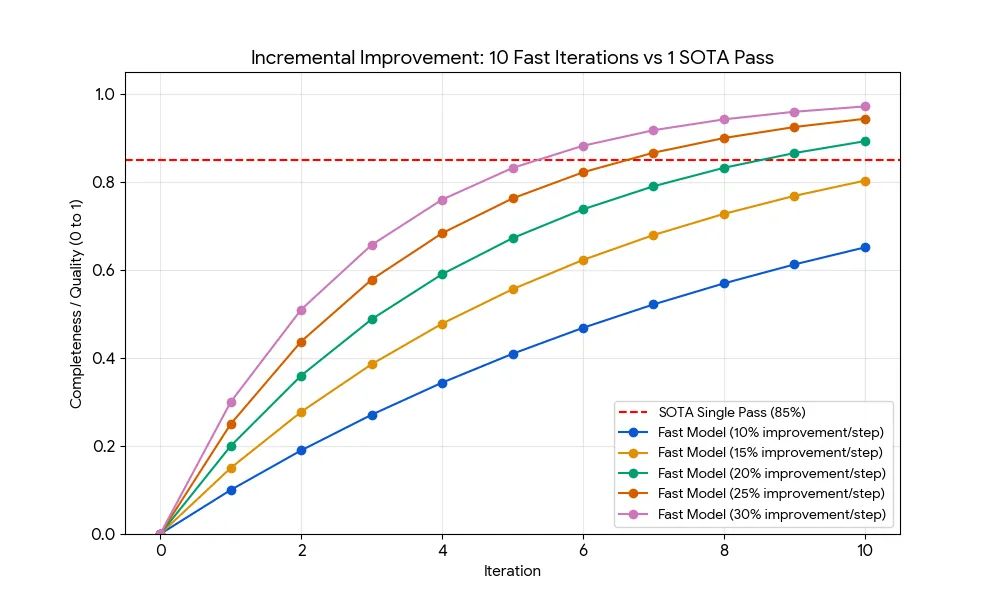
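To make the contrast concrete, here is a toy sketch in Python. It is not how Mercury 2 or any real model works internally: there is no neural network, the "model" simply copies from a known target sentence, and the function names and the `per_step` parameter are invented for illustration. All it shows is the difference in the number of sequential model calls when tokens are produced one at a time versus several at once, anywhere in the sequence.

```python
import random

TARGET = "the quick brown fox jumps over the lazy dog".split()
MASK = "_"


def autoregressive_decode(target):
    """Toy left-to-right decoding: one (pretend) model call per token."""
    out, calls = [], 0
    while len(out) < len(target):
        out.append(target[len(out)])  # stand-in for "predict the next token"
        calls += 1
    return out, calls


def diffusion_style_decode(target, per_step=3, seed=0):
    """Toy diffusion-style decoding: start fully masked, then fill several
    positions per (pretend) model call, in no particular order.

    per_step is a hypothetical knob, not a real model setting.
    """
    rng = random.Random(seed)
    out, calls = [MASK] * len(target), 0
    while MASK in out:
        masked = [i for i, tok in enumerate(out) if tok == MASK]
        for i in rng.sample(masked, min(per_step, len(masked))):
            out[i] = target[i]  # stand-in for "denoise position i"
        calls += 1
    return out, calls


ar_tokens, ar_calls = autoregressive_decode(TARGET)
df_tokens, df_calls = diffusion_style_decode(TARGET)
print(ar_calls, df_calls)  # 9 3
```

Both routes end with the same nine-word sentence, but the autoregressive loop needs nine sequential steps while the diffusion-style loop needs three. A real diffusion model still has to decide which positions it is confident about at each step, which this sketch skips entirely.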

There’s been an increasing amount of work put into applying diffusion to text-based models. Here Andrew Fisher looks at Mercury 2, a new diffusion model, and at how, despite being less capable than frontier models, its dramatically improved speed might close the gap in many programming applications.