Making coding agents (Claude Code, Codex, etc.) reliable – Upsun Developer Center

March 4, 2026

That’s the pitch every engineering team is hearing right now. Tools like Claude Code, Cursor, Windsurf, and GitHub Copilot keep getting better at generating code. The demos are impressive. The benchmarks keep climbing. And your timeline is full of people showing off AI-written features shipping to production.

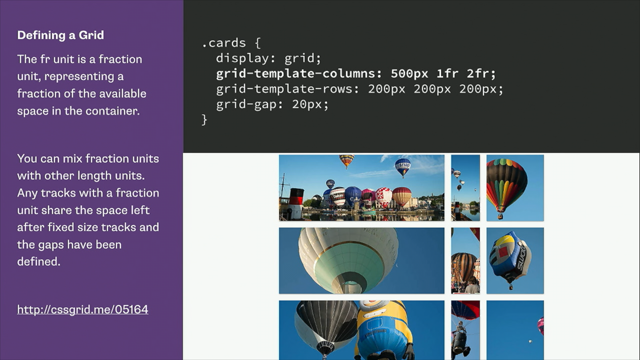

Software 2.0 works differently. You specify objectives and search through the space of possible solutions. If you can verify whether a solution is correct, you can optimize for it. The key question becomes: is the task verifiable?
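To make "specify an objective, then search" concrete, here is a minimal sketch in Python. It is not taken from any particular tool: the `verify` and `search` functions and the three toy candidates are hypothetical names made up for illustration, and the "objective" is simply a handful of input/output checks for a sort function. In a real agent loop the candidates would be generated by a model rather than listed by hand.

```python
from typing import Callable, Iterable, List, Optional

# A candidate solution is any function from a list of ints to a list of ints.
Candidate = Callable[[List[int]], List[int]]

# The objective, expressed as checks instead of instructions (illustrative only).
CASES = [
    ([3, 1, 2], [1, 2, 3]),
    ([], []),
    ([2, 2, 1], [1, 2, 2]),
]


def verify(candidate: Candidate) -> bool:
    """Return True only if the candidate passes every check."""
    for given, expected in CASES:
        try:
            if candidate(list(given)) != expected:
                return False
        except Exception:
            # A crashing candidate counts as a failed verification.
            return False
    return True


def search(candidates: Iterable[Candidate]) -> Optional[Candidate]:
    """Walk the candidate space and return the first verified solution."""
    for candidate in candidates:
        if verify(candidate):
            return candidate
    return None


# Hypothetical candidates standing in for model-generated attempts.
def reversed_copy(xs: List[int]) -> List[int]:
    return xs[::-1]


def identity(xs: List[int]) -> List[int]:
    return xs


def sorted_copy(xs: List[int]) -> List[int]:
    return sorted(xs)


winner = search([reversed_copy, identity, sorted_copy])
```

The search loop never needs to know how a candidate works, only whether it verifies. That is why the quality of the checks, rather than the cleverness of the generator, sets the ceiling on what the loop can reliably produce.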

Software engineering has spent decades building verification infrastructure across eight distinct areas: testing, documentation, code quality, build systems, dev environments, observability, security, and standards. This accumulated infrastructure makes code one of the most favorable domains for AI agents.

More thoughts on what software engineering looks like as agentic coding systems produce more and more of the code we work with. It is often observed that the human role in this becomes guidance followed by verification. But, as others have noted, that verification doesn’t scale if it means humans reading code line by line, something we rarely if ever do anyway.