A Dream of Spring for Open-Weight LLMs: 10 Architectures from Jan-Feb 2026

March 6, 2026

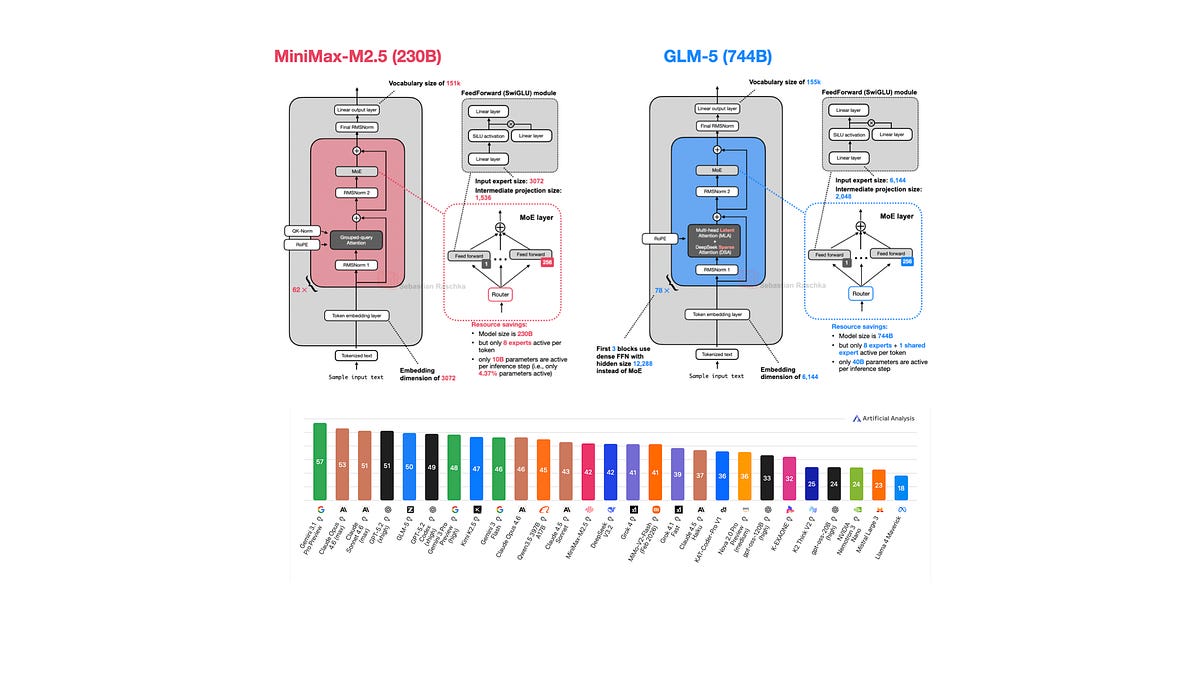

In this article, I will walk you through the ten main releases in chronological order, with a focus on their architectural similarities and differences.

Since there’s a lot of ground to cover, I will refer to my previous article, The Big LLM Architecture Comparison, for background on certain technical topics (such as Mixture-of-Experts, QK-Norm, and Multi-head Latent Attention) rather than repeating those explanations here.

Open-weight models, even when very large, offer the prospect of running our own inference locally, which can save money and address privacy concerns. But are they up to scratch? Here is a detailed overview of 10 high-profile open-weight models from early 2026.