AGENTS.md is the wrong conversation

March 3, 2026

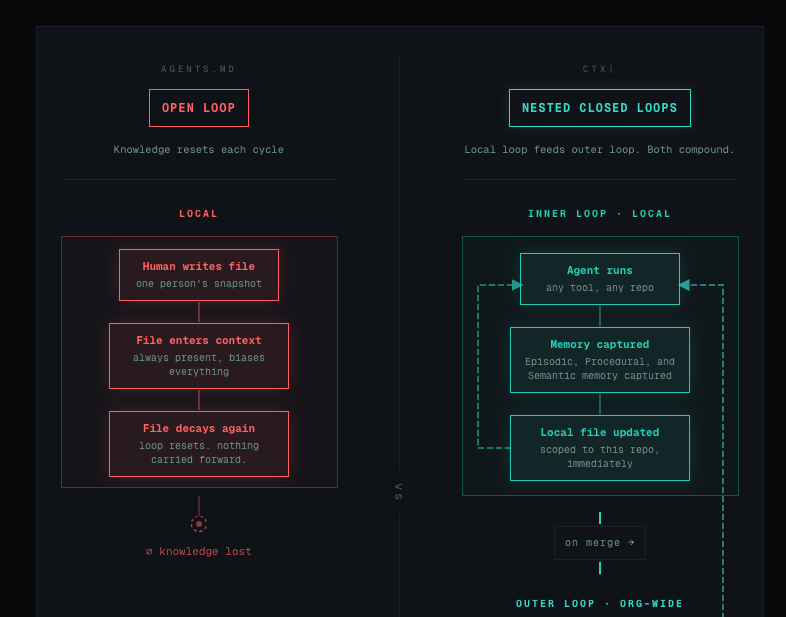

A paper dropped this week that tested AGENTS.md files — the repo-level context documents that every AI coding tool now recommends — across multiple models and real GitHub issues. The result was uncomfortable: context files reduced task success rates compared to no file at all, while inflating inference costs by over 20%.

Theo’s explanation of why this happens is the clearest in the conversation. Your prompt is not the start of the context. There’s a hierarchy: provider-level rules, system prompt, developer message, user messages — and AGENTS.md sits in the developer message layer, above your prompt, always present, biasing everything. The critical insight: whatever you put in context becomes more likely to happen. You can’t mention tRPC “just in case” and expect the model not to reach for it. If you tell it about something, it will think about it — even when it’s not relevant. That’s the mechanism. That’s why these files backfire.
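To make the layering concrete, here is a toy sketch of how a context might be assembled before your prompt is ever read. It is an illustration under stated assumptions, not how any particular tool builds its context: the `Message` type, `assemble_context`, `mentions_before_prompt`, and all the example strings are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Message:
    layer: str    # "provider", "system", "developer", or "user"
    content: str


def assemble_context(
    provider_rules: str,
    system_prompt: str,
    agents_md: Optional[str],
    user_prompt: str,
) -> List[Message]:
    """Return messages in the order described above (hypothetical helper).

    The AGENTS.md text, when present, lands in the developer layer,
    ahead of whatever the user types.
    """
    messages = [
        Message("provider", provider_rules),
        Message("system", system_prompt),
    ]
    if agents_md:
        messages.append(Message("developer", agents_md))
    messages.append(Message("user", user_prompt))
    return messages


def mentions_before_prompt(messages: List[Message], term: str) -> bool:
    """True if `term` appears in any layer that precedes the user prompt."""
    return any(
        term.lower() in m.content.lower()
        for m in messages
        if m.layer != "user"
    )


# Example strings are invented for illustration only.
context = assemble_context(
    provider_rules="(provider-level rules)",
    system_prompt="You are a coding agent.",
    agents_md="We use tRPC for some internal services.",
    user_prompt="Fix the date parsing bug in utils/date.ts",
)
```

In this sketch the user never mentions tRPC, yet `mentions_before_prompt(context, "tRPC")` is true for every task run in the repo, which is the "always present, biasing everything" effect in miniature.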

Three or so years ago, when LLMs first arrived, we started sharing prompts: tricks, magical incantations that we were sure helped these models do their job better. “You are an incredibly intelligent software engineer…” was how we were supposed to start our prompts. Over time, prompting faded into the background as we realised that broader context was significant and prompts were only one part of it.

Now there is a magical set of files we should include to provide context to every project, except perhaps there isn’t. This article looks at the current state of context for software development with tools like Claude Code and Codex.