Three AI customisation concepts by Anna McPhee

December 4, 2025

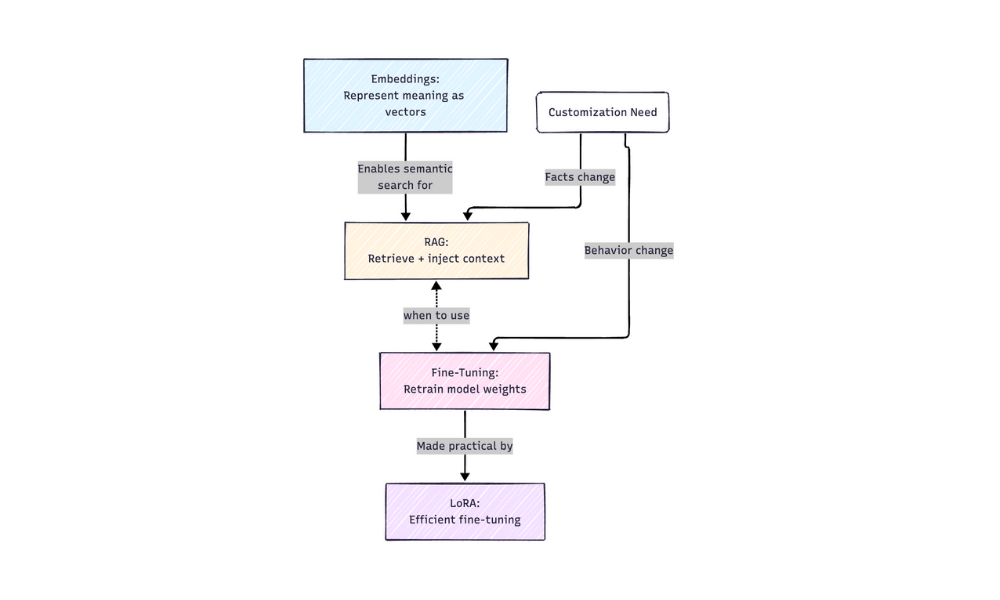

I’ve narrowed this down to three concepts that feel foundational – the ones I keep coming back to when I’m trying to understand how AI systems actually work, or when I’m explaining MCP to someone, or when I’m making decisions about how to build something. These three form a kind of progression: understanding how AI represents meaning, then how you customise it, then how you make customisation practical.

The patterns between these concepts interest me as much as the concepts themselves: how embeddings enable RAG, how LoRA makes fine-tuning accessible, and how the choice between RAG and fine-tuning depends on whether you’re teaching facts or behaviour. These connections make the whole landscape easier to navigate.
